Mimicked Voices and Nonhuman Listening: AI Deepfakes, Speech, and Sonic Manipulation in the Digital War on Ukraine

The essays collected in this series (link to the Introduction) trace how nonhuman listening operates through sound, speech, and platformed media across distinct but interconnected domains. Across these accounts, listening no longer secures meaning or relation; it becomes a site of contestation, where sound is mobilized, processed, and weaponized within systems that privilege circulation, recognition, and response over truth. In this contribution, Olga Zaitseva-Herz examines how nonhuman listening operates under conditions of war, where AI-generated voices and deepfakes destabilize the very grounds of auditory trust. Through the case of Ukraine, she shows how platforms and political actors alike exploit algorithmic listening systems to amplify affect, circulate disinformation, and transform voice into a tool of psychological warfare. Listening, in this context, becomes not a means of understanding but a terrain of uncertainty. –Guest Editor Kathryn Huether

—

Russia’s full-scale invasion of Ukraine has unfolded as the most digitally mediated war to date, shaped not only by what circulates online but by how content is heard, interpreted, and amplified. Here, listening is not limited to human hearing: it also includes algorithmic systems that detect, rank, and amplify content, as well as political actors and online publics who interpret and recirculate it. Social media platforms—Telegram, Instagram, TikTok, Facebook—have become sites of psychological warfare where AI-generated audio, video, text, and image-based content are crafted to manipulate perception and provoke rapid emotional responses, often through algorithmic systems attuned to virality and affect. Ukrainian political authorities regularly caution users by saying that everything one reads, hears, or sees could be a psychological weapon. This is not rhetorical. Content is often designed to produce outrage, shock, and despair—emotions that travel quickly across platforms and influence public mood.

AI is used to create fake news videos, synthetic voices, and deepfake conversations, complicating how authenticity is heard and assessed. Some recordings simulate “leaked” phone calls revealing political dissent or strategic plans and are then shared on platforms such as Telegram, Instagram, and Facebook. At the same time, because anyone’s voice can now be generated with AI, speakers can claim that a genuine recording of their own voice is AI-generated. A widely circulated case involved Russian music producer Iosif Prigozhin, whose alleged call criticizing the Kremlin provoked significant backlash. Soon afterward, he claimed the recording was an AI forgery, a statement whose truth remains unclear but which strategically exploits growing public awareness of deepfakes as a means of discrediting or distancing oneself from damaging material. Deepfakes thus do not merely deceive; they also destabilize the conditions of listening and trust, turning listening itself into a site of strategic uncertainty. This uncertainty exploits a growing crisis of trust in listening itself, where voices can always be disavowed as synthetic. Against this backdrop, music and voice emerge as especially powerful media for manipulation, parody, retaliation, and symbolic struggle.

AI Songs as a Tool of Revenge

AI generative tools are also used for irony or parody, as in the viral remake “Samotni Moskali” [Lonely Muscovites], which mocks the Ukrainian pop star Ani Lorak, who moved to Russia. On November 13, 2023, the Telegram channel of Ukrainian journalist and politician Anton Gerashchenko posted a video remake of Ani Lorak’s old song “Poludneva Speka” [Midday Heat], renamed “Samotni Moskali.” The video quickly went viral on social media. Her big hit from the ’00s was remade into strongly pro-Ukrainian content, featuring clips from the current frontlines to illustrate new lyrics generated with an AI voice engineered to closely mimic Lorak’s vocal timbre and affect. The parody relies on listeners’ recognition of her voice and affective style, while the imitation introduces a sharp shift in content between the original and the synthetic lyrics.

This social media burst was a response to Ani Lorak’s claimed political neutrality in the context of Russia’s full-scale war against Ukraine, despite clear signs that she supported Russia. Her Ukrainian audience felt betrayed, and the campaign seemed aimed both at revenge and at making her break with her Ukrainian fan base public, showing the impact of her choices. It led to many satirical memes, including AI-generated songs related to her stage persona, appearing on social media. Knowing that, under current Russian politics, she could get into trouble there if the government took the promoted “support” for the Ukrainian army seriously, the revenge group went even further by creating a homepage called “Ani Lorak Foundation,” completely dedicated to fundraisers for the Ukrainian army and presented as Lorak’s own project in which she showcases her support of Ukrainian battalions. Some military drones deployed by the Ukrainian side even ended up bearing stickers with the name of the “Ani Lorak Foundation.” This case demonstrates how AI tools became instruments of public satire, sabotage, and protest in the context of the current full-scale war.

AI Songs as a Weapon

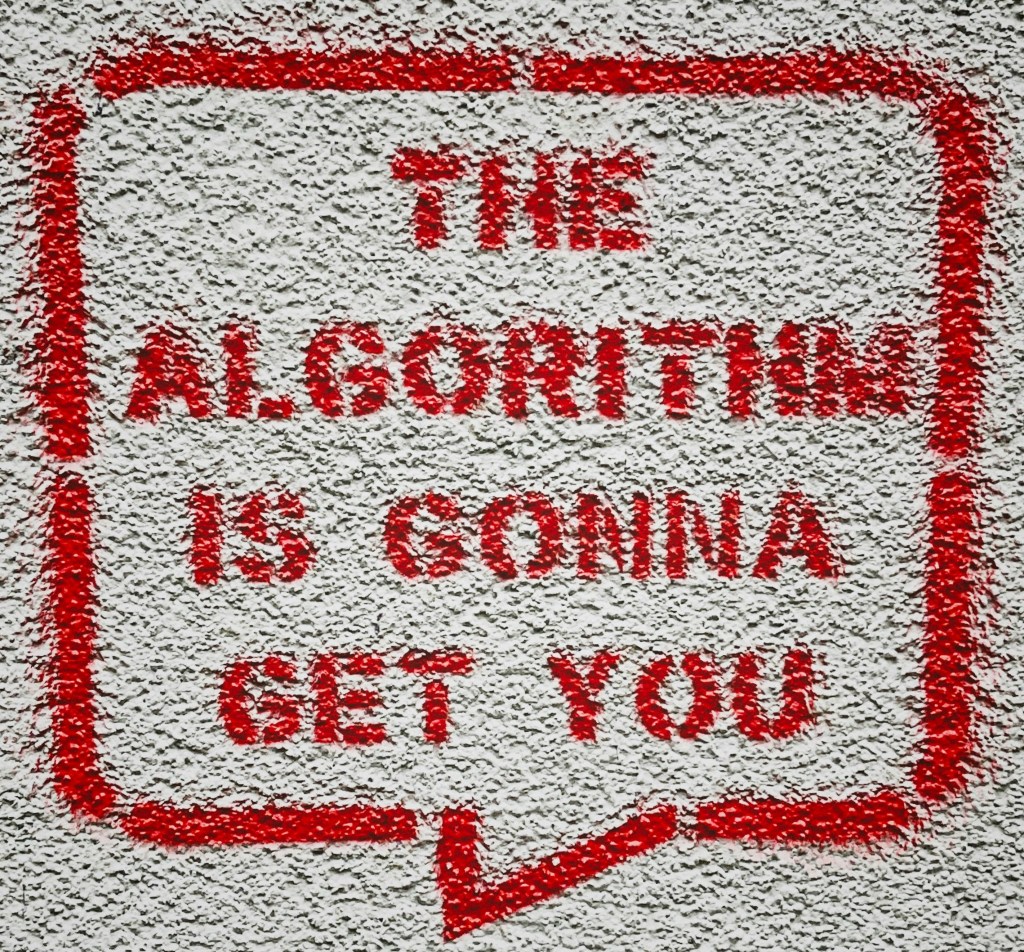

During the full-scale invasion, Russia has been using AI-generated music as a weapon for propaganda and disinformation. In 2023, multiple songs in Ukrainian were created to disrupt Ukraine’s military mobilization efforts and went viral. One of these, the song “Mamo, Ia Ukhyliant” [Mother, I am a Draft Dodger], became particularly popular in a multitude of variations. Their circulation shows how platforms “listen” to wartime content through metrics of repetition, provocation, and affective intensity, amplifying messages not because they are true, but because they are likely to generate reaction and spread. These songs were algorithmically promoted on TikTok and successfully sparked a viral challenge aimed at undermining Ukraine’s mobilization in 2024 by encouraging Ukrainian men to evade the draft, flee, and party abroad instead. In response, Ukrainian intelligence released an official statement identifying these songs as products of a Russian disinformation campaign.

This example shows how AI-generated songs are actively used as powerful tools of war, spreading political messages and influencing people’s political choices. Moreover, the fact that all these songs about draft evasion were released in Ukrainian highlights the goal of targeting Ukrainian men specifically, since Russian men usually don’t speak Ukrainian and therefore wouldn’t be affected by the content. Furthermore, the simultaneous presence of a large number of these “draft dodger” songs created the impression of widespread societal acceptance through repetition and algorithmic amplification. In this way, repetition itself became a signal of apparent legitimacy: the more frequently such content circulated, the more easily platforms and audiences could register it as evidence of broader consensus around draft evasion among Ukrainians.

AI Pictures on Facebook Mimicking Sound and Sonic Affect

Visual disinformation follows similar viral patterns. There has been a surge of AI-generated images with war-related content that often mimic sound to intensify emotional impact and prompt affective listening. Typical examples show a screaming child amid the rubble or a crying soldier in a Ukrainian uniform, paired with a patriotic, pro-Ukrainian message that encourages interaction, such as a like or comment. Even without actual sound, such images solicit a kind of affective listening in which suffering is not literally heard but imagined, projected, and emotionally registered through visual cues. Although this truth-blurring pattern attracted significant attention among many Ukrainians, ironic counter-memes also emerged, mocking its primitive approach.

According to warnings from the Ukrainian online security agency, the accounts posting these images aim to interact with pro-Ukrainian users, ultimately adding them as friends or followers. Then, once they have built a large enough audience, they shift the content they share toward pro-Russian material. The strategy relies on gathering an audience that is specifically pro-Ukrainian, since these are the users who interact with images of crying soldiers or the suffering of the Ukrainian people at the front. In this sense, the filtering process functions as a form of nonhuman listening at the level of audience formation: platforms and account managers learn which publics respond to particular emotional cues, cultivate those publics through repeated engagement, and later redirect them toward different ideological content. This creates a filtering mechanism through which an initially pro-Ukrainian audience is gathered, profiled, and later ideologically redirected, alienating loyal followers while pulling political opinion in a more pro-Russian direction.

Pro-Russian AI Songs in Germany to Weaken Support for Ukraine

In Germany, AI-generated songs are being used as propaganda tools to promote pro-Russian sentiment and anti-Ukrainian views. The right-wing party AfD has embraced AI songs as a potent tool in this regard. Multiple, mostly anonymous YouTube accounts have emerged spreading right-wing ideas, with songs that not only address German political issues but also openly support Russia. For instance, one song titled “Meine Stimme Habt ihr nicht” [You don’t get my vote] features an AI-created avatar of a tall, strong woman holding German and Russian flags. A version of the same song was also released in Russian. The lyrics criticize Germany’s political course, including military aid to Ukraine, and express a desire to be friends with Russia. The song’s circulation in both German and Russian suggests that listening is being calibrated for different national and linguistic publics, allowing similar political messages to be heard through distinct affective and ideological frames shaped by language, audience, and context.

Contemporary propaganda is increasingly shaped not just by human intent but by rapidly developing nonhuman listening systems—both in production and amplification. Algorithmic listening and perception are exploited to privilege what provokes, not what is true, complicating efforts to regulate digital hate, emotion, and influence. In this context, listening becomes not only a human practice of interpretation, but also a technical system of detection, ranking, and amplification—and, crucially, a site of failure where truth, trust, and perception can no longer be reliably aligned.

—

Featured Image: Photo by Stanislav Vlasov on Unsplash.

—

Olga Zaitseva-Herz is an ethnomusicologist working at the intersection of Ukrainian music, war, displacement, and digital culture. She is currently a postdoctoral researcher at the Kule Centre for Ukrainian and Canadian Folklore at the University of Alberta and a guest scholar at Think Space Ukraine at the University of Regensburg. Her research examines how song operates as a medium of political mediation, cultural diplomacy, and historical memory, with a particular focus on popular music and AI-generated sound during Russia’s full-scale invasion of Ukraine. Combining perspectives from ethnomusicology, sound studies, and media analysis, her work investigates how music shapes narratives of resistance, belonging, and global visibility, and how sonic practices illuminate the broader entanglements of culture, technology, and power.

—

REWIND! . . .If you liked this post, you may also dig:

Hate & Non-Human Listening, an Introduction–Kathryn Huether

Your Voice is (Not) Your Passport—Michelle Pfeifer

Mapping the Music in Ukraine’s Resistance to the 2022 Russian Invasion—Merje Laiapea

SO! Amplifies: An Interactive Map of Music as Ukrainian Resistance to the 2022 Russian Invasion—Merje Laiapea
