Sounding Out! Unplugged: “Power in Listening” (August 2026)

Hello listeners + readers!

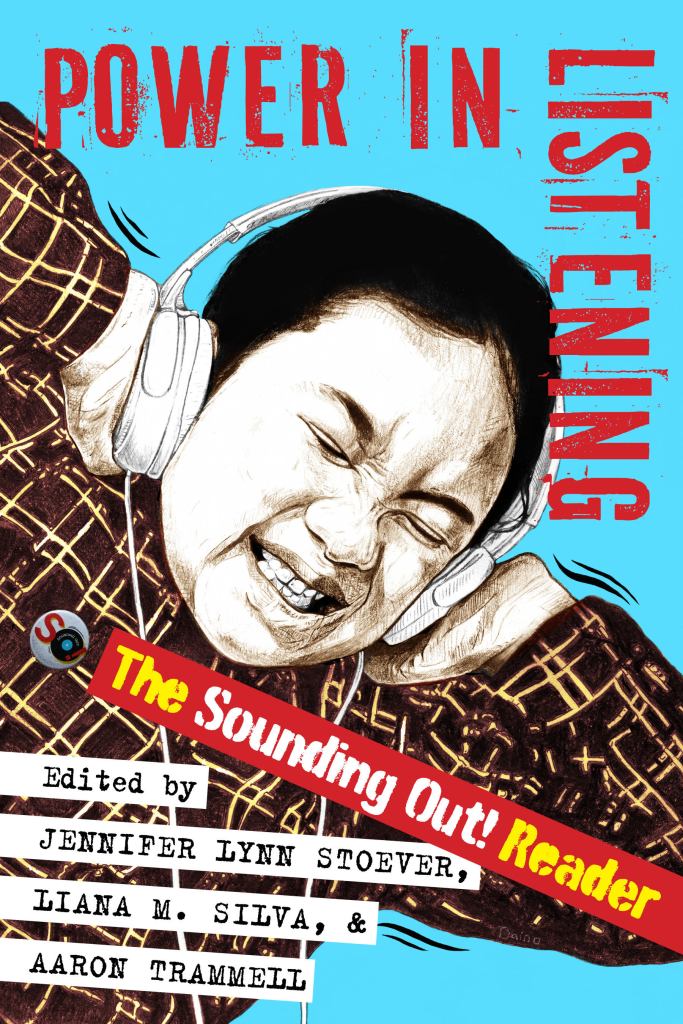

We usually take July as a BYE month to celebrate our yearly blog-o-versary, but this year, we are going bigger! Team SO! is pausing for the full summer–June, July, AND August–to catch our breath in advance of the publication of the official Sounding Out! print anthology, Power in Listening (New York University Press) on August 25, 2026 (although you can pre-order now, if you’d like, at Indiepubs, direct from NYU, and other book outlets). This book is a long time coming and we are really proud of what we have put together. It’s a fresh mix of brand new essays with fan-favorites that have been revised, expanded and fully updated to the present, with an introduction by the editorial collective and a foreword by SO!’s very own Neil Verma.

Power in Listening is a love letter to everyone who has participated in the ongoing collective project of the blog over our first 15 years and a fantastic way to kick off the future together. Like the blog, it’s sharp, accessible, gorgeously written, diverse, and ready for the classroom, the library, the beach, public transit, the coffee shop, the couch on a rainy day, the club . . .wherever you love to read, but now you can be unplugged too, which we all need more than ever. Scroll down for more details about the book, including a full author list!

Enjoy the coming months–the book will be out there catching eyes in August and the blog will grab your ears once again this September! Please help us spread the word about the book–we’d love it if you’d tell two friends, so that they tell two friends, and so on, and so on, and so on. . .or you can share on social media, whatever works for you!

Thank you and SO! looking forward–see you in September!

JLS, SO! Ed-in-Chief

P.S. Details to come on release parties, conference events, speaking engagements, podcasts, broadcasts, and all that good stuff! If you’d like us to come out your way to talk about the book, the blog, and all things sound, we have a Google form for that! Contact NYU Press at this link if you are interested in reviewing the book in your publication: https://nyupress.org/resourcesold/for-media/

—

How listening shapes power

Power in Listening explores how listening shapes—and is shaped by—power. From the politics of “sad girl” Spotify playlists to the sonic architectures of surveillance and the gendered voices of Siri and Alexa, this collection investigates how sound and listening inform identity, embodiment, and social life. How does Beyoncé’s remix of her “elevator incident” expose the surveillance of Black bodies? How do deaf listeners use multiple senses to navigate sound? How are Latina voices racialized through ideas of volume and tone?

Building from the groundbreaking Sounding Out! blog, Power in Listening curates 36 new, revised, and expanded essays from scholars, artists, DJs, and activists across more than twenty disciplines. Together, they trace how auditory culture intersects with race, gender, sexuality, technology, and media—from radio and tape to streaming and AI.

Accessible yet rigorous, this reader reveals sound studies in motion: a field that listens as a form of inquiry, protest, and care. Each essay connects theory and everyday experience, offering tools to hear the world—and each other—more critically. Power in Listening invites readers to experience listening as a social practice, a political act, and a method of understanding one’s place within a resonant and contested public sphere.

—

Authors

Neil Verma, Nichole Prucha, Rami Stucky, Max Abner, Ola Mohammed, Christie Zwahlen, Art Blake, Liana Silva, Maria Chaves Daza, Tara Betts, Marlén Ríos, Kimberly Williams, Samantha Ege, Aaron Trammell, Christina Giacona, Andrew Salvati, Kemi Adeyemi, Enongo Lumumba-Kasongo, Andreas Pape, AO Roberts, Milena Droumeva, Steph Ceraso, Linda O’Keeffe, Michael Levine, Amanda Gutierrez, Asa Mendelsohn, Rebecca Lentjes, Priscilla Peña Ovalle, Justin Burton, Gustavus Stadler, Dolores Inés Casillas, Jennifer Lynn Stoever, Chris Chien, Benjamin Tausig, Hubert Gendron-Blais, Maile Costa Colbert, and Dustin Tahmahkera

Section Titles and Topics

- Sonic Presents

- Putting The “I” in Listening: Memoir as Method

- The Sound You Make Is Not Your Own: Our Social Voices

- “Hop With It, Rock With It”: Listening to Popular Culture

- Bits and Screeches: Technology and Sound

- Hitting the Streets: Space, Place, and Sound

- Panaudicism: Sound and Surveillance

- Listening While White: Sound and Racial Privilege

- “Can You Hear Me Now?”: Sound, Agency, and Activism

—

What folks are saying. . .

Spotlighting the work of emerging scholars under innovative rubrics like space, gender, time, race, and power, Power in Listening curates an impressive array of authors and disciplinary approaches of the highest caliber. This is a welcome, fresh take on the field of sound studies. ~Roshanak Kheshti, author of Modernity’s Ear: Listening to Race and Gender in World Music

From voice and memoir to technology, space, race, surveillance, and activism, Power in Listening centers captivating soundworkers and shows how listening can unsettle hierarchies and make new worlds audible. This sharply curated collection brings together newly revised classics from the blog as well as bold new essays that treat listening not as neutral perception, but as a site of power, struggle, pleasure, and possibility. Smart, generous, and unapologetically loud, this book doesn’t just reflect a field. It changes how you hear it. ~Karen Tongson, author of Norm Porn: Queer Viewers and the TV That Soothes Us

Not only chronicles the dynamism of the field of sound studies, but also beckons readers to find the listening experience to be an unmistakably political social practice. Power in Listening is an exceptional achievement, uniting scholars and artists across countless disciplines to foster conversations and new scholarship for years to come. ~Iván Ramos, author of Unbelonging: Inauthentic Sounds in Mexican and Latinx Aesthetics

—

Jennifer Lynn Stoever is Associate Professor of English at Binghamton University, founding Editor-in-Chief of Sounding Out!, and author of The Sonic Color Line: Race and the Cultural Politics of Listening.

Liana Silva is Managing Editor of Sounding Out! She is a teacher, writer, reader, and editor living in Houston, TX. She graduated from Binghamton University’s Department of English in 2012. In the past she was Editor-in-Chief of the professional publication Women in Higher Education.

Aaron Trammell is Assistant Professor of Informatics and Core Faculty in Visual Studies at UC Irvine and author of Repairing Play: A Black Phenomenology and The Privilege of Play. He is Editor-in-Chief of the journal Analog Games Studies and was an honoree of the hobby game industry’s prestigious Diana Jones Award.

This Past Weekend with Theo Von: Brocasting Trump, Part II

But first. . .

A Brief Synopsis of an Introduction to Bro-casting Trump: A Year-long SO! Series by Andrew Salvati

—

In total, Trump appeared on fourteen podcasts or video streams during his 2024 campaign, which together earned a combined 90.9 million views on YouTube and on other video streaming platforms, not even including audio podcast listens, which, because of the decentralized nature of RSS, are notoriously difficult to pin down.

That’s a lot.

In the following series of posts, I am particularly concerned with Trump’s success with the so-called podcast bros – partially because my own research interests are in the area of mediated masculinities, but also because they may have put him over the edge with a key demographic – with (white) Gen-Z men.

Over this series—which began in January 2026 with Logan Paul—I will examine several of Trump’s appearances on largely apolitical “bro” podcasts during the 2024 campaign season, including his interviews with Logan Paul, Theo Von, Shawn Ryan, Andrew Schulz, the Nelk Boys, and Joe Rogan. In the course of this examination, I will pay attention not only to what Trump said on these shows, but also to the way in which they established a sense of intimacy, and how that intimacy worked to underscore Trump’s reputation for authenticity. Along the way, I will also discuss the podcasts and podcasters themselves and attempt to locate them within the broader scope of the manosphere. Finally, given the passage of time since Trump’s appearances, I will consider to what extent, if any, individual hosts have become critical of his administration’s policies and actions – as Joe Rogan famously has.

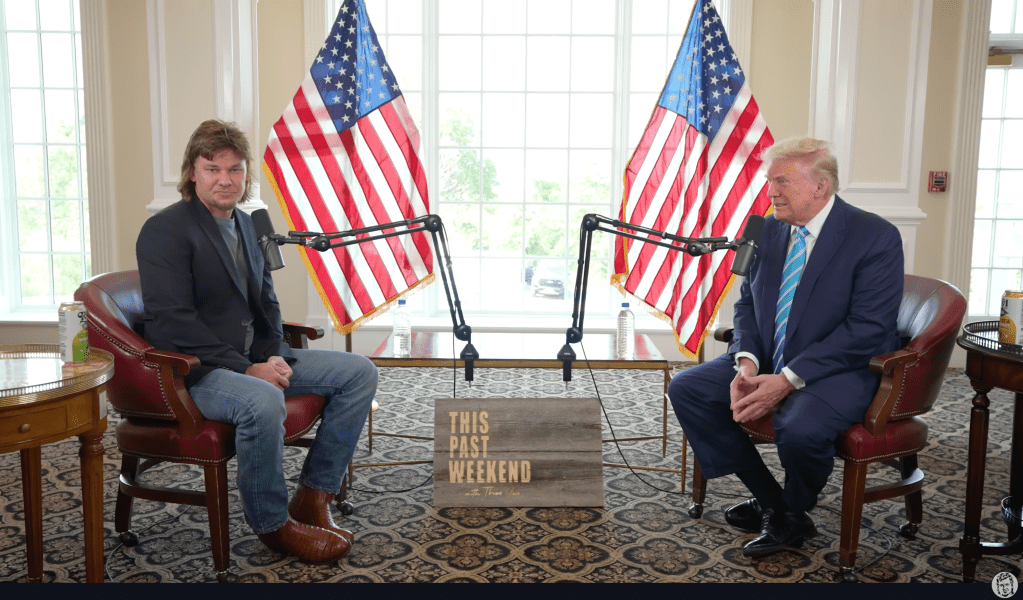

Here’s the second installment, on This Past Weekend with Theo Von.

***

With about five-and-a-half minutes remaining in the podcaster and comedian Theo Von’s August 2024 interview with Donald Trump, the conversation turned to the U.S. southern border. Thus far, the interview had not shied away from policy concerns; however, though the questions were earnest, the answers were evasive and superficial. Noting that he had hosted Border Patrol agents on his show in the past, Von reported that one of the biggest problems that the agency faced was that its officers were arresting the same people over and over again. The reason, according to Von, was that “the people that are coming in illegally aren’t being prosecuted.”

In his lilting Louisiana accent, the 44-year-old podcaster then asked the former president what he would do differently to alleviate the problem and make the border more secure. Like many of Von’s questions, it allowed Trump to indulge in his penchant for superlative and self-aggrandizement.

“So, the borders, well, I did it. I did it,” Trump declared. “We had the best border … we had the wall built. We had more going to come beyond, long beyond what I promised. I built hundreds of miles of wall, and it worked.”

Now, this post isn’t necessarily the venue for relitigating the failures of what was Trump’s signature project during his first administration, for reminding you, dear reader, that despite his promise on the 2016 campaign trail that he would “build a great, great wall on our southern border” (which Mexico would pay for), and despite signing an executive order just days after taking office that directed the Secretary of Homeland Security “to immediately plan, design, and construct a physical wall along the southern border,” by the end of his term in office in January 2021, only 452 miles of wall had been constructed – much of which was not new, and had merely replaced existing barriers. Such reminders can be found elsewhere.

Rather, the moment captured the credulity which Von freely gave the former president throughout the interview, and thereby highlighted what I suggested in my last post is the problem – or, from the candidate’s perspective, the virtue – of a media strategy that allotted a significant amount of time to non-journalists: it was unlikely that he’d get much pushback.

***

And after listening through the episode a few times and trying to put myself in the place of an apolitical Theo Von listener, or one perhaps too young to remember the first Trump administration, I began to more fully appreciate the extent to which Trump’s apparent authenticity is coupled with a sense that he is not just a political outsider, but an autonomous agent free of obligation to party. Typically, this last part comes out when Trump takes aim at “them” – Joe Biden, Kamala Harris, Chuck Schumer, Nancy Pelosi, and other unnamed Democratic elites, as well as any other members of the “deep state,” or “establishment” who oppose him.

In contrast to these shadowy figures, Trump presents himself as someone who, largely because of his wealth, remains independent, and as such, is uncorrupted by “them.” He can thus position himself as a man of the people, and in fact frequently trumpeted his own popularity during the episode – with Von only too happy to provide affirmation.

But this turn toward the border and to immigration policy is also significant in retrospect, given that Von has since been critical of the second Trump administration’s mass deportation policies, and of the Department of Homeland Security’s (DHS’s) unauthorized use of his image and voice in one of its marketing videos in a way that seemed as if he supported the department’s deportation efforts.

In the now-deleted video (part of which can be seen here), Von looks directly into the camera and says “Heard you got deported dude, bye.”

The comedian quickly took to X (formerly Twitter) to vent. “Yoo DHS I didn’t approve to be used in this,” he said in a post that he later deleted. “I know you know my address so send a check. And please take this down and please keep me out of your ‘banger’ deportation videos. When it comes to immigration my thoughts and heart are a lot more nuanced than this video allows. Bye!”

Roughly a week later, on October 2, 2025, Von returned to the subject on his podcast with an impassioned statement explaining the situation with the video to his listeners and outlining some of his own thoughts on immigration. Contextualizing the clip by saying that it had been made in a parking lot after one of his comedy shows as a joke – though in Von’s telling, what he said still comes off as callous, as the “girl” who approached him with the camera was trying to tell the comedian that her friend had recently been deported – Von went on to talk about the blowback he received as a result of the DHS video, which was in no way an accurate depiction of his complex thoughts on immigration.

“And my father immigrated here from Nicaragua, right?” he explained, his voice beginning to break. “Like one of my prized possessions is I have his immigration papers [from] when he came here. And I have them in a frame … and, so I have tons of thoughts about it, but this was just fucked up, right? It was fucked up. And it was everywhere. It was on all platforms and stuff.”

What Von seemed to be doing here was saying that, though he may have supported a tough line on illegal immigration and had little tolerance for those who had been admitted into the country with a criminal record, he could not necessarily get behind the Trump DHS’s indiscriminate deportation scheme, which was sweeping up immigrants who had come into the country the “right way” alongside those who maybe hadn’t.

However, in listening to Von’s Trump interview from 2024, it’s hard not to hear the future president laying the groundwork for what would become a maximalist strategy on immigration. “We have over 20 million people, in my opinion, right now, that came into our country [the number of unauthorized immigrants in the U.S. was estimated at 14 million in 2023]. Many come from prisons, jails, mental institutions, many terrorists,” Trump claimed, later adding that “we’re going to spend a lot of time getting the criminals out … we have a lot of people, hundreds of thousands of murderers. We have people, drug dealers … it’s not even believable.”

Although it would have been difficult at the time of the recording to imagine the terror that Trump’s Immigration and Customs Enforcement (ICE) sweeps would unleash on communities like Los Angeles, Chicago, New York, and Portland in the months following his return to office, we can hear in his attempts to vilify unauthorized foreign nationals, and in his fear-mongering about how many of them were bad actors, a justification for the use of blunt force rather than nuanced policy.

And it seemed like Von agreed, at least in principle, with the law-and-order logic underpinning Trump’s statements. “Oh, I don’t think people should be allowed to be in our country if they’re criminals,” he stated.

To give this conversation a charitable reading, it is perhaps likely that Von assumed that, once in office, Trump’s administration would have the tools to determine which foreign nationals were authorized to be in the country and which were not. Further, he may also have believed ICE would know who among this group had a criminal record – and not conduct mass roundups based on race.

Yet, as we should have all probably known by the summer of 2024, for Trump and his chief advisers, blunt force (and cruelty) was the point. Recall the so-called “Muslim Ban” instituted during Trump’s first term, which was hardly an example of a well-calibrated policy, but was rather a “total and complete shutdown” of travelers and immigrants from seven Muslim-majority countries (though even this wasn’t without its conflicts of interest as it excluded several countries like Saudi Arabia and the United Arab Emirates where Trump had business dealings).

Even the wall, which was conceived by Trump insiders in 2015 as a mnemonic device intended to help their boss to remember to mention illegal immigration at his campaign rallies, was deemed effective precisely because it was not subtle. As Trump 2016 campaign adviser Sam Nunberg told Business Insider, “I think one issue is people did understand walls … the wall in 2016 was symbolic of Donald Trump: common sense, practical solutions, simplified answers – as opposed to long nuanced, detailed policy speak.”

President Donald J. Trump’s signature is seen on a plaque on the border wall Tuesday, Jan. 12, 2021, at the Texas-Mexico border near Alamo, Texas. (Official White House Photo by Shealah Craighead) (PDM 1.0)

And this would be a fair characterization of Trump’s remarks on This Past Weekend – when Von asked earnest policy questions, Trump offered simplified, seemingly common sense responses that presented his own approach to the problems of government as something different than politics-as-usual, different because it was guided by an intensely practical, no-nonsense ethos.

As in his appearance on Logan Paul’s Impaulsive, Trump’s calm, yet forceful tone of voice on This Past Weekend tended to support his overall credibility as a leader capable of bringing logical solutions to a crisis-ridden government – of bringing decisive, masculine order to the chaos in Washington. Such was the impression that listeners may have gotten, for instance, from Trump and Von’s discussion of the president’s first-term executive order mandating price transparency for hospital care, which Von asked Trump about specifically, and which, Trump claimed, “would have brought down the cost of care by 50, 60%” if Biden and Kamala had enforced it.

But Trump’s appeals to common sense also provided cover for what might have otherwise been an embarrassing bit of hypocrisy. When Von began to turn the conversation toward the power of lobbyists, asking why it was that the government couldn’t seem to do anything about the so-called revolving door, Trump explained that there was a “whole constitutional thing there” (the First Amendment right to petition the government), and agreed with Von that it was “a problem and … a big problem,” adding that “we were [in his first term] doing things about it.”

What his administration did was issue an executive order banning executive branch employees from becoming lobbyists for a period of five years. This move may have seemed like it indicated a genuine desire to “drain the swamp,” as Trump routinely promised to do on the campaign trail in 2016, but, as ProPublica revealed in a 2019 report, his administration had actually hired 1 lobbyist for every 14 political appointees that it had made since taking office (281 in total), which was four times more than Obama had appointed six years into office.

Given that they had provided ingress to the executive branch, it is perhaps unsurprising that they would eventually provide egress, executive order notwithstanding. Indeed, on the final day of his first term, Trump revoked the order without giving explanation, clearing the way for members of his administration to secure lucrative lobbying gigs. Such contradictions, however, were more or less concealed behind Trump’s populist rhetoric, behind his apparent recognition that conflicts of interest are a problem in politics, or that medical debt is crushing Americans.

But taking a sound studies perspective, we can also see – or hear – how Trump’s tone of voice, which admittedly seems less energetic than it was during his Logan Paul interview, tended to convey an assurance that what he said was an authentic expression of his own thoughts and perspectives. Again, this was not the kind of stream-of-consciousness raving that we have come to expect from his rallies, but rather a low-key, intimate conversation about relevant issues and facts – or, at least facts as Trump saw them.

The implication here is that Trump as a political leader is free to operate in ways that mere politicians and government officials simply can’t because of their obligations to party, to donors, or to lobbyists. What is likely missed in all of this, however, is that what Trump is describing is a thoroughly authoritarian approach to political power, one that is of a piece with his claim that “I alone can fix it.” Positioning himself outside the political establishment – and even independent of the Republican Party of which he is nominally the leader – Trump can offer himself as a political messiah and claim the moral authority to act without regard for democratic processes in the name of a specious popular mandate.

In other words, by contrasting himself with “them,” and by holding himself at a distance from the dominant political order, Trump clears himself of the obligation to work with any group or individual that he deems to be opposed to his own quasi-populist agenda.

And for Von and those in his audience who are fed up with the status quo, that is a powerful appeal.

—

Featured Image: Theo Von, Edited James Tamim, Wikimedia Images (CC BY-SA 2.0)

—

Andrew J. Salvati is an adjunct professor in the Media and Communications program at Drew University, where he teaches courses on podcasting and television studies. His research interests include media and cultural memory, television history, and mediated masculinity. He is the co-founder and occasional co-host of Inside the Box: The TV History Podcast, and Drew Archives in 10.

—

REWIND! . . .If you liked this post, you may also dig:

Impaulsive: Bro-casting Trump, Part I—Andrew Salvati

Taters Gonna Tate. . .But Do Platforms Have to Platform?: Listening to the Manosphere—Andrew Salvati

Listening to MAGA Politics within US/Mexico’s Lucha Libre –Esther Díaz Martín and Rebeca Rivas

Gendered Sonic Violence, from the Waiting Room to the Locker Room–Rebecca Lentjes
