Erratic Furnaces of Infrasound: Volcano Acoustics

Welcome back to Hearing the UnHeard, Sounding Out’s series on how the unheard world affects us, which started out with my post on hearing large and small, continued with a piece by China Blue on the sounds of catastrophic impacts, and now continues with the deep sounds of the Earth itself by Earth Scientist Milton Garcés.

Faculty member at the University of Hawaii at Manoa and founder of the Earth Infrasound Laboratory in Kona, Hawaii, Milton Garcés is an explorer of the infrasonic, sounds so low that they circumvent our ears but can be felt resonating through our bodies as they do through the Earth. Using global networks of specialized detectors, he explores the deepest sounds of our world, from the depths of volcanic eruptions to the powerful forces driving tsunamis to the trails left by meteors through our upper atmosphere. And while the raw power behind such events is overwhelming to those caught in them, his recordings let us appreciate the sense of awe felt by those who dare to immerse themselves.

In this installment of Hearing the UnHeard, Garcés takes us on an acoustic exploration of volcanoes, transforming what would seem a vision of the margins of hell into a near-poetic immersion within our planet.

– Guest Editor Seth Horowitz

—

The sun rose over the desolate lava landscape, a study of red on black. The night had been rich in aural diversity: pops, jetting, small earthquakes, all intimately felt as we camped just a mile away from the Pu’u O’o crater complex and lava tube system of Hawaii’s Kilauea Volcano.

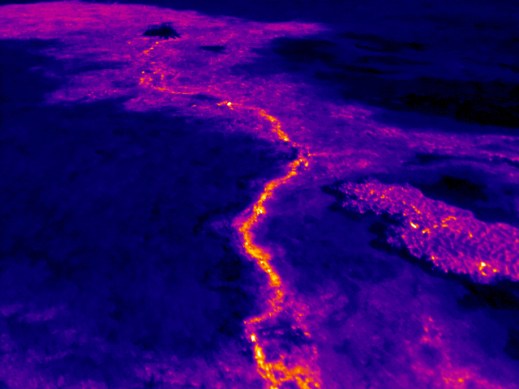

The sound records and infrared images captured over the night revealed a new feature downslope of the main crater. We donned our gas masks, climbed the mountain, and confirmed that indeed a new small vent had grown atop the lava tube and was radiating throbbing bass sounds. We named our acoustic discovery the Uber vent. But, like most things volcanic, our find was transitory: the vent eventually melted and was recycled into the continuously changing landscape, as ephemeral as the sound that led us there in the first place.

Volcanoes are exceedingly expressive mountains. When quiescent they are pretty and fertile, often coyly cloud-shrouded, sometimes snowcapped. When stirring, they glow, swell and tremble, strongly scented, exciting, unnerving. And in their full fury, they are a menacing incandescent spectacle. Excess gas pressure in the magma drives all eruptive activity, but the style of that activity varies. Kilauea Volcano in Hawaii has primordial, fluid magmas that degas well, so violent explosive activity is not as prominent as in volcanoes that have more evolved, viscous material.

Well-degassed volcanoes pave their slopes with fresh lava, but they seldom kill in violence. In contrast, the more explosive volcanoes demolish everything around them, including themselves; seppuku by fire. Such massive, disruptive eruptions often produce atmospheric sounds known as infrasounds, an extreme basso profondo that can propagate for thousands of kilometers. Infrasounds are usually inaudible, as they reside below the 20 Hz threshold of human hearing and tonality. However, when intense enough, we can perceive infrasound as beats or sensations.

Like a large door slamming, the concussion of a volcanic explosion can be startling and terrifying. It immediately compels us to pay attention, and it’s not something one gets used to. The roaring is also disconcerting, especially if one thinks of a volcano as an erratic furnace with homicidal tendencies. But occasionally, amidst the chaos and cacophony, repeatable sound patterns emerge, suggestive of a modicum of order within the complex volcanic system. These reproducible, recognizable patterns permit the identification of early warning signals, and keep us listening.

Each of us now has technology within close reach to capture and distribute Nature’s silent warning signals, be they from volcanoes, tsunamis, meteors, or rogue nations testing nukes. Infrasounds, long hidden under the myth of silence, will be everywhere revealed.

The “Cookie Monster” skylight on the southwest flank of Pu’u O’o. Photo by J. Kauahikaua, 27 September 2002.

I first heard these volcanic sounds in the rain forests of Costa Rica. As a graduate student, I was drawn to Arenal Volcano by its infamous reputation as one of the most reliably explosive volcanoes in the Americas. Arenal was cloud-covered and invisible, but its roar was audible and palpable. Here is a tremor (a sustained oscillation of the ground and atmosphere) recorded at Arenal Volcano in Costa Rica with a 1 Hz fundamental and its overtones:
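A tremor like this can be pictured as a fundamental plus integer overtones. As an illustration only (this is not the processing behind the Arenal record), the minimal Python sketch below synthesizes a hypothetical 1 Hz tremor with two overtones, using made-up amplitudes and an assumed 20 Hz sample rate, and then recovers each harmonic's amplitude with a direct single-frequency DFT. The function names are hypothetical.

```python
import math


def harmonic_tremor(f0_hz, amplitudes, fs_hz, duration_s):
    """Synthesize a hypothetical harmonic tremor.

    amplitudes[k] is the amplitude of the component at (k + 1) * f0_hz,
    so amplitudes[0] is the fundamental and the rest are overtones.
    The values used below are illustrative, not measured at Arenal.
    """
    n_samples = int(fs_hz * duration_s)
    return [
        sum(a * math.sin(2 * math.pi * (k + 1) * f0_hz * n / fs_hz)
            for k, a in enumerate(amplitudes))
        for n in range(n_samples)
    ]


def dft_magnitude(signal, fs_hz, freq_hz):
    """Amplitude of the sinusoid at freq_hz from a direct single-bin DFT.

    Exact when every component, including freq_hz, completes a whole
    number of cycles within the record.
    """
    n = len(signal)
    re = sum(x * math.cos(2 * math.pi * freq_hz * i / fs_hz)
             for i, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq_hz * i / fs_hz)
             for i, x in enumerate(signal))
    return 2 * math.hypot(re, im) / n


# 1 Hz fundamental plus overtones at 2 Hz and 3 Hz, 10 s sampled at 20 Hz.
tremor = harmonic_tremor(1.0, [1.0, 0.5, 0.25], fs_hz=20.0, duration_s=10.0)
for f in (1.0, 1.5, 2.0, 3.0):
    # prints 1.000, 0.000, 0.500, 0.250 for these four frequencies
    print(f"{f:.1f} Hz -> amplitude {dft_magnitude(tremor, 20.0, f):.3f}")
```

The energy sits only at the fundamental and its overtones, with nothing in between at 1.5 Hz. A real tremor record is noisier and its amplitudes are not known in advance, and a practical analysis would use an FFT rather than this per-frequency sum.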

In that first visit to Arenal, I tried to reconstruct in my mind’s eye what was going on at the vent from the diverse sounds emitted behind the cloud curtain. I thought I could blindly recognize rockfalls, blasts, pulsations, and ground vibrations, until the day the curtain lifted and I could confirm that my aural reconstruction closely matched the visual scene. I had imagined a flashing arc from the shock wave as it compressed the steam plume, and by patient and careful observation I could see it: a rapid shimmer slashing through the vapor. The sound of rockfalls matched large glowing boulders bouncing down the volcano’s slope. But there were also some surprises. Some visible eruptions were slow, so I could not hear them above the ambient noise. By comparing my notes to the infrasound records, I realized that these eruptions had left their deep acoustic mark, hidden in plain sight just below aural silence.

I then realized that one could chronicle an eruption through its sounds and recognize different types of activity that could be used for early warning of hazardous eruptions, even under poor visibility. At the time, I had thought only of the impact and potential hazard-mitigation value for nearby communities. This was in 1992, when only a handful of people on Earth knew or cared about infrasound technology. With the cessation of atmospheric nuclear tests in 1980 and the promise of constant vigilance by satellites, infrasound had been deemed redundant and had faded to near obscurity over two decades. Since there was little interest, we had scarce funding and were easily ignored. The rest of the volcano community considered us a bit eccentric and off the main research streams, but patiently tolerated us. However, discussions with my few colleagues in the US, Italy, France, and Japan were open, spirited, and full of potential. Although we didn’t know it at the time, we were about to live out the quote often attributed to Gandhi: “First they ignore you, then they laugh at you, then they fight you, then you win.”

Fast forward 22 years. A computer revolution took place in the mid-’90s. Construction of the global infrasound network of the International Monitoring System (IMS) began before the turn of the millennium, in full 24-bit broadband digital glory. Designed by the United Nations’ Comprehensive Nuclear-Test-Ban Treaty Organization (CTBTO), the IMS infrasound network is built to detect the minute pressure variations produced by clandestine nuclear tests at standoff distances of thousands of kilometers. This new, ultra-sensitive global sensor network and its cyberinfrastructure triggered an Infrasound Renaissance and opened new opportunities in the study and operational use of volcano infrasound.

Suddenly endowed with super-sensitive high-resolution systems, fast computing, fresh capital, and the glorious purpose of global monitoring for hazardous explosive events, our community grew rapidly and reconstructed fundamental paradigms early in the century. The mid-naughts brought regional acoustic monitoring networks in the US, Europe, Southeast Asia, and South America, and helped validate infrasound as a robust monitoring technology for natural and man-made hazards. By 2010, infrasound was part of the accepted volcano monitoring toolkit. Today, large portions of the IMS infrasound network data, once exclusive, are publicly available (see links at the bottom), and the international infrasound community has grown to the hundreds, evolving rapidly as new generations of scientists join in.

To capture infrasound, you need either a microphone with an extended low-frequency response or a barometer with an extended high-frequency response. The sensor data then need to be digitized for subsequent analysis. In the pre-millennium era, you’d drop a few thousand dollars to get a single, basic data acquisition system. But in the very near future, there’ll be an app for that. Once the sound is sampled, it looks much like a typical sound track, except that you can’t hear it. A single sensor record is of limited use because it does not contain enough information to unambiguously determine the arrival direction of a signal. So we use arrays and networks of sensors, using the time of flight of sound from one sensor to another to determine the direction and speed of arrival of a signal. Once we associate a signal type with an event, we can start characterizing its signature.
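The direction-finding idea can be sketched with the textbook plane-wave model: when a signal comes from far away, its arrival time at each sensor grows linearly with the sensor’s position along the propagation direction, so a least-squares fit of arrival-time differences recovers a horizontal slowness vector. Its direction gives the back-azimuth (where the sound came from) and its inverse length gives the apparent speed. The Python below is a hypothetical minimal sketch under those assumptions (far-field source, flat array, arrival times already measured, no wind correction); it is not the lab’s processing code, which in practice must also estimate the delays, for example by cross-correlating the sensor records.

```python
import math


def plane_wave_fit(positions_m, arrivals_s):
    """Least-squares plane-wave fit to arrival times across an array.

    positions_m: list of (east, north) sensor coordinates in meters.
    arrivals_s: arrival time of the same signal at each sensor, in seconds.
    Returns (back_azimuth_deg, apparent_speed_m_s): the direction the
    sound came FROM (clockwise from north) and its horizontal speed.
    """
    (x0, y0), t0 = positions_m[0], arrivals_s[0]
    sxx = sxy = syy = sxt = syt = 0.0
    # Accumulate the 2x2 normal equations using sensor 0 as reference.
    for (x, y), t in zip(positions_m[1:], arrivals_s[1:]):
        dx, dy, dt = x - x0, y - y0, t - t0
        sxx += dx * dx
        sxy += dx * dy
        syy += dy * dy
        sxt += dx * dt
        syt += dy * dt
    det = sxx * syy - sxy * sxy
    if abs(det) < 1e-12:
        raise ValueError("degenerate array geometry (collinear sensors)")
    # Cramer's rule for the slowness vector (seconds per meter).
    px = (sxt * syy - sxy * syt) / det
    py = (sxx * syt - sxy * sxt) / det
    # Slowness points along propagation; the source lies the opposite way.
    back_az = math.degrees(math.atan2(-px, -py)) % 360.0
    return back_az, 1.0 / math.hypot(px, py)


# Synthetic check: a plane wave from a hypothetical source to the
# northeast (back-azimuth 45 degrees) crossing a 4-sensor array at 340 m/s.
c = 340.0
baz = math.radians(45.0)
sx_true, sy_true = -math.sin(baz) / c, -math.cos(baz) / c
sensors = [(0.0, 0.0), (100.0, 0.0), (0.0, 100.0), (-80.0, 60.0)]
times = [sx_true * x + sy_true * y for x, y in sensors]
print(plane_wave_fit(sensors, times))  # approximately (45.0, 340.0)
```

At least three non-collinear sensors are needed; extra sensors over-determine the fit and average down timing errors, which is one reason arrays rather than single stations are used.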

Consider Kilauea Volcano. Although we think of it as one volcano, it actually consists of several crater complexes, each producing a variety of sounds. Here is the sound of a collapsing structure:

As you might imagine, it is very hard to classify volcanic sounds. They are diverse, and often superposed on other competing sounds (often from wind or the ocean). As with human voices, each vent, volcano, and eruption type can have its own signature. Identifying transportable scaling relationships as well as constructing a clear notation and taxonomy for event identification and characterization remains one of the field’s greatest challenges. A 15-year collection of volcanic signals can be perused here, but here are a few selected examples to illustrate the problem.

First, the only complete acoustic record of the birth of Halemaumau’s vent at Kilauea, 19 March 2008:

Here is a bench collapse of lava near the shoreline, which usually leads to explosions as hot lava comes in contact with the ocean:

Here is one of my favorites, from Tungurahua Volcano, Ecuador, recorded by an array near the town of Riobamba 40 km away. Although not as violent as the eruptive activity that followed it later that year, this sped-up record shows the high degree of variability of eruption sounds:

The infrasound community has had an easier time with the biggest and meanest eruptions, the kind that can inject ash to cruising altitudes and bring down aircraft. Our Acoustic Surveillance for Hazardous Eruptions (ASHE) project in Ecuador identified the acoustic signature of this type of eruption. Here is one from Tungurahua:

Our data center crew was at work when such a signal scrolled through the monitoring screens, arriving first at Riobamba, then at our station near the Colombian border. It was large in amplitude and just kept on going, with super heavy bass, and it was very recognizable. Such signals resemble jet noise, if a jet were designed by giants with stone tools. These sustained hazardous eruptions radiate infrasound below 0.02 Hz (periods longer than 50 seconds), so deep in pitch that it can propagate for thousands of kilometers, permitting robust acoustic detection and early warning of hazardous eruptions.
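The link between 0.02 Hz and 50 seconds is just the reciprocal relation between frequency and period, and multiplying the period by the speed of sound gives the wavelength. The short Python check below assumes a nominal sound speed of 343 m/s (dry air near 20 °C); the real atmosphere varies with temperature, altitude, and wind, so treat the wavelengths as order-of-magnitude figures.

```python
# Assumption: nominal sound speed in dry air near 20 degrees C. The real
# atmosphere varies with temperature, altitude, and wind.
SOUND_SPEED_M_S = 343.0


def period_s(freq_hz):
    """Period is the reciprocal of frequency."""
    return 1.0 / freq_hz


def wavelength_m(freq_hz, c=SOUND_SPEED_M_S):
    """Wavelength = sound speed x period = c / f."""
    return c / freq_hz


for label, f in [("edge of human hearing", 20.0),
                 ("Arenal tremor fundamental", 1.0),
                 ("sustained ash eruption", 0.02)]:
    print(f"{label}: {f:g} Hz, period {period_s(f):g} s, "
          f"wavelength {wavelength_m(f):g} m")
```

At 0.02 Hz the wavelength comes out to roughly 17 km, a thousand times longer than the roughly 17 m wavelength at the 20 Hz edge of human hearing.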

In collaboration with our colleagues at the Earth Observatory of Singapore (EOS) and the Republic of Palau, we infrasound scientists will be turning our attention to early detection of hazardous volcanic eruptions in Southeast Asia. One of the primary obstacles to technology evolution in infrasound has been the exorbitant cost of infrasound sensors and data acquisition systems, sometimes compounded by export restrictions. However, as everyday objects are increasingly vested with sentience under the Internet of Things, this technological barrier is rapidly collapsing. Instead, the questions of the decade are how to receive, organize, and distribute the wealth of information that lies below our perception of sound so as to construct a better-informed and safer world.

IRIS Links

http://www.iris.edu/spud/infrasoundevent

http://www.iris.edu/bud_stuff/dmc/bud_monitor.ALL.html, search for IM and UH networks, infrasound channel name BDF

—

Milton Garcés is an Earth Scientist at the University of Hawaii at Manoa and the founder of the Infrasound Laboratory in Kona. He explores deep atmospheric sounds, or infrasounds, which are inaudible but may be palpable. Milton taps into a global sensor network that captures signals from intense volcanic eruptions, meteors, and tsunamis. His studies underscore our global connectedness and enhance our situational awareness of Earth’s dynamics. You are invited to follow him on Twitter @iSoundHunter for updates on things Infrasonic and to get the latest news on the Infrasound App.

—

Featured image: surface flows as seen by thermal cameras at Pu’u O’o crater, June 27th, 2014. Image: USGS

—

REWIND! If you liked this post, check out …

SO! Amplifies: Ian Rawes and the London Sound Survey — Ian Rawes

Sounding Out Podcast #14: Interview with Meme Librarian Amanda Brennan — Aaron Trammell

Catastrophic Listening — China Blue
