SO! Reads: Danielle Shlomit Sofer’s Sex Sounds: Vectors of Difference in Electronic Music

Distance, therefore, preserves a European austerity in recorded musical practices, and electroacoustic practice is no exception; it is perhaps even responsible for reinvigorating a colonial posterity in contemporary music as so many examples in this book follow this pattern–Danielle Shlomit Sofer, Sex Sounds, 14.

Sex Sounds: Vectors of Difference in Electronic Music (MIT Press, 2022) by Danielle Shlomit Sofer offers a complex analysis for contemporary decolonial, queer, and feminist readers. The book does its best to sustain an argument across eleven case studies while strongly problematising the Western white cis gaze. Sofer offers readers a new perspective on both the history of music and the decolonisation of that history.

In a moment when discussions of consent, censorship, pleasure, and surveillance are reshaping how we think about media, Sofer asks: What does sex sound like, and why does it matter? Their analysis cuts across high art and popular culture, from avant-garde compositions to pop music to porn, revealing how sonic expressions of sex are never neutral: they’re deeply entangled in gendered, racialized, and heteronormative structures. In doing so, Sex Sounds resonates with broader critical work on listening as a political act, aligning with ongoing conversations in sound studies about the ethics of hearing and the politics of voice, noise, and silence.

The main focus of Sex Sounds is the historical loop of sexual themes in electronic music since the 1950s. Sofer writes from the perspective of a mixed-race, nonbinary Jewish scholar specializing in music theory and musicology. They argue that the way the Western world teaches music history relies on hegemonic narratives. In other words, the author’s impetus is to highlight the construction of mythological figures such as Pierre Schaeffer in France and Karlheinz Stockhausen in Germany, who represent the canon of Eurocentric music.

Sex Sounds specifically follows the concept of “Electrosexual Music,” defined by Sofer as electroacoustic sound and music interacting with sex and eroticism as socialized aesthetics. The issue of representation in music is a key research focus, navigating questions such as: “How does music present sex acts and who enacts them?” as well as: “how does a composer represent sexuality? How does a performer convey sexuality? And how does a listener interpret sexuality?” (xxiv & xxix). Moreover, Sofer traces “the threats of representation, namely exploitation and objectification” (xxxvii) as the result of white male privilege and the historical harm and violence this entails (xiix & 271).

By exploring answers to these questions, Sofer successfully exposes how electroacoustic sexuality has historically operated as a constant presence in many music genres, and shows that music and sound did not begin in Europe, nor do they belong only to the Anglo-European provincial cosmos. Sex Sounds gives visibility to peripheral voices ignored by the Eurocentric canon, arguing for a new history of music in which countries such as Egypt, Ghana, South Africa, Chile, Japan, or Korea are central.

Sofer further vivisects the meaning of sexual sounds as not only Eurocentric and colonial but patriarchal and sexist. What is the history behind sex sounds in the electroacoustic music field? Can we find liberation in sex sounds or have they only reproduced dominance? Which role do sex sounds play in the territories of otherness and racial representation? Are there examples where minoritized people have reclaimed their voice? Sex is part of our humanity. But how do sex sounds dehumanize female subjects? These are more of the fundamental questions Sofer responds to in this study.

I aim, first and foremost, to show that electrosexual music is far representative a collection than the typically presented electroacoustic figures -supposedly disinterested, disembodied, and largely white cis men from Europe and North America –Sofer, Sex Sounds,(xvi).

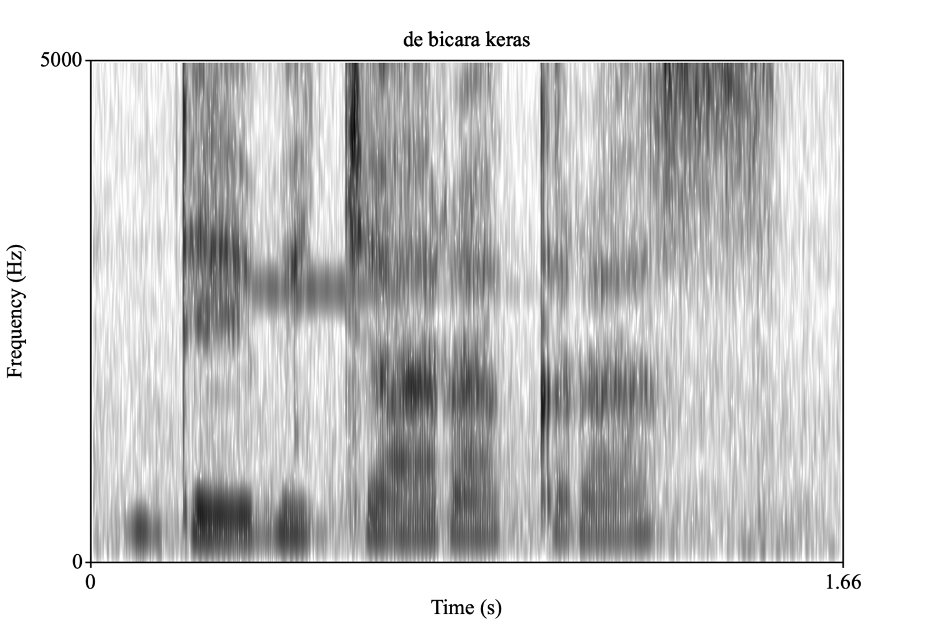

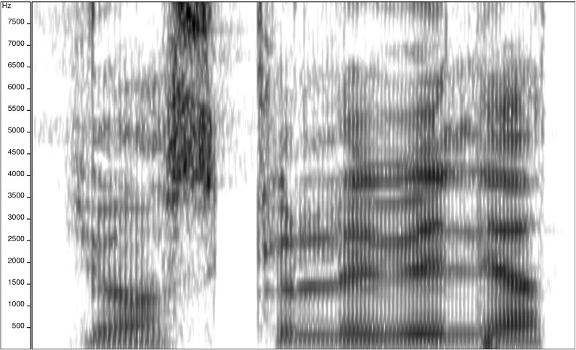

The time frame of the study ranges from 1950 until 2012, analysing four case studies. Sofer divides the book into two parts: Part I: “Electroacoustics of the Feminized Voice” and Part II: “Electrosexual Disturbance.” The first part contextualizes “electrosexual” music within the dominant cis white racial frame. The main argument is that many canonic electroacoustic works in the history of Western sound have sustained an ongoing dominance as a historical habit, locating the male gaze at the center and instrumentalizing the ‘feminized voice’ as a mere object of desire, without personification or recognition as a fundamental actor in the compositions. Under this premise, Sofer vivisects sound works such as “Erotica” (1950-1951) by the father of musique concrète, Pierre Schaeffer, and Pierre Henry; Luc Ferrari’s “les danses organiques” (1973); and Robert Normandeau’s “Jeu de Langues” (2009), among other pieces.

Luc Ferrari’s work from 1973 is one of many examples in which Sofer makes the question of consent evident, since the women’s voices he includes were used in his work without their knowledge, a pattern of objectification that mirrors structures of patriarchal domination. Sofer “defines and interrogates the assumed norms of electroacoustic sexual expression in works that represent women’s presumed sexual experience via masculinist heterosexual tropes, even when composed by women” (xivii-xiviii). Sofer emphasises the existence of “distance” as a gendered trope in which women’s audible sexual pleasure is presented as “evidence” in the form of sexualized and racialized intramusical tropes. Philosophically speaking, this phenomenon, Sofer argues, goes back to Friedrich Nietzsche and his understanding of the “women’s curious silence” (xxvii). In other words, a woman can be curious but must remain silent and in the shadows.

This is the case in Schaeffer and Henry’s “Erotica” (1950-1951), one of the earliest colonial impulses toward electrosexual music, in which female voices are both present and erased: present in the recording but erased as subjects of sonic agency, since the composers did not credit the woman behind the voice recordings. She has neither name nor authorship, yet her sexualized voice is the main element in the composition. This paradox shows the problem of prioritising the ‘Western’ white European cis male gaze, which treats women’s sexuality as a commodity from which only the composer benefits. It also exposes the problem of labor and exploitation within an electroacoustic practice historically dominated by white men.

“Erotica” stands out for its sensual tension, abstract eroticism, and experimental use of the body as both subject and instrument. This work belongs to the hegemonic narrative of electroacoustic music with the use of sex sounds as aesthetic objects that insinuate erotic arousal as a construct of the male gaze.

Through examples like “Erotica,” Sofer strongly questions the exclusion of women as active agents of aesthetic sonic creation, since “electroacoustic spaces have long excluded women’s contributions as equal creators to men, who are more typically touted as composers and therefore compensated with prestige in the form of academic positions or board dominations” (xxxix). This book considers “the threats of representation, namely exploitation and objectification” (xxxvii). Here we navigate the questions of how something is presented, by whom, and with which profit or intention. In other words, how sounds “are created, for what purposes, and in turn, how sounds are interpreted and understood” (xxxiii). These are problems rooted in both patriarchy and capitalism.

This book is a strong contribution to decolonizing the history of music as we know it, although the citations here could be richer, including studies by Rachel McCarthy (“Marking the ‘Unmarked’ Space: Gendered Vocal Construction in Female Electronic Artists” 2014), Tara Rodgers (“Tinkering with Cultural Memory: Gender and the Politics of Synthesizer Historiography” 2015), and the work of Louise Marshall and Holly Ingleton, who used intersectional feminist frameworks to analyze the work of marginalized composers (including women of color) and the curatorial practices that shape electronic music history. Also not to be forgotten: Chandra Mohanty’s “Under Western Eyes: Feminist Scholarship and Colonial Discourses” (1988).

Musical artist Sylvester

I argue that, although many composers of color work in electronic music, the search term ‘electroacoustic’ remains exclusionary because of who declares themselves as an advocate of this music, and not necessarily in how their music is made–Sofer, Sex Sounds, (xiv).

After a deep dive into the genealogy of patriarchal practices in electroacoustic music understood as electrosexual works (hence: “Sex is only re-presented in music,” xxix), Sofer moves to the territory of feminist contra-narratives. In the second part of their study, Sofer offers sonic practices and concrete examples that “break the electroacoustic mold either by consciously objecting to its narrow constraints or by emerging from, building on, and, in a sense, competing with a completely different historical trajectory” (xlvi). Contra-narratives from the racialized periphery and underground landscapes appear in this book as case studies that support the argument and move beyond the homocentric and patriarchal telos found in the sonic archives as well as in the Western theoretical corpus. These ‘Others’ reclaim their voices, going a step further and gaining recognition.

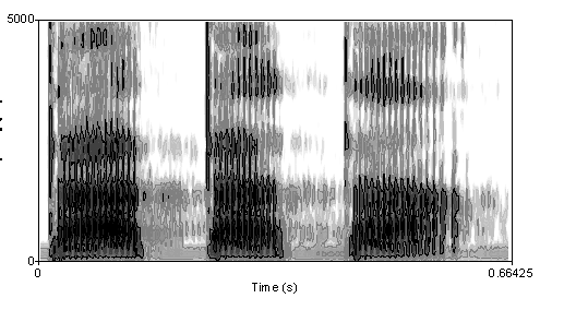

After examining examples of racialisation and objectification, Sofer selects case studies from 1975 to 2013 in the second part of the book, titled “Electrosexual Disturbance.” Here Sofer also points to new forms of exclusion and instrumentalisation via “racial othering,” specifically in the context of popular music such as disco, where we find an emphasis on the feminized voice. Disco, as a genre rooted in Black, queer, and marginalized communities, inherently grappled with racial and gendered dynamics. Donna Summer’s “Love to Love You Baby” (1975) exemplifies this tension.

The track’s erotic vocal performance (23 simulated orgasms over 16 minutes) became emblematic of the hypersexualization of Black women in popular music. Summer’s persona as the “first lady of love” reinforced stereotypes of Black female sexuality as inherently exotic or excessive, a trope traced to racist and sexist historical narratives. Simultaneously, disco provided a space for liberation: Black and LGBTQ+ artists like Summer, Sylvester, and Gloria Gaynor used the genre to assert agency over their identities and bodies, challenging mainstream exclusion. The tropes of sex and race form a paradoxical combination, bringing both oppression and liberation.

Sofer argues that Summer was commercially recognized but that her standing as a composer was erased, consequently creating a hierarchy of labor. She was acknowledged for her amazing sexualized voice and performance on stage, but not recognized as a musician or as an equal to music producers. Here we see the practice of epistemological discrimination and extreme racial sexualisation. On the positive side, Summer became the Black Queen idol for gay liberation. Nevertheless, she remained the sexualized and racialized voice of the seventies.

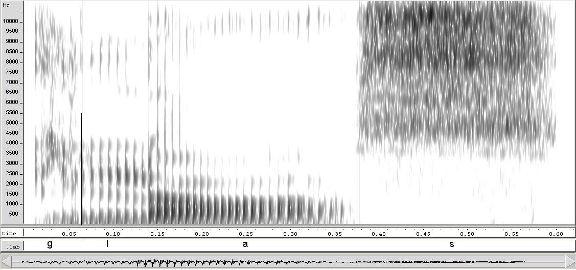

Sofer also presents the case of former sex worker, sex educator, and radical ecosex activist Annie Sprinkle, who collaborated on a post-porn art video with the legendary Texan lesbian composer Pauline Oliveros. With Sprinkle and Oliveros, Sofer presents a different phenomenon at work, since the two queer/lesbian women collaborated from a position of feminist independence and sexual liberation, coming together for educational purposes.

Sluts & Goddesses (1992) is a porn film with an Oliveros soundtrack, produced by radical women, with only women, in a self-determined frame. The movie offers an example of collaboration moving from avant-garde sound composition expertise to trashy whoring and interracial lesbian power. This example was rare, but inspiring for the coming generations. Two lesbian titans united for electrosexual disturbance from the feminist gaze, Sprinkle and Oliveros were a duo that broke the silence.

This book revisits the acousmatic in its electronic manifestations to examine and interrogate sexual and sexualized assumptions underwriting electroacoustic musical philosophies.–Sofer, Sex Sounds, (xxi)

Sofer’s Sex Sounds enters into a vital and still-emerging conversation about how sound—particularly sonic expressions of sex and eroticism—shapes, disrupts, and reinscribes power. At a time when sonic studies increasingly reckon with embodiment, affect, and intimacy, Sofer brings a feminist and queer critique to the center of how we listen to, interpret, and culturally regulate the sounds of sex. Their book invites us to reconsider not only what we hear in erotic audio, but how we’ve been taught—socially, politically, morally—to hear it.

This book doesn’t just fill a gap—it pushes the field toward a more nuanced, bodily-aware mode of scholarship. For SO! readers, Sex Sounds offers both a provocation and a methodology: it challenges us to hear differently, to ask how power works not only through what is seen or said, but through what is moaned, whispered, muffled, or made to be heard too loudly.

—

Featured Image: “Stamen,” by Flickr User Sharonolk, CC BY 2.0

—

Verónica Mota Galindo is an interdisciplinary researcher based in Berlin, where they study philosophy at the Freie Universität. Their work goes beyond the academic sphere, blending sound art, critical epistemology, and community engagement to make complex philosophical ideas accessible to broader audiences. As a dedicated educator and sound artist, Mota Galindo bridges the gap between academic research and lived material experience, inviting others to explore the transformative power of critical thought and creative expression. Committed to bringing philosophy to life outside traditional boundaries, they inspire new ways of thinking aimed at emancipation of the human and non-human for collective survival.

—

REWIND!…If you liked this post, check out:

Into the Woods: A Brief History of Wood Paneling on Synthesizers*–Tara Rodgers

Ritual, Noise, and the Cut-up: The Art of Tara Transitory—Justyna Stasiowska

SO! Reads: Zeynep Bulut’s Building a Voice: Sound, Surface, Skin –Enikő Deptuch Vághy

Rhetoric After Sound: Stories of Encountering “The Hum” Phenomenon


“So I have heard The Hum… The rest of what I’m about to tell you is beyond reasoning, and understanding.” Here, in a Reddit post, Michael A. Sweeney prefaces their story of their first encounter with “the hum,” an unexplained phenomenon heard by only a small percentage of listeners around the world. The hum is an ominous sonic event that impacts communities from Australia to India, Scotland to the United States. And as Geoff Leventhall writes in “Low Frequency Noise: What We Know, What We Do Not Know, and What We Would Like to Know,” the hum causes “considerable problems” for people across the globe—such as nausea, headaches, fatigue, and muscle pain—as it continues to be an unsolved “acoustic mystery” (94).


Sweeney’s story of encountering the hum for the first time is remarkable. It begins in Taft, California, which Sweeney recounts as “a podunk little desert bowl town in the middle of nowhere. You can literally drive from one end to the other in under 10min, under 5 if you was speeding.” On this particular night, while walking down the main road of Taft, they report the scene being charged with electricity, and following this charge, hearing a moving sound, a traveling yet invisible sound. “This invisible thing was creating a noise like I had never heard before and sending a wave of static electricity throughout the air in every direction around it,” Sweeney explains. They try to track it down, but to no avail. After following the hum a few blocks and around a couple of corners, it just simply vanishes. As Kristin Gallerneaux aptly claims in her book High Static, Dead Lines: Sonic Spectres and the Object Hereafter, “the Hum’s oppression seems to come from everywhere and nowhere” (196), and this is especially true in Sweeney’s encounter.

While their account of the hum as electrically-charged is exceptional, Sweeney’s story adequately represents both the anomalistic qualities of the hum and its ability to elude a locatable and identifiable source. They attempt to describe the hum during this encounter as “like an invisible traveling vehicle of some sort,” but admit that, altogether, they are “not really sure” what it is. They even concede that this explanation “makes no sense… whatsoever.” This difficulty in describing the phenomenon is reaffirmed not only by the stories told by other listeners but also by the numerous scientific experiments that have been conducted since the hum’s frequent emergence beginning around the 1970s (Deming “The Hum: An Anomalous Sound Heard Around the World” 583).

In the US, two major studies have been conducted on the hum: the first in Taos, New Mexico, and the second in Kokomo, Indiana (Cowan “The Results of Hum Studies in the United States”; Mullins and Kelly “The Mystery of the Taos Hum”). Collectively, nearly three hundred residents in these communities have reported hearing a mysterious hum that exists without a known source. While it may sound like an idling diesel truck engine (Frosch “Manifestations of a Low-Frequency Sound of Unknown Origin Perceived Worldwide, Also known as ‘The Hum’ or the ‘Taos Hum’” 60), a dentist’s drill (Deming 575), “someone’s high-powered audio bass running amok” (Mullins and Kelly “The Elusive Hum in Taos, New Mexico”), or simply an “invisible force,” as Sweeney claims, it may be none of the above. Musicologist Jorg Muhlhans asserts that “there is no clear evidence for either an acoustic or electromagnetic origin, nor is there an attribution to some form of tinnitus” (“Low Frequency and Infrasound: A Critical Review of the Myths, Misbeliefs and Their Relevance to Music Perception Research” 272). Thus, according to Muhlhans, these studies reveal that while the hum’s sensorial impact is that of sound, the phenomenon itself is likely neither acoustic nor electromagnetic.

Beyond the limits of current scientific logics which attempt to make sense of sonic events and their impacts, the hum exists as an exceptional and unknown anomaly. It transcends the valuative limits of current knowledge in acoustics and ways of understanding how sound moves through, across, and within spaces to address potential listeners. “I’m not saying I saw waves of electricity or anything of the sort,” Sweeney adds to their above description of the hum as a sound “charged with electricity.” “It was more of a feeling than something you saw. I could just feel electricity everywhere, and see little tendrils of it from my fingertips as I ran them across each other… I just KNEW it was coming from whatever was making that sound, this invisible force that was traveling down the road.” In such an account, the hum defies scientific explanation, and this fact is supported by the multiple failed investigations into the phenomenon. As Franz Frosch details in their article “Hum and Otoacoustic Emissions May Arise Out of the Same Mechanisms,” some scientists have built multiple “custom shielded chamber[s]” out of copper and magnetic material to test for a potential acoustic or electromagnetic source of the hum (604). These investigations, time after time, can’t provide an answer—leaving it up to listeners of the hum to form their own.

Sweeney’s story, like the endless other stories of the hum that have been told across the world, depicts the lived, affective, and rhetorical experience of anomalistic listening. To hear, to listen with the hum, is to experience the affective dimensions of a “sound” that has no apparent acoustic or electromagnetic source. This is because “sonic knowledge is framed through acoustics and experience” (84), as Mark Peter Wright notes in his book Listening After Nature: Field Recording, Ecology, and Critical Practice. So, to listen with the hum is to occupy a state of affection that is altogether unknown not only to science but to the listener themself. Sweeney bluntly continues, writing about their experience of the hum, “I only know exactly what I’ve told you today.” Knowing this sound, and letting it exist as truth, unfolds through stories found in the still-to-be-explained.

I consider “after sound” to characterize this felt condition for rhetorical action that is a result of listening beyond or after acoustic valuations. Instead of this being a moment void of sound, “after sound” defines a state of experience that is complicated by an attempt to control the valuative limits of what is and isn’t sonic. In this way, “after sound” only gestures toward the temporal to develop the different emergence of the sensorial. Because the hum affects in a manner similar to sound but without acoustic or electromagnetic origin, people who hear the hum make sense of this relentless experience through a condition that is after sound. Such a claim is represented in Sweeney’s admission that “[they] just have this weird feeling that this story needs to be told. That there’s more to The Hum than anyone has realized, and that maybe it needs to be further studied and looked into” (added emphasis). This felt, “weird feeling” is initiated after sound, and this is what I am considering as the call for rhetorical action. Such an affective, felt, and lived experience may only exist after the “logical” answers, failed scientific studies, and experiments lacking helpful results become determinative of sonic limits.

Further, “after sound” moves from Marie Thompson’s discussion of “source-oriented” noise (30) that she posits in her book Beyond Unwanted Sound: Noise, Affect, and Aesthetic Moralism. Speaking to the phenomenon of the hum, Thompson explains that an “unidentifiable noise is often amplified in perception, grasping the attention of the listener” (29). “After sound” is a hyper-attuned condition wherein the rhetorical actions of listeners are always attentive to what-may-(not)-be acoustics. It is their presence within utter sonic mystery that fuels the potential for persuasive response. And David Deming, a researcher in Geosciences, articulates in his article “The Hum: An Anomalous Sound Heard Around the World” that “in the absence of an answer provided by science, Hum hearers tend to find an explanation and hang on to it” (579), which illustrates the potential for listeners to respond via story within the conditions foregrounded by anomalistic encounters. “After sound” describes a moment of malleability that opens up in soundtime for different negotiations of sense and affect.

Responding to the conditions of listening with story, as Sweeney does, reflects an intention to share and persuade through an expression of sonic experience. As Katherine McKittrick states in her book Dear Science and Other Stories, “story opens the door to curiosity; the reams of evidence dissipate as we tell the world differently, with creative precision” (7). And V. Jo Hsu, speaking to the rhetorical potential of story, elucidates in their book Constellating Home: Trans and Queer Asian American Rhetorics that “story can slow down, hold still, redefine, and/or reimagine our physical movements to renegotiate their shared meanings” (18). How I’m conceiving of storytelling after sound builds on the insights of these authors and other scholars exploring the rhetorical potential of story in cultural rhetorics.

Story, in the domain of sound, enables listeners to reconsider how, when, why, and where the sonic is defined and valued. While it need not be the sole rhetorical technology after sound, Sweeney clearly relies on storytelling to make sense of their encounter with the hum. This story and other stories told about the hum illustrate how listeners practice the negotiation of sound’s meaning by collectively exploring ways of investigating and experimenting with(in) phenomena. To tell stories after sound is to reach across and through a community of listeners to find shared truths that come to be through encounters with hearing. Altogether, rhetoric after sound queries the intersection of perception and intelligibility to jostle forward the meaning of listening. At a moment when the world is at a loss for answers, this is a practice of seizing opportunities for hopeful and imaginative intervention into the valuative limits of the sonic.

“I’ll end it here,” Sweeney begins in their last paragraph, “and I can assure you that everything I have told you is the absolute truth. It happened to me and not a friend of a friend. I was awake, I’m not making any of it up, and all I want are REAL answers.” Sweeney’s call for a resolution was posted 10 months ago, in May 2023. And still today, the hum continues to lack answers, and the unsolved mystery continues to impact listeners. More recently, Popular Mechanics reported in a November 2023 article, titled “A Ghostly Nighttime Hum Is Invading Random Towns. Scientists Don’t Know What It Means,” that the hum has reached Omagh in Northern Ireland and that citizens are “trapped inside [of a mystery].” So far, no matter the amount of media coverage nor number of affected people across the world, encounters are ultimately defined by sounds unknown. After sound—when communities of listeners are left behind by these valuative limits—rhetorical action persists in search of an explanation.

—

Featured Image: Spiral by Flickr User Richard CC BY-NC 2.0 DEED

—

Trent Wintermeier is a second-year PhD student in the Department of Rhetoric and Writing at the University of Texas at Austin. He’s a Graduate Research Assistant for the AVAnnotate project, where he helps make audiovisual material more discoverable and accessible. Next year, he will be an Assistant Director for the Digital Writing & Research Lab. His research interests broadly include sound, digital rhetorics, and community. Currently, he’s interested in how researchers can responsibly engage with communities impacted by sound and the local rhetorical ecologies which materialize under sonic conditions.

—

REWIND!…If you liked this post, you may also dig:

Becoming a Bad Listener: Labyrinthitis, Vertigo, and “Passing”–Aaron Trammell

Live Through This: Sonic Affect, Queerness, and the Trembling Body–Airek Beauchamp

Listening to Tinnitus: Roles of Media When Hearing Breaks Down—Mack Hagood

My Time in the Bush of Drones: or, 24 Hours at Basilica Hudson–Robert Cashin Ryan

Echoes of the Latent Present: Listening to Lags, Delays, and Other Temporal Disjunctions–Matthew Tompkinson

Re-orienting Sound Studies’ Aural Fixation: Christine Sun Kim’s “Subjective Loudness”–Sarah Mayberry Scott
