In Defense of Auto-Tune

I am here today to defend auto-tune. I may be late to the party, but if you watched Lil Wayne’s recent schizophrenic performance on MTV’s VMAs you know that auto-tune isn’t going anywhere. The thoughtful and melodic opening song “How to Love” clashed harshly with the expletive-laden guitar-rocking “John” Weezy followed with. Regardless of how you judge that disjunction, what strikes me about the performance is that auto-tune made Weezy’s range possible. The studio magic transposed onto the live moment dared auto-tune’s many haters to revise their criticisms about the relationship between the live and the recorded. It suggested that this technology actually opens up possibilities, rather than marking a limitation.

Auto-tune is mostly synonymous with the intentionally mechanized vocal distortion effect of singers like T-Pain, but it has actually been used for clandestine pitch correction in the studio for over 15 years. Cher’s voice on 1998’s “Believe” is probably the earliest well-known use of the device to distort rather than correct, though at the time her producers claimed to have used a vocoder pedal, probably in an attempt to hide what was then a trade secret: that the Antares Auto-Tune machine was widely used to correct imperfections in studio singing. The corrective function of auto-tune is more difficult to note than the obvious distortive effect because, when used as intended, auto-tuning is an inaudible process. It shifts flubbed or off-key notes to the nearest true semi-tone to create the effect of perfect singing every time. The more off-key a singer is, the harder it is to hide the use of the technology. Furthermore, to make melody out of talking or rapping, the sound has to be pushed to the point of sounding robotic.

Antares Auto-Tune 7 Interface

The dismissal of auto-tuned acts is usually made in terms of a comparison between the modified recording and what is possible in live performance, like indie folk singer Neko Case’s extended tongue-lashing in Stereogum. Auto-tune makes it so that anyone can sing whether they have talent or not, or so the criticism goes, putting determination of talent before evaluation of the outcome. This simple critique conveniently ignores how recording technology has long shaped our expectations in popular music and for live performance. Do we consider how many takes were required for Patti LaBelle to record “Lady Marmalade” when we listen? Do we speculate on whether spliced tape made up for the effects of a fatiguing day of recording? Chances are that even your favorite and most gifted singer has benefited from some form of technology in recording their work. When someone argues that auto-tune allows anyone to sing, what they are really complaining about is that an illusion of authenticity has been dispelled. My question in response is: So what? Why would it be so bad if anyone could be a singer through auto-tuning technology? What is really so threatening about its use?

As Walter Benjamin writes in “The Work of Art in the Age of Mechanical Reproduction,” the threat to art presented by mechanical reproduction emerges from the inability of its authenticity to be reproduced, but authenticity is a shibboleth. He explains that what is really threatened is the authority of the original; but how do we determine what is original in a field where the influences of live performance and recorded artifact are so interwoven? Auto-tune represents just another step forward in undoing the illusion of art’s aura. It is not the quality of art that is endangered by mass access to its creation, but rather the authority of cultural arbiters and the ideological ends they serve.

Auto-tune supposedly obfuscates one of the indicators of authenticity, imperfections in the work of art. However, recording technology already made error less notable as a sign of authenticity to the point where the near perfection of recorded music becomes the sign of authentic talent and the standard to which live performance is compared. We expect the artist to perform the song as we have heard it in countless replays of the single, ignoring that the corrective technologies of recording shaped the contours of our understanding of the song.

In this way, we can think of the audible auto-tune effect as actually re-establishing authenticity by making itself transparent. An auto-tuned song establishes its authority by casting into doubt the ability of any art to be truly authoritative and owning up to that lack. Listen to the auto-tuned hit “Blame It” by Jamie Foxx, featuring T-Pain, and note how their voices are made nearly indistinguishable by the auto-tune effect.

It might be the case that anyone is singing that song, but that doesn’t make it less bumping and less catchy—in fact, I’d argue the slippage makes it catchier. The auto-tuned voice is the sound of a democratic voice. There isn’t much precedent for actors becoming successful singers, but “Blame It” provides evidence of the transcendent power of auto-tune allowing anyone to participate in art and culture making. As Benjamin reminds us, “The fact that the new mode of participation first appeared in a disreputable form must not confuse the spectator.” The fact that “anyone” can do it increases possibilities and casts all-encompassing dismissal of auto-tune as reactionary and elitist.

Mechanical reproduction may “pry an object from its shell” and destroy its aura and authority–demonstrating the democratic possibilities in art as it is repurposed–but I contend that auto-tune goes one step further. It pries singing free from the tyranny of talent and its proscriptive aesthetics. It undermines the authority of the arbiters of talent and lets anyone potentially take part in public musical vocal expression, even someone like Antoine Dodson, whose rant on the local news ended up a catchy internet hit thanks to the Songify project.

Auto-tune represents a democratic impulse in music. It is another step in the increasing access to cultural production, going beyond special classes of people in a social or economic position to determine what is worthy. Sure, not everyone can afford the Antares Auto-Tune machine, but recent history has demonstrated that such technologies become increasingly affordable and more widely available. Rather than cold and soulless, the mechanized voice can give direct access to the pathos of melody when used by those whose natural talent is not for singing. Listen to Kanye West’s 808s & Heartbreak, or (again) Lil Wayne’s “How To Love.” These artists aren’t trying to get one over on their listeners. Just the opposite: they want to evoke an earnestness that they feel can only be expressed through the singing voice. Why would you want to resist a world where anyone could sing their hearts out?

—

Osvaldo Oyola is a regular contributor to Sounding Out! He is also an English PhD student at Binghamton University.

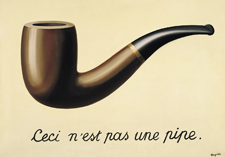

“This is Not a Sound”: The Treachery of Sound in Comic Books

In comics theorist Scott McCloud’s seminal work Understanding Comics (1993), there comes a point following his convoluted description of Magritte’s “The Treachery of Images” where he asks the reader, “Do you hear what I’m saying?” In the next panel he adds, “If you do, have your ears checked because no one said a word.” The joke is, of course, that while his comic doppelganger is depicted as talking through the use of word balloons, no words are being spoken. We are reading, not hearing. And yet, sound (or rather, its representation) remains a crucial part of reading and enjoying comic books.

Magritte was trying to get us to think about the treachery of visual representation, while McCloud points us to the treachery of aural representation. A stylized “SPLAT!” is certainly not a sound, but our instinctual understanding of sound helps us to interpret what is otherwise a silent medium in ways beyond the mere descriptive effect of a sound’s depiction. The way comics use sound can teach us about the function of sound in understanding the visual and textual. As McCloud asserts, comics depend on the reader to create closure between parts of an imagined whole in order for disparate panels to make sense. While it is second nature for the comic reader to interpret the depiction of sound in comics, turning stylized textual elements into “a sound” is a central way that this closure is enacted.

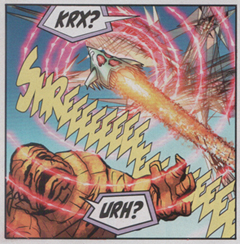

The most famous use of comic sound effect words is probably from the old 1960s Batman TV series, where the “SOCK!” and “BONG!” of superhero and sidekick reinforced the campy aesthetic of the program. It is telling that the Batman theme (and the fight scenes in general) use horn flares to emphasize those “POW!” and “BIFF!” moments. The suggestion is that the ostentatious representations of sound that these textual sound effect words provide are empty signifiers. There is no sound behind that sound. The weak-sounding slaps and smacks of knuckles on flesh would never suffice for the larger-than-life world of comic superheroes, and the more out-there comics get, the more difficult it is to trace a relationship between the textual/visual representation and any sound in the real world. There is no point of comparison by which to understand the “SHREEEEEE!” of a launching “zirrer” in Kurt Busiek’s Astro City, but only the vague evocation of some loud shrill noise.

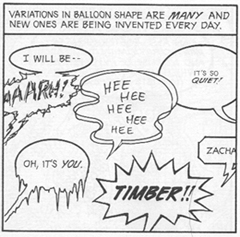

And yet, comic readers not only understand these representations as sound, but there are also a variety of visual clues given that help the reader interpret some quality of those sounds. The most ubiquitous example of sound in comics is, of course, the word balloon—so ubiquitous in fact that it is easy to take for granted the fact that comics have their own conventions for handling and describing sound without recourse to adjectives. The irony is that the shape and texture of word balloons (just like the shape and texture of sound effect words like “BOOM!”) that help to convey the quality of sound become nearly invisible to the reader. Just as any literate person sees a word they know and interprets it for what it is meant to represent and not a collection of individual letters, the dripping icicle-like shape of a word balloon is read as a cold tone or the sharp points of the balloon are read as loud and abrupt.

In her essay “The Comic Book’s Soundtrack” from The Language of Comics (2001), Catherine Khordoc provides a very good overview of the use of sound in comics, drawing on Goscinny and Uderzo’s Asterix for examples of the various ways word balloons and onomatopoeic words implanted directly into the panel image are used to represent sounds. Yet the function of the representation of sound in comics runs even deeper than simply translating the quality of sound itself; it also helps establish timeframes for panels (or sets of panels) and contributes to the closure the reader performs in making sense of both individual panels and their context within a sequence of panels. Discrete sounds, whether the “FWOOSH!” of the Human Torch flaming on or a long-winded pseudo-scientific explanation of the Negative Zone by Mr. Fantastic, require the passage of time to be intelligible. In order for sounds to be differentiated, they must have some form of beginning, middle and end (or in the parlance of synthesized sound, “attack, decay, sustain, release”). This means that in comics, a medium where space and time merge, representations of sound are crucial to making sense of action and, in particular, of the passage of time within a single panel. Time can be shown to pass between two or more panels through the process of closure (implicitly understanding the movement or occurrence not depicted between panels that makes them sequential), but a single panel is not necessarily a discrete moment: an entire conversation can occur within it, requiring readers to perform closure even within the scope of a single panel.

For example, in the second panel below, despite the static image, the passage of time suggested by the conversation about Spider-man’s wounds and payment leads the reader to make sense of the sequence between it and the panel that follows. It is the reader’s understanding that it takes time to talk and listen out loud that helps make the time of the panel apparent.

Perhaps the most telling evidence of the centrality of sound, at least to the superhero comic genre, was Marvel’s decision to include a synopsis and explanation of the action at the end of each issue of the “‘Nuff Said,” “silent” month of comics back in 2001—wherein there was no dialogue or captions.

There is still a lot to consider when it comes to sound in comics: not just the rhetoric of sound or sound as a signifier of time, but sound as identity. Representations of sound in comics can serve as a form of character signature, and I do not mean only famous lines like Superman’s “Up, up and away!” (which really emerged from Superman radio plays), but iconic sounds such as Spider-man’s web-shooters going “THWIPP!” or Wolverine’s claws going “SNIKT!”, which over time have come to be more than just descriptive sound-words: they are signifiers unique to the characters themselves. (See TV Tropes’ page on signature sound effects.)

In the end, this brief overview will hopefully serve as a starting point in generating more thoughts on not only how our familiarity with sound informs our reading and interpreting of comics, but how this (admittedly) very general idea can be applied to other ostensibly silent and primarily visual media. The use of sound in comics is a perfect example of how the transparency of sound can make its presence and function easy to overlook. Furthermore, the way in which it is used to orient the reader, help provide closure between and within panels, and identify characters clues us in to the importance of its role and the importance of considering where and how else it might function. I, for one, am going to keep thinking on it and looking for examples of it in comics, and I hope that others join their thoughts to the discussion. Until then, as Stan Lee would “say,” Excelsior!
