SO! Thursday Stream Year in Re-Hear

The offer was, I confess, music to my ears. It was around this time last year that Editor-in-Chief Jennifer Stoever and the SO! collective generously offered me the chance to come on board to help them draw in sound-minded editors and authors from the American Studies Association, the Society for Cinema & Media Studies, and other academic associations, opening up a new space two or three Thursdays each month. The truth is I never even considered turning them down. Working together, we recruited talented folks to work as Guest Editors, crafting a number of special series that dig deep into mediated sonic worlds of music, radio, film, art and science.

The result has been a group of articles whose richness I couldn’t be prouder of. Among the most widely read articles I’ve worked on this year you’ll find Mike D’Errico’s controversial piece on gender and brostep, but also Margaret Schedel’s groundbreaking article on sonifying nanoparticles. Go ahead, try to find another sound studies venue – online or anyplace – with range like that. No luck? As I suspected. Welcome back.

Not only has working on SO! been an honor, it has also opened up new horizons for me, forged odd alliances and prompted strange harmonies – hallmarks of what exciting sound studies ought to be about. I learned something and relearned more every week. In that spirit, this “Year in Re-Hear” post celebrates the Thursday stream by listening back, not once but three times, to where we’ve been.

—

First, the straight story.

Our year started with The Wobble Continuum, a series on race, gender and dubstep, edited by Justin D. Burton (Rider University) with posts by Mike D’Errico (UCLA), Christina Giacona (U of Oklahoma), and Burton. These articles brought new perspective on the “maximalist aesthetic” of electronic dance music and explored resistance to sonic racism, while examining sonic experience everywhere from a baseball stadium to a bus stop.

Then, beginning in February, we heard from Latin America through Radio de Acción, a series on radio and the idea of region. Edited by Tom McEnaney (Cornell), with posts by Alejandra Bronfman (UBC), Karl Swinehart (UChicago) and Carolina Guerrero (Radio Ambulante), RdA brought us fascinating stories of student activists taking over radio stations to oppose Fulgencio Batista in the 1950s and of the founding of Radio Ambulante, at the forefront of Spanish-language creative narrative radio today.

When Spring came (remember Spring? sigh.) I edited Start a Band, reflecting on the legacy and music of the late Lou Reed, with posts by Jacob Smith (Northwestern) and Tim Anderson (Old Dominion). Tim and Jake offered penetrating accounts of how reissues of Velvet Underground records helped a generation learn to listen, and how their music quite literally gets under your skin, and sometimes even deeper.

Sculpting the Film Soundtrack was our next series, an ambitious take on new directions in film sound design edited by Katherine Spring (Wilfrid Laurier), with posts by Randolph Jordan (Simon Fraser), Danijela Kulezic-Wilson (University College, Cork) and Benjamin Wright (University of Southern California). This series had extraordinary range, examining works by such figures as Hans Zimmer and Shane Carruth that break down old assumptions about soundtracks, while unsettling the act of listening itself.

From radio and film, we turned to art and science, first with Hearing the Unheard, a series edited by Seth Horowitz (NeuroPop) with posts by the sound artist China Blue (The Engine Institute), Milton A. Garcés (University of Hawaii at Manoa) and Margaret A. Schedel (Stony Brook). This series took us inside the ears of dogs, out into the vacuum of space billions of years ago, and deep inside the sound of underground lava. Then came our current series, Radio Art Reflections, edited by Magz Hall, which promises to undertake a trans-national history of radio art. Check out the first post, by artist Anna Friz (Canada), on radio art and acoustic ecology.

Where will this stream go next? In part, that’s up to you. If you have a concept for a special series, and a sense of some exciting authors for it, have a look at our Call for Guest Editors; we’ll extend the deadline a few days.

—

Remix!

In reviewing these posts, I was struck by how they form their own connections in ways we didn’t plan and probably couldn’t foresee a year ago. The Thursday stream echoes back on itself. Here, for your consideration, are three alternative hypothetical groupings of the exact same posts you see above:

Sound and Indigenous Peoples Today: a series featuring an examination of the circulation of A Tribe Called Red’s song “Braves”, a study of indigenous peoples of Vancouver’s Eastside on film, and an introduction to Aymara-language radio in Bolivia, with Christina Giacona, Randolph Jordan and Karl Swinehart.

The Microsonic: a series on itty bitty sounds, and how to get at them. Posts explore the sonic fragments in Upstream Color, the sonification of data from x-ray scatter, and the tactile sounds of Lou Reed with Danijela Kulezic-Wilson, Margaret A. Schedel, and Jacob Smith.

Sonic Breakdown: a series on the sound of breaking down and how sounds break things down, from the big budget film soundtrack to volcanic rock formations and national boundaries in Caribbean radio history, with posts by Benjamin Wright, Milton A. Garcés and Alejandra Bronfman.

—

Finally, why not let the sounds from these posts tell the story for a change?

Tickle your ears with some of the sounds we’ve featured in this stream over the last year, a little sound sandbox:

- Guest editor Seth Horowitz’s office, as an elephant might hear it

- Tape of a student takeover of Radio Reloj in Cuba in 1957

- “Lady Godiva’s Operation” by The Velvet Underground

- A tremor at Arenal, a volcano in Costa Rica

- electrosmog, a work of radio art by Kristen Roos for Radius in Chicago

- The sound of cartoons playing on a TV in a methadone clinic in Vancouver

- Sonifications of a variety of mappings of x-ray scattered particles by Meg Schedel

—

Thanks to Jennifer, Aaron, Liana, Will and everyone here at SO! for putting your faith in me this year. And thanks especially to all our writers and editors for being so enthusiastic, brilliant and patient.

The SO! family salutes you!

—

Featured Photo by Flickr user Jenene Chesbrough, Creative Commons License.

The Better to Hear You With, My Dear: Size and the Acoustic World

Today the SO! Thursday stream inaugurates a four-part series entitled Hearing the UnHeard, which promises to blow your mind by way of your ears. Our Guest Editor is Seth Horowitz, a neuroscientist at NeuroPop and author of The Universal Sense: How Hearing Shapes the Mind (Bloomsbury, 2012), whose insightful work brings us directly to the intersection of the sciences and the arts of sound.

That’s where he’ll be taking us in the coming weeks. Check out his general introduction just below, and his own contribution for the first piece in the series. — NV

—

Welcome to Hearing the UnHeard, a new series of articles on the world of sound beyond human hearing. We are embedded in a world of sound and vibration, but the limits of human hearing only let us hear a small piece of it. The quiet library screams with the ultrasonic pulsations of fluorescent lights and computer monitors. The soothing waves of a Hawaiian beach are drowned out by the thrumming infrasound of underground seismic activity near “dormant” volcanoes. Time, distance, and luck (and occasionally really good vibration isolation) separate us from explosive sounds of world-changing impacts between celestial bodies. And vast amounts of information, ranging from the songs of auroras to the sounds of dying neurons, can be made accessible and understandable by translating them into human-perceivable sounds through data sonification.

Four articles will examine how this “unheard world” affects us. My first post below will explore how our environment and evolution have constrained what is audible, and what tools we use to bring the unheard into our perceptual realm. In a few weeks, sound artist China Blue will talk about her experiences recording the Vertical Gun, a NASA asteroid impact simulator which helps scientists understand the way in which big collisions have shaped our planet (and is very hard on audio gear). Next, Milton A. Garcés, founder and director of the Infrasound Laboratory of the University of Hawaii at Manoa, will talk about volcano infrasound, and how acoustic surveillance is used to warn about hazardous eruptions. And finally, Margaret A. Schedel, composer and Associate Professor of Music at Stony Brook University, will help readers explore the world of data sonification, letting us listen in and get greater intellectual and emotional understanding of the world of information by converting it to sound.

— Guest Editor Seth Horowitz

—

Although light moves much faster than sound, hearing is your fastest sense, operating about 20 times faster than vision. Studies have shown that we think at the same “frame rate” as we see, about 1-4 events per second. But the real world moves much faster than this, and doesn’t always place things important for survival conveniently in front of your field of view. Think about the last time you were driving when suddenly you heard the blast of a horn from the previously unseen truck in your blind spot.

Hearing also occurs prior to thinking, with the ear itself pre-processing sound. Your inner ear responds to changes in pressure that directly move tiny little hair cells, organized by frequency, which then send signals about what frequency was detected (and at what amplitude) towards your brainstem, where things like location, amplitude, and even how important the sound may be to you are processed, long before the signals reach the cortex where you can think about them. And since hearing sets the tone for all later perceptions, our world is shaped by what we hear (Horowitz, 2012).

But we can’t hear everything. Rather, what we hear is constrained by our biology, our psychology and our position in space and time. Sound is really about how the interaction between energy and matter fills space with vibrations. This makes size (of the sender, the listener and the environment) one of the primary features that define your acoustic world.

You’ve heard about how much better your dog’s hearing is than yours. I’m sure you got a slight thrill when you thought you could actually hear the “ultrasonic” dog-training whistles that are supposed to be inaudible to humans (sorry, but every one I’ve tested puts out at least some energy in the upper range of human hearing, even if it does sound pretty thin). But it’s not that dogs hear better. Actually, dogs and humans show about the same sensitivity to sound in terms of sound pressure, with humans’ most sensitive region from 1-4 kHz and dogs’ from about 2-8 kHz. The difference is a question of range, and that is tied closely to size.

Most dogs, even big ones, are smaller than most humans and their auditory systems are scaled similarly. A big dog is about 100 pounds, much smaller than most adult humans. And since body parts tend to scale in a coordinated fashion, one of the first places to search for a link between size and frequency is the tympanum or ear drum, the earliest structure that responds to pressure information. An average dog’s eardrum is about 50 mm², whereas an average human’s is about 60 mm². In addition, while a human’s cochlea is a spiral of 2.5 turns that holds about 3500 inner hair cells, your dog’s has 3.25 turns and about the same number of hair cells. In short: dogs probably have better high frequency hearing because their eardrums are better tuned to shorter wavelength sounds and their sensory hair cells are spread out over a longer distance, giving them a wider range.

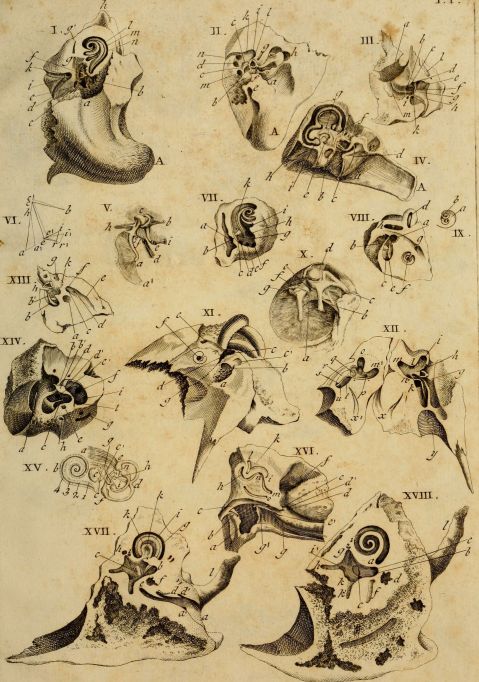

Interest in how hearing works in animals goes back centuries. Classical image of comparative ear anatomy from 1789 by Andreae Comparetti.

Then again, if hearing were just about the size of the ear components, you’d expect that yappy 5 pound Chihuahua to hear much higher frequencies than the lumbering 100 pound St. Bernard. Yet hearing sensitivity at the two ends of the dog spectrum doesn’t vary by much. This is because there’s a big difference between what the ear can mechanically detect and what the animal actually hears. Chihuahuas and St. Bernards are both breeds derived from a common wolf-like ancestor that probably didn’t have as much variability as we’ve imposed on the domesticated dog, so their brains are still largely tuned to hear what a medium to large pseudo wolf-like animal should hear (Heffner, 1983).

But hearing is more than just detection of sound. It’s also important to figure out where the sound is coming from. A sound’s location is calculated in the superior olive – nuclei in the brainstem that compare the difference in time of arrival of low frequency sounds at your ears and, for higher frequency sounds, the difference in amplitude between your ears (because your head gets in the way, making a sound “shadow” on the side of your head furthest from the sound). This means that animals with very large heads, like elephants, will be able to figure out the location of longer wavelength (lower pitched) sounds, but probably will have problems localizing high pitched sounds because the shorter wavelengths will not even get to the other side of their heads at a useful level. On the other hand, smaller animals, which often have large external ears, are under greater selective pressure to localize higher pitched sounds, but have heads too small to pick up the very low infrasonic sounds that elephants use.
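To put rough numbers on that relationship between head size and localization, here is a minimal Python sketch. It is an illustration only, not a model taken from the studies cited in this post: the head widths are hypothetical round figures, the path between the ears is treated as a straight line, and "head shadow" is assumed to begin where the wavelength matches the head width.

```python
SPEED_OF_SOUND_M_S = 343.0  # dry air at roughly 20 degrees C


def max_interaural_delay_us(head_width_m):
    """Longest possible arrival-time gap between the two ears, in microseconds.

    Simplification: sound is assumed to travel in a straight line across
    the head rather than bending around it.
    """
    return head_width_m / SPEED_OF_SOUND_M_S * 1e6


def shadow_onset_hz(head_width_m):
    """Frequency whose wavelength equals the head width.

    Above roughly this frequency the head begins to block sound, which
    creates a level difference between the near and far ear.
    """
    return SPEED_OF_SOUND_M_S / head_width_m


# Hypothetical head widths in metres, chosen only for illustration.
HEAD_WIDTHS_M = {"mouse": 0.01, "human": 0.18, "elephant": 1.0}

for animal, width in HEAD_WIDTHS_M.items():
    delay = max_interaural_delay_us(width)
    onset = shadow_onset_hz(width)
    print(f"{animal:9s} max delay {delay:7.1f} microseconds, "
          f"head shadow above about {onset:7.0f} Hz")
```

Under these assumptions a bigger head gives a longer timing gap to work with and starts casting a shadow at a much lower frequency, while a tiny head offers only a few tens of microseconds of delay and casts a shadow only for very high pitches.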

Audiograms (auditory sensitivity in air measured in dB SPL) by frequency of animals of different sizes showing the shift of maximum sensitivity to lower frequencies with increased size. Data replotted based on audiogram data by Sivian and White (1933). “On minimum audible sound fields.” Journal of the Acoustical Society of America, 4: 288-321; ISO 1961; Heffner, H., & Masterton, B. (1980). “Hearing in glires: domestic rabbit, cotton rat, feral house mouse, and kangaroo rat.” Journal of the Acoustical Society of America, 68: 1584-1599; Heffner, R. S., & Heffner, H. E. (1982). “Hearing in the elephant: Absolute sensitivity, frequency discrimination, and sound localization.” Journal of Comparative and Physiological Psychology, 96: 926-944; Heffner, H. E. (1983). “Hearing in large and small dogs: Absolute thresholds and size of the tympanic membrane.” Behav. Neurosci., 97: 310-318; Jackson, L. L., et al. (1999). “Free-field audiogram of the Japanese macaque (Macaca fuscata).” Journal of the Acoustical Society of America, 106: 3017-3023.

But you as a human are a fairly big mammal. If you look up “Body Size Species Richness Distribution” which shows the relative size of animals living in a given area, you’ll find that humans are among the largest animals in North America (Brown and Nicoletto, 1991). And your hearing abilities scale well with other terrestrial mammals, so you can stop feeling bad about your dog hearing “better.” But what if, by comic-book science or alternate evolution, you were much bigger or smaller? What would the world sound like? Imagine you were suddenly mouse-sized, scrambling along the floor of an office. While the usual chatter of humans would be almost completely inaudible, the world would be filled with a cacophony of ultrasonics. Fluorescent lights and computer monitors would scream in the 30-50 kHz range. Ultrasonic eddies would hiss loudly from air conditioning vents. Smartphones would not play music, but rather hum and squeal as their displays changed.

And if you were larger? For a human scaled up to elephantine dimensions, the sounds of the world would shift downward. While you could still hear (and possibly understand) human speech and music, the fine nuances from the upper frequency ranges would be lost, voices audible but mumbled and hard to localize. But you would gain the infrasonic world, the low rumbles of traffic noise and thrumming of heavy machinery taking on pitch, color and meaning. The seismic world of earthquakes and volcanoes would become part of your auditory tapestry. And you would hear greater distances as long wavelengths of low frequency sounds wrap around everything but the largest obstructions, letting you hear the foghorns miles distant as if they were bird calls nearby.

But these sounds are still in the realm of biological listeners, and the universe operates on scales far beyond that. The sounds from objects, large and small, have their own acoustic world, many beyond our ability to detect with the equipment evolution has provided. Weather phenomena, from gentle breezes to devastating tornadoes, blast throughout the infrasonic and ultrasonic ranges. Meteorites create infrasonic signatures through the upper atmosphere, trackable using a system devised to detect incoming ICBMs. Geophones, specialized low frequency microphones, pick up the extremely low frequency signals that foretell volcanic eruptions and earthquakes. Beyond the earth, we translate electromagnetic frequencies into the audible range, letting us listen to the whistlers and hoppers that signal the flow of charged particles and lightning in the atmospheres of Earth and Jupiter, and to the microwave signals of the remains of the Big Bang. We also send listening devices on our spacecraft to let us hear the winds on Titan.

Here is a recording of whistlers captured by the Van Allen Probes currently orbiting high in the upper atmosphere:

When the computer freezes or the phone battery dies, we complain about how much technology frustrates us and complicates our lives. But our audio technology is also a source of wonder, not only letting us talk to a friend around the world or listen to a podcast from astronauts orbiting the Earth, but letting us listen in on unheard worlds. Ultrasonic microphones let us listen in on bat echolocation and mouse songs; geophones let us wonder at elephants using infrasonic rumbles to communicate over long distances and find water. And scientific translation tools let us shift the vibrations of the solar wind and aurora or even the patterns of pure math into human scaled songs of the greater universe. We are no longer constrained (or protected) by the ears that evolution has given us. Our auditory world has expanded into an acoustic ecology that contains the entire universe, and the implications of that remain wonderfully unclear.

__

Exhibit: Home Office

This is a recording of my home office made with standard stereo microphones. Aside from the usual typing, mouse clicking and computer sounds, there are a couple of 3D printers running and some music playing. It is largely an environment you don’t pay much attention to while you’re working in it, yet acoustically very rich if you do.

This sample was made by pitch shifting the frequencies of sonicoffice.wav down so that the ultrasonic moves into the normal human range and cuts off at about 1-2 kHz, as if you were hearing with mouse ears. Sounds that are normally inaudible, like the squeal of the computer monitor cycling on and the high pitched whine of the stepper motors from the 3D printer, suddenly kick in and become much louder, while the familiar sounds are mostly gone.
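For readers curious how ultrasonic energy can be brought down into hearing range at all, here is a minimal Python sketch of one simple approach, time expansion, in which the same samples are played back at a slower rate. This is not necessarily how the sample above was produced: a true pitch shifter keeps the duration unchanged, whereas time expansion stretches it. The recorder rate, slowdown factor and test tone are all assumptions made for illustration.

```python
import math


def make_tone(freq_hz, sample_rate_hz, duration_s):
    """Generate a sine tone as a list of samples."""
    n = round(sample_rate_hz * duration_s)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate_hz)
            for i in range(n)]


def estimate_frequency_hz(samples, sample_rate_hz):
    """Estimate pitch by counting upward zero crossings per second."""
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)
    duration_s = len(samples) / sample_rate_hz
    return crossings / duration_s


RECORD_RATE_HZ = 192_000  # assumed sample rate of an ultrasonic recorder
SLOWDOWN = 16             # assumed time-expansion factor

# A 40 kHz test tone, well above the range of human hearing.
tone = make_tone(40_000, RECORD_RATE_HZ, 0.05)

# Time expansion: identical samples, reinterpreted at a slower rate.
playback_rate_hz = RECORD_RATE_HZ / SLOWDOWN

print("as recorded: ", estimate_frequency_hz(tone, RECORD_RATE_HZ), "Hz")
print("slowed down: ", estimate_frequency_hz(tone, playback_rate_hz), "Hz")
```

Slowing playback by a factor of 16 divides every frequency by 16, so the 40 kHz tone lands near 2.5 kHz, and everything that was already audible drops toward the bottom of the range or out of it entirely.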

This recording of the office was made with a Clarke Geophone, a seismic microphone used by geologists to pick up underground vibration. Its primary sensitivity is around 80 Hz, although its range is from 0.1 Hz up to about 2 kHz. All you hear in this recording are very low frequency sounds and impacts (footsteps, keyboard strikes, vibration from printers, some fan vibration) that you usually ignore since your ears are not very well tuned to frequencies under 100 Hz.

Finally, this sample was made by pitch shifting the frequencies of infrasonicoffice.wav up as if you had grown to elephantine proportions. Footsteps and computer fan noises (usually almost undetectable at 60 Hz) become loud and tonal, and all the normal pitch of music and computer typing has disappeared aside from the bass. (WARNING: The fan noise is really annoying).

The point is: a space can sound radically different depending on the frequency ranges you hear. Different elements of the acoustic environment pop up depending on the type of recording instrument you use (ultrasonic microphone, regular microphones or geophones) or the size and sensitivity of your ears.

![Spectrograms (plots of acoustic energy [color] over time [horizontal axis] by frequency band [vertical axis]) from a 90 second recording in the author’s home office covering the auditory range from ultrasonic frequencies (>20 kHz top) to the sonic (20 Hz-20 kHz, middle) to the low frequency and infrasonic (<20 Hz).](https://soundstudiesblog.com/wp-content/uploads/2014/08/figure3officerange.jpg?w=479&h=637)

Spectrograms (plots of acoustic energy [color] over time [horizontal axis] by frequency band [vertical axis]) from a 90 second recording in the author’s home office covering the auditory range from ultrasonic frequencies (>20 kHz top) to the sonic (20 Hz-20 kHz, middle) to the low frequency and infrasonic (<20 Hz).

Featured image by Flickr User Jaime Wong.

—

Seth S. Horowitz, Ph.D. is a neuroscientist whose work in comparative and human hearing, balance and sleep research has been funded by the National Institutes of Health, National Science Foundation, and NASA. He has taught classes in animal behavior, neuroethology, brain development, the biology of hearing, and the musical mind. As chief neuroscientist at NeuroPop, Inc., he applies basic research to real world auditory applications and works extensively on educational outreach with The Engine Institute, a non-profit devoted to exploring the intersection between science and the arts. His book The Universal Sense: How Hearing Shapes the Mind was released by Bloomsbury in September 2012.

—

REWIND! If you liked this post, check out …

Reproducing Traces of War: Listening to Gas Shell Bombardment, 1918– Brian Hanrahan

Learning to Listen Beyond Our Ears– Owen Marshall

This is Your Body on the Velvet Underground– Jacob Smith
