The Medium Is the Menace: AI and the Platforming of Hate Speech

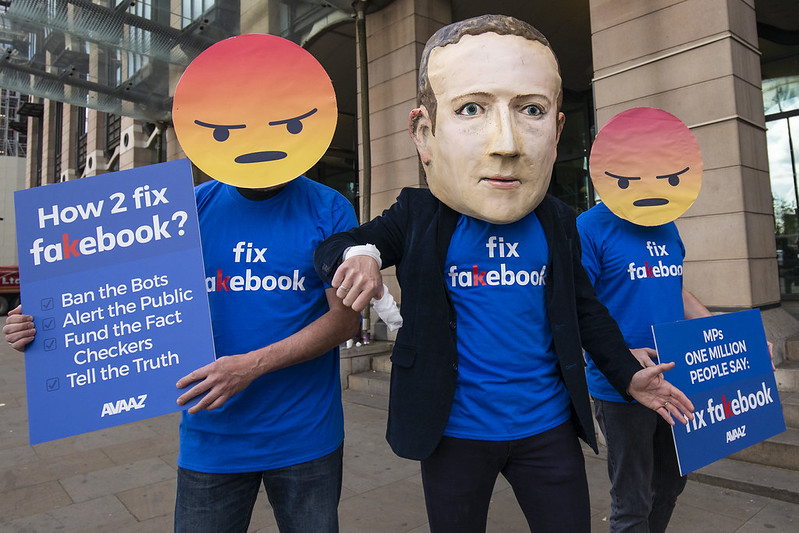

The essays collected in this series (link to the Introduction) trace how nonhuman listening operates through sound, speech, and platformed media across distinct but interconnected domains. Across these accounts, listening no longer secures meaning or relation; it becomes a site of contestation, where sound is mobilized, processed, and weaponized within systems that privilege circulation, recognition, and response over truth. Last week, Olga Zaitseva-Herz examined how nonhuman listening operates under conditions of war, where AI-generated voices and deepfakes destabilize the very grounds of auditory trust. Through the case of Ukraine, she showed how platforms and political actors alike exploit algorithmic listening systems to amplify affect, circulate disinformation, and transform voice into a tool of psychological warfare. Listening, in this context, becomes not a means of understanding but a terrain of uncertainty. Today, Houman Mehrabian turns to the dynamics of speech on social media, arguing that platforms do not simply fail to regulate hate but structurally amplify it through forms of proximity that render identity itself as a site of perceived threat. –Guest Editor Kathryn Huether

—

During the Cold War, when the world was divided into two geopolitical poles, the International Covenant on Civil and Political Rights was drafted to enshrine individual rights. Article 19 guarantees freedom of expression through any medium, “regardless of frontiers.” The media landscape has significantly changed since then—we have transitioned from an information system where newspapers, radio, and television were dominant communication technologies to one where digital and online media play a central role, especially in transcending national frontiers. The Internet has amplified the right to free speech dramatically. Take, for instance, the decentralized hacktivist collective Anonymous, gliding undetected past gatekeeping mechanisms to route confidential information to the public gaze or to rally digital protests, only to disappear again into the shadows of cyberspace. Yet, Article 19 also insists that this freedom carries “special duties and responsibilities”: expression may be restricted when necessary to protect or prevent harm. The Internet, however, has challenged the enforcement of such laws in unprecedented ways.

What has changed is not only how speech circulates, but how it is heard—now increasingly by automated systems that register patterns rather than consider context. As Kathryn Huether explains in the introduction of this series, this shift marks the emergence of a new form of “nonhuman listening”: a mode of perception in which speech is registered as data, classified and acted upon without ever being encountered as expression. Take, for instance, practices such as trolling, doxing, and flaming. Cyberbullies discover ever-new ways to propagate harmful content without raising the alarm bells of automated systems. Tamar Mitts explains how the digital ecosystem creates “safe havens” for online extremism: extremist groups persist by migrating to more permissive platforms, mobilizing aggrieved users to strengthen their group identity, or reformulating their messaging to slip past automated detection. As major platforms dial back their governance measures, those who disseminate toxic content grow ever more “resilient.”

Digital technologies have opened new pathways for bad actors to take advantage of the protections of free speech. While this helps explain the growing volume of hate speech online, it addresses only the surface, the content itself. Even with robust content moderation tools in place, the deeper problem lies in the design of these platforms. Their very structure enables polarized expression and, its most pernicious manifestation, hate speech—precisely what Article 19 and related human rights frameworks seek to prevent. This severely hinders meaningful dialogue in the increasingly interconnected and interdependent world that these technologies have created.

In the global social media economy, the United States sets the dominant tone. Its major platforms—Meta’s Facebook, Instagram, and WhatsApp, alongside Google’s YouTube, and others such as X, Pinterest, and Snapchat—shape what is circulated and amplified across the world. These companies operate under the protections of the First Amendment of the U.S. Constitution, which places relatively few restrictions on hate speech. By contrast, many other nations impose far stricter limits on online expression—from laws regulating hate speech in countries such as Germany and France to the extensive censorship regimes of states like China and Russia. Yet the question of free expression in the digital age exceeds the legal framework of any single nation, no matter how powerful the prohibition or permission. More fundamentally, the medium of digital communication itself has transformed what speech is, and how it functions.

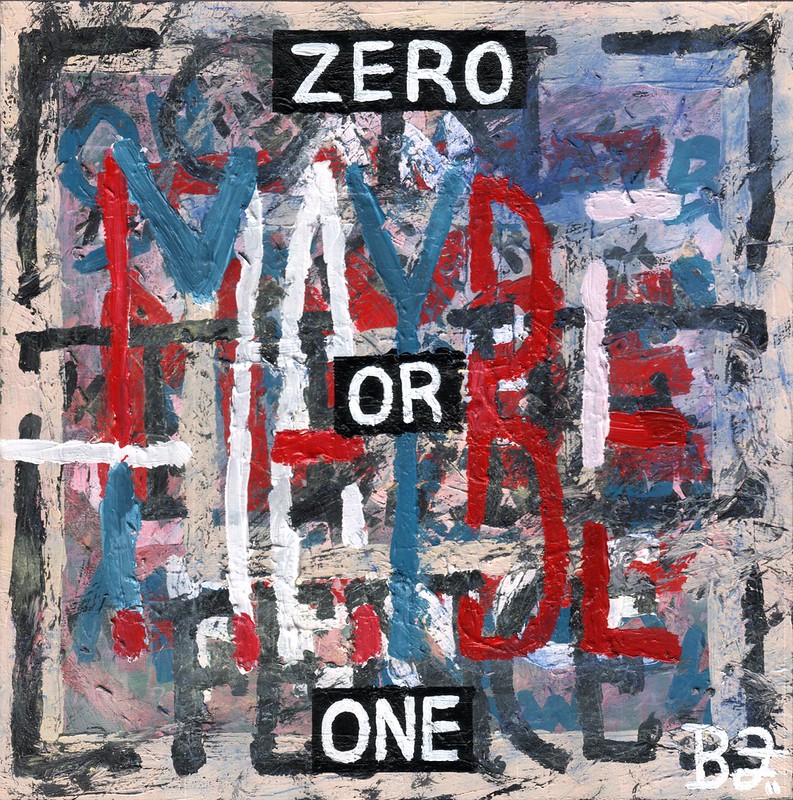

The era of private thinking and personal reasoning has given way to that of instant, public sharing, and everything shared on networking platforms is processed through algorithms running on binary coding. Algorithms should not be regarded simply as nonhuman—as alien intrusions into daily life—but rather as a sophisticated extension of a mode of human thinking that reduces complexity and nuance to mutually exclusive opposites. At the most basic level, these systems translate speech, images, and interaction into discrete units of data—encoded as sequences of zeroes and ones—and sort them through processes of classification. In doing so, they do not “listen” and interpret meaning in a human sense; they detect patterns and correlations across vast datasets. In this sense, nonhuman algorithms proliferate the dichotomy of “us” versus “them.” They entrench what Keith Stanovich calls “myside thinking”—a widespread inclination to interpret the world through the lens of prior beliefs and loyalties. Appearing in every stage of thinking, across disciplines, and in all demographic groups, “myside bias,” Stanovich argues, is more powerful than other types of bias because it involves “emotional commitment and ego preoccupation.” Its greatest danger is that it prevents communities from converging on shared facts, even when evidence is available.

Algorithmic systems amplify thought attuned to binaries and, in turn, cultivate speech that gravitates toward extremes. Opposition intensifies into antagonism, nuance dissolves into simplicity, and complexity flattens into stark contrasts. To grasp this dynamic, it is essential to examine the underlying mechanisms of nonhuman listening that nudge speech in this direction. An illuminating lens is offered by Judith Butler, whose account of “implicit” or “unspoken” modes of speech regulation reveals how discourse is shaped even before explicit prohibitions limit it. These are conditions of intelligibility, frameworks that determine—in advance—what registers as meaningful speech, what recedes as noise, and what is never heard at all.

Online, discussion is always up and running. Breaking the silence or ending the conversation is almost unheard of in the digital realm. Ironically, this feature of Internet-mediated communication can itself function as speech control. It recalls what Michel Foucault describes as endless “commentary,” in which discourse continually folds back on itself, repeating and reworking what has already been said. Silence becomes nearly impossible. In the words of Gilles Deleuze, the user becomes “undulating,” continuously “surfing” across interconnected spaces, each interaction rippling outward across the network. Repetition is vital for platforms that reward virality. Content creators, for instance, are encouraged to “repurpose” their old ideas and, in turn, encourage their audience to “co-create” their already-recycled ideas. In this sense, nothing truly begins or ends.

Algorithms are not designed to propel free movement; recommendation systems learn from simple behavioral cues—a like or a skip, a pause or a quick swipe away—to incentivize us to go with the flow of hyper-personalized data and to affiliate with echo chambers of like-minded users. Even generative AI replies to each prompt in light of the ones that came before, becoming increasingly “sycophantic.” This explains why a growing number of people, especially younger individuals, are turning to artificial intelligence for friendship. These technologies offer something that mimics attentive listening, a feeling that the user’s words do not go unheard. Artificial intelligence devices such as the Friend necklace are designed to make this type of connection effortless and always within reach.

Under these conditions, free speech comes to mean access to flows of information—the ability to move with them, rather than to analyze, interrupt, or challenge them. Listening becomes adaptive and reactive, attuned less to sound argumentation than to speedy circulation. Within insulated echo chambers, expressions are encountered not as opinions to be evaluated but as signals to be affirmed or rejected. Memes, emojis, and abbreviated forms of expression condense complicated positions into immediate affective cues, eliciting responses of pride, indignation, gloating, mockery, delight, disappointment, disdain. The list goes on. What circulates most readily is not sustained reasoning but intensified feeling, shared across networks of both human and nonhuman participants.

This is not to say that debate has no place in the digital world. On the contrary, platform environments are configured to reduce nearly every issue (controversial or not) into a rigidly polarized dispute. Algorithmic systems, optimized for engagement, sort content into recognizable positions, amplifying contrast and conflict. Issues are framed less as open questions than as preconfigured disputes, with sides already drawn and reiterated across countless iterations. One is either “woke” or dead set against it. Greta Thunberg’s activism is either inspiring or self-promoting. Online, users need only choose a side and signal agreement through simple actions—a like, a repost, a heart, an angry face. Digital debate becomes echoed: each side recycles familiar arguments that reinforce group identity rather than persuade others. This resembles the house war of the Montagues and the Capulets, with no hope of reconciliation.

Even truth is drawn into this binary logic, as its validity now lies in how closely it aligns with one’s viewpoint. Platforms like Truth Social—the social media site launched by Trump in 2022 and described as “free from political discrimination”—reinforce this dynamic by presenting “truth” as something to be claimed by one side, with opposing views dismissed as fake news.

The same pattern appears in responses to deepfakes. Also in 2022, a manipulated video of President Volodymyr Zelensky falsely urging Ukrainian troops to surrender circulated online. While widely debunked, its reception still followed partisan lines: dismissed as propaganda by some, and treated by others as plausible or strategically meaningful within existing narratives. Olga Zaitseva-Herz discusses other examples of AI-generated voices and videos used as psychological weapons in warfare. More broadly, deepfakes are often framed as satire or humor when they support one’s perspective, and condemned as disinformation when they do not. Despite the apparent complexity of digital media, this dynamic reduces debate to a series of rigid oppositions. Under these conditions, dialogue becomes difficult to sustain—or even non-existent—as positions are evaluated less through exchange than through alignment.

Dialogue is often proposed as the answer to hate speech. The United Nations Strategy and Plan of Action on Hate Speech defines hate speech as “any kind of communication in speech, writing or behavior, that attacks or uses pejorative or discriminatory language with reference to a person or a group on the basis of who they are.” This definition does not fully capture the underlying dynamic. While hate speech targets the identity of others, it is often driven by the perception that those identities pose a threat to one’s own. Research on hate suggests that this perception is not tied to a single action but to a broader attribution of the other as inherently dangerous or malicious. Hate, in this sense, does not respond to behavior; it calcifies identity itself as a source of threat. Hate thus becomes, as Daniele Battista puts it, an ideal “communicative asset” for driving the digital economy.

Hate speech is the violent defense of an insecure self; it is Iago, ever sensitive to the closeness of the dissimilar other; it is yet another extreme manifestation of the us-versus-them mindset. But we should not confine our understanding of online hate speech to the level of content. The amplification of harmful communication is not merely due to mobilization of verbal violence by political figures or technical failures of content moderation systems—such as hate speech slipping through as free speech—but is more fundamentally a formal effect of the platforms themselves. Collapsing geographic and cultural distances, the Internet brings diverse users into unprecedented forms of closeness. This structure reflects what Marshall McLuhan diagnoses as the “implosive” character of modern media, in which boundaries contract and differences are forced into constant contact. Under these conditions, both users and automated systems are overwhelmed with volume, and listening—human and nonhuman alike—becomes reactive rather than responsive. The patient work of contextual understanding disappears beneath the flood of signals.

By their very design, these shared virtual spaces place the user’s sense of self under continuous pressure. In response, users align with particular influencers and subscribe to particular channels to “strengthen feelings of belonging and opposition.” Speech, then, tends to take on a defensive quality, reinforcing identity against perceived threat. Digital platforms do not simply host hate speech; they develop the very conditions in which it emerges. Prolonged interaction and sustained proximity in polarized environments make communication more likely to be shaped by anxiety than by dialogue. What follows is not a failure of communication, but a transformation of it: speech no longer seeks to understand the other, but to secure the self.

Returning to the framework of this series, we can understand the shift to digital mediation as one in which listening collapses into the reiterative reception of preconstituted positions and oppositions, precipitating immediate, affectively saturated reactions that merely reproduce them. Increasingly “detached from sensation, exposure, and accountability,” listening operates less as an encounter with speech than as a mechanism of bias confirmation by selectively sorting information.

Amid digital closeness in environments marked by binary thinking, the more users are “silenced by speech,” the more listening becomes passive. We need to distance ourselves from communication as an instrument of pacification, or worse, suppression. Dialogue begins with attentive listening—not only to the speech of others, but also to the polarizing mechanisms that surround us both online and offline. More importantly, we need to appreciate the formal effects of a listening that is not reduced to a rehearsal for rebuttal, a listening that is an antidote to the restless compulsion to react to speech that our digital devices incessantly fuel. In suspending the immediacy of response, listening evolves into a delaying tactic, a deliberate deferral that carves out an interval within which patient reflection may find form.

—

Featured Image by Flickr User Jeff Gates, CC BY-NC-ND 4.0

—

Houman Mehrabian earned his doctorate in English from the University of Waterloo (2020), where he focused on the history and theory of rhetoric. Currently, he is an Assistant Professor in the Arts, Communications & Social Sciences department at University Canada West. His research interests include exploring the rhetorical and technological mechanisms that regulate speech. Bringing together perspectives from critical media studies, philosophy, and rhetorical theory, his work investigates how the structural design of digital platforms and their economic logics can amplify harmful discourse, and how appeals to more free speech in online environments can operate as rhetorical cover for the proliferation and normalization of hate speech. Through this lens, his research aims to better understand the interplay between technology, power, and communication in the digital age.

—

REWIND! . . .If you liked this post, you may also dig:

Hate & Non-Human Listening, an Introduction–Kathryn Huether

Mimicked Voices and Nonhuman Listening: AI Deepfakes, Speech, and Sonic Manipulation in the Digital War on Ukraine—Olga Zaitseva-Herz

Impaulsive: Bro-casting Trump, Part I–Andrew J. Salvati

- by Kathryn Huether


Hate & Non-Human Listening, an Introduction

In January 2026, WIRED reported that U.S. Immigration and Customs Enforcement (ICE) has begun using Palantir’s AI tools to process public tip-line submissions. The system does not simply store or relay these reports. It processes English-language submissions, condensing them into what is called a “BLUF”—a “bottom line up front” summary that allows agents to quickly assess and prioritize cases.

Efficiency is the dominant framing: the system promises speed, clarity, and control over overwhelming volumes of information. Yet such efficiency depends on a prior reduction, as expression is detached from the conditions of its articulation and reconstituted as data. In this form, listening no longer risks misunderstanding; it eliminates it.

Nor does this infrastructure operate in isolation. It relies on distributed participation in which listening is recast as vigilance. A recent ICE public X (Twitter) post encouraged residents to report “suspicious activity,” assuring them that doing so would make their communities safer.

The language is familiar, even reassuring. But it depends on a prior act of interpretation: that certain voices, presences, or behaviors are already legible as threat. Listening here becomes pre-classification—identifying danger in advance and acting on that identification as if it were already known. Rather than an isolated case, this development signals a broader transformation in how immigration and enforcement are governed. As legal and policy analyses increasingly note, artificial intelligence is becoming “one of the fundamental operating tools of policing,” deployed across domains ranging from speech and text analysis to risk assessment and document verification. Systems such as USCIS’s Evidence Classifier, which tags and prioritizes key documents within case files, and platforms like ImmigrationOS, which aggregate data across agencies to guide enforcement decisions, do not simply process information—they reorganize it. What matters is not only what is said, but whether it aligns—across time, across records, across bureaucratic expectations. Listening becomes continuous and anticipatory, oriented toward detecting inconsistency, deviation, and risk before any claim can be made or contested.

A very different narrative circulates alongside these developments. A recent BBC article suggested that AI chatbots can function as unusually “good listeners”—patient, nonjudgmental, even compassionate. Users describe these systems as offering space for reflection, sometimes preferring them to human interlocutors. Yet what is at work is not attention or relation, but pattern recognition trained to simulate understanding. Taken together, these examples reveal a shared transformation. Across both enforcement systems and everyday interaction, listening is increasingly detached from sensation, exposure, and accountability, becoming a process of extraction and classification rather than relation. As Dorothy Santos argues in her account of speech AI, machines do not simply assist human listening; they assume its position, becoming “the listeners to our sonic landscapes” while also acting as the capturers, surveyors, and documenters of our utterances. What follows from this shift is not just a change in who listens, but in what listening is. Listening no longer names an encounter between subjects; it describes a technical operation distributed across infrastructures that register, store, and act on sound without ever hearing it.

This shift is what I call “nonhuman listening.”

Nonhuman listening names both an infrastructural condition and a set of practices through which listening is reorganized as a technical operation. It describes a mode of perception distributed across systems that capture, process, and act on sound without exposure to it as experience, as well as the procedures—classification, ranking, prediction—through which sound is rendered actionable in advance. At stake is not simply the emergence of new technologies, but a reorganization of what listening has long been understood to do. Listening unfolds across thresholds of perception, attention, and care, shaped by what can be sensed, cultivated, or ignored. From its earliest formulations, it has been understood not as passive reception but as an ethically charged capacity. Aristotle’s distinction between akousis (hearing) and akroasis (listening) marks this divide, reserving listening for forms of attention capable of judgment and response. In this sense, listening has always named both openness and control: a posture of receptivity toward others and a way of organizing the world.

Nonhuman listening amplifies an older logic: not all voices are heard, and not all forms of speech register as meaning. Listening does not begin from neutrality. Norms organize it in advance, determining what registers as signal, who gets to hear, and whose speech counts as intelligible. Meaning and noise do not inhere in sound itself; they emerge through historically sedimented expectations about voice, difference, and belonging.

Sound studies has long challenged the assumption that listening inherently connects or humanizes. Listening does not operate as an immediate or intimate relation; it relies on frameworks that precondition perception. Jonathan Sterne shows that claims about sonic immediacy function less as empirical truths than as ideological formations—narratives that naturalize particular social arrangements while obscuring how listening renders some forms of speech legible and others unintelligible. Listening does not simply receive the world—it organizes it.

At the same time, theoretical and experimental approaches foreground the instability of this organization. Voices do not exist as stable entities prior to their mediation; they “show up as real,” as Matt Rahaim writes, through specific practices and infrastructures that render them intelligible, contested, or indeterminate. Jean-Luc Nancy conceptualizes listening as resonance, emphasizing exposure—the possibility that listening might unsettle the subject—while also underscoring that such openness never distributes evenly. John Cage and Pauline Oliveros treat listening as a disciplined practice that requires cultivation and can fail as easily as it attunes. Listening is not given; it is trained.

Across these accounts, listening operates within regimes of power. Jacques Attali locates listening within governance, where institutions determine what can be heard, what must be silenced, and what becomes disposable. Trauma and memory studies intensify these stakes. Henry Greenspan shows that listening to testimony never occurs as a singular or sufficient act, and that extractive modes of attention can reproduce violence rather than alleviate it. Ralina L. Joseph’s concept of radical listening reframes listening as an ethical orientation—one that demands accountability to power, difference, and fatigue, and that attends to how speakers wish to be heard. As she writes, “the easiest way to refuse to listen is to keep talking.”

Taken together, these accounts point to a more difficult claim: listening is not simply uneven—it is directional. It can orient toward exposure and relation, or toward certainty and verification. When listening turns toward certainty, it no longer encounters speech as an address. It apprehends speech in advance, so that certain voices register not as claims or appeals, but as warnings or threats.

Such orientation has precedents that are neither abstract nor metaphorical. During the 1937 Parsley Massacre, Dominican soldiers used pronunciation as a test of belonging. Suspected Haitians were asked to say the word perejil (parsley); those whose speech did not conform to expected phonetic norms were identified as foreign and often killed. Listening here did not register meaning or intent. It functioned as classification—reducing speech to a signal of difference and acting on that difference as if it were already known.

This logic persists in contemporary enforcement practices, albeit in different registers. Recent encounters with U.S. immigration agents reveal how accent continues to operate as a proxy for suspicion and a trigger for intervention. In multiple reported incidents, individuals have been stopped or detained and asked to account for their citizenship on the basis of how they sound: “Because of your accent,” one agent stated when asked to justify the demand for documentation. In another case, an agent explicitly linked auditory difference to disbelief, telling a driver, “I can hear you don’t have the same accent as me,” before repeatedly questioning where he was born.

In these moments, listening again operates as pre-classification. Accent is not heard as variation, history, or movement, but as evidence—an audible marker of non-belonging that precedes and justifies further scrutiny. What is at stake is not mishearing, but a mode of listening trained to stabilize difference as risk. Speech becomes legible only insofar as it confirms or disrupts an already established expectation of who belongs.

Early analyses of digital surveillance anticipated a more radical transformation than they could yet fully name. Writing in 2014, Robin James identified an emerging “acousmatic” condition in which listening detaches from any identifiable listener and disperses across systems of data capture and analysis. The 2013 Snowden disclosures make clear that this shift was not theoretical but already operational. State surveillance had moved from targeted interception to total capture, amassing communications indiscriminately and deriving “suspicion” only after the fact, as a pattern extracted from within the dataset itself. Listening no longer responds to a known object; it produces the object it claims to detect. What registers as “suspicious” does not precede analysis but materializes through algorithmic filtering, where signal and noise become effects of the system’s design rather than properties of the world. Under these conditions, listening ceases to function as a sensory or interpretive act and instead operates as an infrastructural logic of sorting, ranking, and preemption. Contemporary platforms extend and normalize this logic. They do not hear sound; they process it, rendering it actionable without ever encountering it as experience.

The essays collected in this series extend this transformation across distinct but interconnected domains, tracing how nonhuman listening operates through sound, speech, and platformed media. Across these accounts, listening no longer secures meaning or relation; it becomes a site of contestation, where sound is mobilized, processed, and weaponized within systems that privilege circulation, recognition, and response over truth. Next week, Olga Zaitseva-Herz situates these dynamics within the context of digital warfare, where AI-generated voices, deepfakes, and synthetic media circulate as instruments of psychological manipulation, designed to provoke affective responses that travel faster than verification.

Contemporary speech technologies make this continuity visible at the level of language itself. As work in the Racial Bias in Speech AI series shows, and as Michelle Pfeifer in particular demonstrates, speech technologies do not simply fail to recognize certain speakers; they formalize assumptions about what counts as intelligible language in the first place. In these systems, the voice is not encountered as expression but as input—something to be parsed, categorized, and aligned with existing datasets. When AI systems encounter African American Vernacular English—especially emergent idioms shaped by Black and queer communities—language is flattened into surface definitions, stripped of cultural grounding, or flagged as inappropriate. Speech is not heard as situated expression; it is processed as deviation from an unmarked norm.

What emerges is a form of hostile listening: not the misrecognition of a human listener, but a condition in which recognition is structurally foreclosed. Racialized language becomes perpetually at risk–mistrusted or excluded–not because it fails to communicate but because it exceeds the parameters through which the system can register meaning. Hate here is not expressive or intentional; it is procedural, embedded in the standards that determine what can be heard as language at all.

In this sense, the problem is not that listening has been replaced. It is that it continues—without exposure, without relation, without consequence for those who perform it. What appears as neutrality is the absence of risk. What appears as efficiency is the removal of encounters. Under these conditions, harm does not need to be spoken. It is heard into being in advance—stabilized as signal, confirmed as threat, and acted upon before it can be contested. The question that remains is not whether machines can learn to listen better. It is whether we can still recognize listening once it no longer requires us at all.

—

Kathryn Agnes Huether is a Postdoctoral Research Associate in Antisemitism Studies at UCLA’s Initiative to Study Hate and the Alan D. Leve Center for Jewish Studies. She earned her PhD in musicology with a minor in cultural studies from the University of Minnesota (2021) and holds a second master’s in religious studies from the University of Colorado Boulder. She has held visiting appointments at Bowdoin College and Vanderbilt University and was the 2021–2022 Mandel Center Postdoctoral Fellow at the United States Holocaust Memorial Museum.

Her research examines how sound mediates Holocaust memory, antisemitism, racial violence, and contemporary politics. She has published in Sound Studies and Yuval, and has forthcoming work in the Journal of the Society for American Music and Music and Politics. She is a member of the Holocaust Educational Foundation of Northwestern University’s (HEFNU) Virtual Speakers Bureau, has been an invited educator at two of its regional institutes, and is the current editor of ISH’s public-facing blog. Her first book, Sounding Hate: Sonic Politics in the Age of Platforms and AI, is in progress. Her second, Sounding the Holocaust in Film, is a forthcoming teaching compendium that brings together key concepts in Holocaust studies with methods from film music and sound studies.

—

Series Icon designed by Alex Calovi

—

REWIND! . . .If you liked this post, you may also dig:

Your Voice is (Not) Your Passport—Michelle Pfeifer

“Hey Google, Talk Like Issa”: Black Voiced Digital Assistants and the Reshaping of Racial Labor–Golden Owens

Beyond the Every Day: Vocal Potential in AI Mediated Communication –Amina Abbas-Nazari

Voice as Ecology: Voice Donation, Materiality, Identity–Steph Ceraso

ISSN 2333-0309
