SO! Amplifies: Allison Smartt, Sound Designer of MOM BABY GOD and Mixed-Race Mixtape

SO! Amplifies. . .a highly-curated, rolling mini-post series by which we editors hip you to cultural makers and organizations doing work we really really dig. You’re welcome!

—

Currently a faculty member and the associate technical director at the California Institute of the Arts’ Sharon Disney Lund School of Dance, Allison Smartt worked for several years in Hampshire’s dance program as intern-turned-program assistant. A sound engineer, designer, producer, and educator for theater and dance, she has created designs seen and heard at La MaMa, The Yard, Arts In Odd Places Festival, Barrington Stage Company, the Five College Consortium, and other venues.

Allison Smartt

She is also the owner of Smartt Productions, a production company that develops and tours innovative performances about social justice. Its repertory includes the nationally acclaimed solo-show about reproductive rights, MOM BABY GOD, and the empowering, new hip-hop theatre performance, Mixed-Race Mixtape. Her productions have toured 17 U.S. cities and counting.

Ariel Taub is currently interning at Sounding Out!, where she assists with layout, scouts talent, and in the process uncovers articles that may relate to or reflect work being done in the field of Sound Studies. She is a junior pursuing a degree in English and Sociology at Binghamton University.

__

Recently turned on to several of the projects Allison Smartt has been involved in, I became especially fascinated with MOM BABY GOD 3.0, for which Smartt was sound designer and producer. The crew of MOM BABY GOD 3.0 sets the stage for what to expect in a performance with the following introduction:

Take a cupcake, put on a name tag, and prepare to be thrown into the world of the Christian Right, where sexual purity workshops and anti-abortion rallies are sandwiched between karaoke sing-alongs, Christian EDM raves and pro-life slumber parties. An immersive dark comedy about American girl culture in the right-wing, written and performed by Madeline Burrows.

It’s 2018 and the anti-abortion movement has a new sense of urgency. Teens 4 Life is video-blogging live from the Students for Life of America Conference, and right-wing teenagers are vying for popularity while preparing for political battle. Our tour guide is fourteen-year-old Destinee Grace Ramsey, ascending to prominence as the new It-Girl of the Christian Right while struggling to contain her crush on John Paul, a flirtatious Christian boy with blossoming YouTube stardom and a purity ring.

MOM BABY GOD toured nationally to sold-out houses from 2013-2015 and was the subject of a national right-wing smear campaign. In a newly expanded and updated version premiering at Forum Theatre and Single Carrot Theatre in March 2017, MOM BABY GOD takes us inside the right-wing’s youth training ground at a more urgent time than ever.

I reached out to Smartt about these endeavors with some sound-specific questions. What follows is our April 2017 email exchange [edited for length].

Ariel Taub (AT): What do you think of the voices Madeline Burrows [the writer and solo actor of MOM BABY GOD] uses in the piece? How important is the role of sound in creating the characters?

Allison Smartt (AS): I want to accurately represent Burrows’s use of voice in the show. For those who haven’t seen it, she’s not an impersonator or impressionist conjuring up voices solely for comedy’s sake. She is a woman portraying a wide range of ages and genders on stage, and voice is one tool in the toolbox she uses to indicate a character shift. Madeline has a great sense of people’s natural speaking rhythms and an ability to incorporate bits of others’ unique vocal elements into the characters she portrays. Physicality is another tool. Sound cues are yet another…lighting, costume, staging, and so on.

I do think there’s something subversive about a queer woman voicing ideology and portraying people that inherently aim to repress her existence/identity/reproductive rights.

Many times, when actors are learning accents they have a cue line that helps them jump into that accent. Something that they can’t help but say in a southern, or Irish, or Canadian accent. In MOM BABY GOD, I think of my sound design in a similar way. The “I’m a Pro-Life Teen” theme is the most obvious example. It’s short and sweet, with a homemade flair and most importantly: it’s catchy. The audience learns to immediately associate that riff with Destinee (the host of “I’m a Pro-Life Teen”), so much so that I stop playing the full theme almost immediately, yet it still commands the laugh and upbeat response from the audience.

AT: Does [the impersonation and transformation of people on the opposite side of a controversial issue into] characters [mark them as] inherently mockable? (I asked Smartt about this specifically because of the reaction the show elicited from some people in the Pro-Life group.)

AS: Definitely not. I think the context and intention of the show really humanizes the people and movement that Madeline portrays. The show isn’t cruel or demeaning towards the people or movement – if anything, our audience has a lot of fun. But it is essential that Madeline portray the type of leaders in the movement (in any movement really) in a realistic, yet theatrical way. It’s a difficult needle to thread and I think she does it really well. A preacher has a certain cadence – it’s mesmerizing, it’s uplifting. A certain type of teen girl is bubbly, dynamic. How does a gruff (some may say manly), galvanizing leader speak? It’s important the audience feel the unique draw of each character – and their voices are a large part of that draw.

Madeline Burrows in character in MOM BABY GOD (National Tour 2013-2015). Photos by Jessica Neria

AT: What sounds [and sound production] were used to help carry the performance [of MOM BABY GOD]? What role does sound have in making plays [and any performance] cohesive?

AS: Sound designing for theatre is a mix of many elements, from pre-show music, sound effects and original music to reinforcement, writing cues, and sound system design. For a lot of projects, I’m also my own sound engineer so I also implement the system designs and make sure everything functions and sounds tip top.

Each design process is a little different. If it’s a new work in development, like MOM BABY GOD and Mixed-Race Mixtape, I am involved in a different way than if I’m designing for a completed work (and designing for dance is a whole other thing). There are constants, however. I’m always asking myself, “Are my ideas supporting the work and its intentions?” I always try to be cognizant of self-indulgence. I may make something really, really cool that ultimately, after I hear it in context and talk with the other artistic team members, is obviously doing much more than supporting the work. A music journalism professor I had used to say, “You have to shoot that puppy.” Meaning, cut the cue you really love for the benefit of the overall piece.

I like to set myself limitations to work within when starting a design. I find that narrowing my focus to, say, music only performed on harmonica or sound effects generated only from modes of transportation helps get my creative juices flowing (Sidenote: why is that a phrase? It gives me the creeps) [. . .] I may relinquish these limitations later after they’ve helped me launch into creating a sonic character that feels complex, interesting, and fun.

AT: The show is described as being comprised of “karaoke sing-alongs, Christian EDM raves and pro-life slumber parties.” Each of these has its own distinct associations. How do “sing-alongs” and “raves” and our connotations with those things add to the piece?

Madeline Burrows in character in MOM BABY GOD (National Tour 2013-2015). Photos by Jessica Neria

AS: Since sound is subjective, the associations that you make with karaoke sing-alongs are probably slightly different from what I associate with karaoke sing-alongs. You may think karaoke sing-along = a group of drunk BFFs belting Mariah Carey after a long day of work. I may think karaoke sing-along = middle-aged men and women shoulder to shoulder in a dive bar singing “Friends In Low Places” while clinking their glasses of whiskey and draft beer. The similarity in those two scenarios is people singing along to something, but the character and feeling of each image is very different. You bring that context with you as you read the description of the show, and given the challenging themes of the show, this is a real draw for people usually resistant to solo and/or political theatre. The way the description is written and what it highlights intentionally invite the audience to feel invited, excited, and maybe strangely upbeat about going to see a show about reproductive rights.

As a sound designer and theatre artist, one of my favorite moments is when the audience collectively readjusts their idea of a karaoke sing-along to the experience we create for them in the show. I feel everyone silently say, “Oh, this is not what I expected, but I love it,” or “This is exactly what I imagined!” or “I am so uncomfortable but I’m going with it.” I think the marketing of the show does a great job creating excited curiosity, and the show itself harnesses that and morphs it into confused excitement and surprise (reviewers articulate this phenomenon much better than I could).

AT: In this video the intentionally black screen feels like deep space. What sounds [and techniques] are being used? Are we on a train, a space ship, in a Church? What can you [tell us] about this piece?

AS: There are so many different elements in this cue…it’s one of my favorites. This cue is the lead-in and background to Destinee’s first experience with sexual pleasure. Not to give too much away: She falls asleep and has a sex dream about Justin Bieber. I compiled a bunch of sounds that are anticipatory: a rocket launch, a train pulling into a station, a remix/slowed down version of a Bieber track. These lead into sounds that feel more harsh: alarm clocks, crumpling paper…I also wanted to translate the feeling of being woken up abruptly from a really pleasant dream…like you were being ripped out of heaven or something. It was important to reassociate, for Destinee and the audience, sounds that had previously brought joy with this very confusing and painful moment, so it ends with heartbeats and church bells.

I shoved the entire arc of the show into this one sound cue. And Madeline and Kathleen let me and I love them for that.

from madelineburrows.com

AT: What do individuals bring of themselves when they listen to music? How is music a way of entering conversations otherwise avoided?

AS: The answer to this question is deeper than I can articulate but I’ll try.

Talking about bias, race, class, even in MOM BABY GOD introducing a pro-life video blog – broaching these topics is made easier and more interesting through music. Why? I think it’s because you are giving the listener multiple threads from which to sew their own tapestry…their own understanding of the thing. The changing emotions in a score, multiplicity of lyrical meaning, tempo, stage presence, on and on. If you were to just present a lecture on any one of those topics, the messages feel too stark, too heavy to be absorbed (especially to be absorbed by people who don’t already agree with the lecture or are approaching that idea for the first time). Put them to music and suddenly you open up people’s hearts.

Post- Mixed-Race Mixtape love, William Paterson University, 2016 Photo credit: Allison Smartt

As a sound designer, I have to be conscious of what people bring to their listening experience, but can’t let this rule my every decision. The most obvious example is when faced with the request to use popular music. Take maybe one of the most overused classics of the 20th century, “Hallelujah” by Leonard Cohen. If you felt an urge just now to stop reading this interview because you really love that song and how dare I naysay “Hallelujah” – my point has been made. Songs can evoke strong reactions. If you heard “Hallelujah” for the first time while seeing the Northern Lights (which would arguably be pretty epic), then you associate that memory and those emotions with that song. When a designer uses popular music in their design, this is a reality you have to think hard about.

Cassette By David Millan on Flickr.

It’s similar with sound effects. For Mixed-Race Mixtape, Fig wanted to start the show with the sound of a cassette tape being loaded into a deck and played. While I understood why he wanted that sound cue, I had to disagree. Our target demographic is of an age where they may never have seen or used a cassette tape before – and using this sound effect wouldn’t elicit the nostalgic reaction he was hoping for.

Regarding how deeply the show moves people, I give all the credit to Fig’s lyrics and the entire cast’s performance, as well as the construction of the songs by the musicians and composers, and to Jorrell, our director, who has focused the intention of all these elements so that they coalesce very effectively. The cast puts a lot of emotion and energy into their performances and when people are genuine and earnest on stage, audiences can sense that and are deeply engaged.

I do a lot of work in the dance world and have come to understand how essential music and movement are to the human experience. We’ve always made music and moved our bodies and there is something deeply grounding and joining about collective listening and movement – even if it’s just tapping your fingers and toes.

AT: How did you and the other artists involved come up with the name/ idea for Mixed-Race Mixtape? How did the Mixed-Race Mixtape come about?

AS: Mixed-Race Mixtape is the brainchild of writer/performer Andrew “Fig” Figueroa. I’ll let him tell the story.

Andrew “Fig” Figueroa, Hip-Hop artist, theatre maker, and arts educator from Southern California

A mixtape is a collection of music from various artists and genres on one tape, CD or playlist. In Hip-Hop, a mixtape is a rapper’s first attempt to show the world their skills and who they are, more often than not, performing original lyrics over sampled/borrowed instrumentals that complement their style and vision. The show is about “mixed” identity and I mean, I’m a rapper so thank God “Mixed-Race” rhymed with “Mixtape.”

The show grew from my desire to tell my story/help myself make sense of growing up in a confusing, ambiguous, and colorful culture. I began writing a series of raps and monologues about my family, community and youth and slowly it formed into something cohesive.

AT: I love the quote, “the conversation about race in America is one sided and missing discussions of how class and race are connected and how multiple identities can exist in one person.” How does Mixed-Race Mixtape fill in these gaps?

AS: Mixed-Race Mixtape is an alternative narrative that is complex, personal, and authentic. In America, our ideas about race largely oscillate between White and Black. MRMT is alternative because it tells the story of someone who sits in the grey area of Americans’ concept of race and dispels the racist subtext that middle class America belongs to White people. Because these grey areas are illuminated, I believe a wide variety of people are able to find connections with the story.

AT: In this video people discuss the connection they [felt to the music and performance] even if they weren’t expecting to. What do you think is responsible for sound connecting and moving people from different backgrounds? Why do people bring the assumptions they do to the event, that they wouldn’t connect to the Hip Hop or that there would be “good vibes”?

AS: Some people do feel uncertain that they’d be able to connect with the show because it’s a “hip-hop” show. When they see it though, it’s obvious that it extends beyond the bounds of what they imagine a hip-hop show to be. And while I’ve never had someone say they were disappointed or unmoved by the show, I have had people say they couldn’t understand the words. And a lot of times they want to blame that on the reinforcement.

I’d argue that the people who don’t understand the lyrics of MRMT are often the same ones who were trepidatious to begin with, because I think hip-hop is not a genre they have practice listening to. I had to practice really actively listening to rap to train my brain to process words, word play, metaphor, etc. as fast as rap can transmit them. Fig, an experienced hip-hop listener and artist, amazes me with how fast he can understand lyrics on the first listen. I’m still learning. And the fact is, it’s not a one-and-done thing. You have to listen to rap more than once to get all the nuances the artists wrote in. And this extends to hip-hop music, sans lyrics. I miss so many really clever, artful remixes, samples, and references on the first listen. This is one of the reasons we released an EP of some of the songs from the show (and are in the process of recording a full album).

The theatre experience obviously provides a tremendously moving experience for the audience, but there’s more to be extracted from the music and lyrics than can be transmitted in one live performance.

AT: What future plans do you have for projects? You mentioned utilizing sounds from protests? How is sound important in protest? What stands out to you about what you recorded?

AS: I have only the vaguest idea of a future project. I participate in a lot of rallies and marches for causes across the spectrum of human rights. At a really basic level, it feels really good to get together with like minded people and shout your frustrations, hopes, and fears into the world for others to hear. I’m interested in translating this catharsis to people who are wary of protests/hate them/don’t understand them. So I’ve started with my iPhone. I record clever chants I’ve never heard, or try to capture the inevitable moment in a large crowd when the front changes the chant and it works its way to the back.

I record marching through different spaces…how does it sound when we’re in a tunnel versus in a park or inside a building? I’m not sure where these recordings will lead me, but I felt it was important to take them.

—

REWIND! . . .If you liked this post, you may also dig:

Beyond the Grandiose and the Seductive: Marie Thompson on Noise

Moonlight’s Orchestral Manoeuvers: A duet by Shakira Holt and Christopher Chien

Aural Guidings: The Scores of Ana Carvalho and Live Video’s Relation to Sound

A Brief History of Auto-Tune

This is the final article in Sounding Out!‘s April Forum on “Sound and Technology.” Every Monday this month, you’ve heard new insights on this age-old pairing from the likes of Sounding Out! veteranos Aaron Trammell and Primus Luta along with new voices Andrew Salvati and Owen Marshall. These fast-forward folks have shared their thinking about everything from Auto-tune to techie manifestos. Today, Marshall helps us understand just why we want to shift pitch-time so darn bad. Wait, let me clean that up a little bit. . .so darn badly. . .no wait, run that back one more time. . .jjuuuuust a little bit more. . .so damn badly. Whew! There! Perfect!–JS, Editor-in-Chief

—

A recording engineer once told me a story about a time when he was tasked with “tuning” the lead vocals from a recording session (identifying details have been changed to protect the innocent). Polishing-up vocals is an increasingly common job in the recording business, with some dedicated vocal producers even making it their specialty. Being able to comp, tune, and repair the timing of a vocal take is now a standard skill set among engineers, but in this case things were not going smoothly. Whereas singers usually tend towards being either consistently sharp or flat (“men go flat, women go sharp” as another engineer explained), in this case the vocalist was all over the map, making it difficult to always know exactly what note they were even trying to hit. Complicating matters further was the fact that this band had a decidedly lo-fi, garage-y reputation, making your standard-issue, Glee-grade tuning job decidedly inappropriate.

Undaunted, our engineer pulled up the Auto-Tune plugin inside Pro-Tools and set to work tuning the vocal, to use his words, “artistically” – that is, not perfectly, but enough to keep it from being annoyingly off-key. When the band heard the result, however, they were incensed – “this sounds way too good! Do it again!” The engineer went back to work, this time tuning “even more artistically,” going so far as to pull the singer’s original performance out of tune here and there to compensate for necessary macro-level tuning changes elsewhere.

The product of the tortuous process of tuning and re-tuning apparently satisfied the band, but the story left me puzzled… Why tune the track at all? If the band was so committed to not sounding overproduced, why go to such great lengths to make it sound like you didn’t mess with it? This, I was told, simply wasn’t an option. The engineer couldn’t in good conscience let the performance go un-tuned. Digital pitch correction, it seems, has become the rule, not the exception, so much so that the accepted solution for too much pitch correction is more pitch correction.

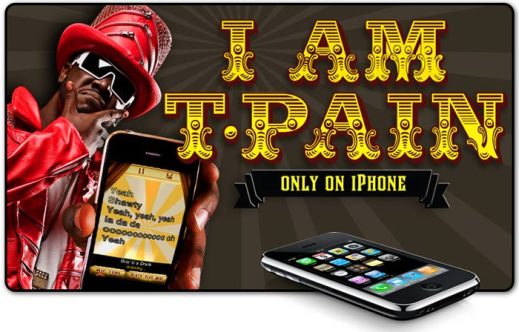

Since 1997, recording engineers have used Auto-Tune (or, more accurately, the growing pantheon of digital pitch correction plugins for which Auto-Tune, Kleenex-like, has become the household name) to fix pitchy vocal takes, lend T-Pain his signature vocal sound, and reveal the hidden vocal talents of political pundits. It’s the technology that can make the tone-deaf sing in key, make skilled singers perform more consistently, and make MLK sound like Akon. And at 17 years of age, “The Gerbil,” as some like to call Auto-Tune, is getting a little long in the tooth (certainly by meme standards). The next U.S. presidential election will include a contingent of voters who have never drawn air that wasn’t once rippled by Cher’s electronically warbling voice in the pre-chorus of “Believe.” A couple of years after that, the Auto-Tune patent will expire and its proprietary status will dissolve into the collective ownership of the public domain.

Growing pains aside, digital vocal tuning doesn’t seem to be leaving any time soon. Exact numbers are hard to come by, but it’s safe to say that the vast majority of commercial music produced in the last decade or so has most likely been digitally tuned. Future Music editor Daniel Griffiths has ballpark-estimated that, as early as 2010, pitch correction was used in about 99% of recorded music. Reports of its death are thus premature at best. If pitch correction seems banal it doesn’t mean it’s on the decline; rather, it’s a sign that we are increasingly accepting its underlying assumptions and internalizing the habits of thought and listening that go along with them.

Headlines in tech journalism are typically reserved for the newest, most groundbreaking gadgets. Often, though, the really interesting stuff only happens once a technology begins to lose its novelty, recede into the background, and quietly incorporate itself into fundamental ways we think about, perceive, and act in the world. Think, for example, about all the ways your embodied perceptual being has been shaped by and tuned-in to, say, the very computer or mobile device you’re reading this on. Setting value judgments aside for a moment, then, it’s worth thinking about where pitch correction technology came from, what assumptions underlie the way it works and how we work with it, and what it means that it feels like “old news.”

As is often the case with new musical technologies, digital pitch correction has been the target for no small amount of controversy and even hate. The list of indictments typically includes the homogenization of music, the devaluation of “actual talent,” and the destruction of emotional authenticity. Suffice to say, the technological possibility of ostensibly producing technically “pitch-perfect” performances has wreaked a fair amount of havoc on conventional ways of performing and evaluating music. As Primus Luta reminded us in his SO! piece on the powerful-yet-untranscribable “blue notes” that emerged from the idiosyncrasies of early hardware samplers, musical creativity is at least as much about digging-into and interrogating the apparent limits of a technology as it is about the successful removal of all obstacles to total control of the end result.

Paradoxically, it’s exactly in this spirit that others have come to the technology’s defense: Brian Eno, ever open to the unexpected creative agency of perplexing objects, credits the quantized sound of an overtaxed pitch corrector with renewing his interest in vocal performances. SO!’s own Osvaldo Oyola, channeling Walter Benjamin, has similarly offered a defense of Auto-Tune as a democratizing technology, one that both destabilizes conventional ideas about musical ability and allows everyone to sing in-tune, free from the “tyranny of talent and its proscriptive aesthetics.”

Jonathan Sterne, in his book MP3, offers an alternative to normative accounts of media technology (in this case, narratives either of the decline or rise of expressive technological potential) in the form of “compression histories” – accounts of how media technologies and practices directed towards increasing their efficiency, economy, and mobility can take on unintended cultural lives that reshape the very realities they were supposed to capture in the first place. The algorithms behind the MP3 format, for example, were based in part on psychoacoustic research into the nature of human hearing, framed primarily around the question of how many human voices the telephone company could fit into a limited bandwidth electrical cable while preserving signal intelligibility. The way compressed music files sound to us today, along with the way in which we typically acquire (illegally) and listen to them (distractedly), is deeply conditioned by the practical problems of early telephony. The model listener extracted from psychoacoustic research was created in an effort to learn about the way people listen. Over time, however, through our use of media technologies that have a simulated psychoacoustic subject built-in, we’ve actually learned collectively to listen like a psychoacoustic subject.

Pitch-time manipulation runs largely in parallel to Sterne’s bandwidth compression story. The ability to change a recorded sound’s pitch independently of its playback rate had its origins not in the realm of music technology, but in efforts to time-compress signals for faster communication. Instead of reducing a signal’s bandwidth, pitch manipulation technologies were pioneered to reduce the time required to push the message through the listener’s ears and into their brain. As early as the 1920s, the mechanism of the rotating playback head was being used to manipulate pitch and time interchangeably. By spinning a continuous playback head relative to the motion of the magnetic tape, researchers in electrical engineering, educational psychology, and pedagogy of the blind found that they could increase playback rate of recorded voices without turning the speakers into chipmunks. Alternatively, they could rotate the head against a static piece of tape and allow a single moment of recorded sound to unfold continuously in time – a phenomenon that influenced the development of a quantum theory of information.

In the early days of recorded sound some people had found a metaphor for human thought in the path of a phonograph’s needle. When the needle became a head and that head began to spin, ideas about how we think, listen, and communicate followed suit: In 1954 Grant Fairbanks, the director of the University of Illinois’ Speech Research Laboratory, put forth an influential model of the speech-hearing mechanism as a system where the speaker’s conscious intention of what to say next is analogized to a tape recorder full of instructions, its drive “alternately started and stopped, and when the tape is stationary a given unit of instruction is reproduced by a moving scanning head” (136). Pitch-time changing was more a model for thinking than it was for singing, and its imagined applications were thus primarily non-musical.

Take for example the Eltro Information Rate Changer. The first commercially available dedicated pitch-time changer, the Eltro advertised its uses as including “pitch correction of helium speech as found in deep sea; Dictation speed testing for typing and steno; Transcribing of material directly to typewriter by adjusting speed of speech to typing ability; medical teaching of heart sounds, breathing sounds etc. by slow playback of these rapid occurrences.” (It was also, incidentally, used by Kubrick to produce the eerily deliberate vocal pacing of HAL 9000.) In short, for the earliest “pitch-time correction” technologies, the pitch itself was largely a secondary concern, of interest primarily because it was desirable for the sake of intelligibility to pitch-change time-altered sounds into a more normal-sounding frequency range.

This coupling of time compression with pitch changing continued well into the era of digital processing. The Eventide Harmonizer, one of the first digital hardware pitch shifters, was initially used to pitch-correct episodes of “I Love Lucy” which had been time-compressed to free up broadcast time for advertising. Similar broadcast time compression techniques have proliferated and become common in radio and television (see, for example, David Foster Wallace’s account of the “cashbox” compressor in his essay on an LA talk radio station). Speed listening technology initially developed for the visually impaired has similarly become a way of producing the audio “fine print” at the end of radio advertisements.

“H910 Harmonizer” by Wikimedia user Nalzatron, CC BY-SA 3.0

Though the popular conversation about Auto-Tune often leaves this part out, it’s hardly a secret that pitch-time correction is as much about saving time as it is about hitting the right note. As Auto-Tune inventor Andy Hildebrand put it,

[Auto-Tune’s] largest effect in the community is it’s changed the economics of sound studios…Before Auto-Tune, sound studios would spend a lot of time with singers, getting them on pitch and getting a good emotional performance. Now they just do the emotional performance, they don’t worry about the pitch, the singer goes home, and they fix it in the mix.

Whereas early pitch-shifters aimed to speed-up our consumption of recorded voices, the ones now used in recording are meant to reduce the actual time spent tracking musicians in studio. One of the implications of this framing is that emotion, pitch, and the performer take on a very particular relationship, one we can find sketched out in the Auto-Tune patent language:

Voices or instruments are out of tune when their pitch is not sufficiently close to standard pitches expected by the listener, given the harmonic fabric and genre of the ensemble. When voices or instruments are out of tune, the emotional qualities of the performance are lost. Correcting intonation, that is, measuring the actual pitch of a note and changing the measured pitch to a standard, solves this problem and restores the performance. (Emphasis mine. Similar passages can be found in Auto-Tune’s technical documentation.)
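To make the patent's formulation concrete, here is a minimal sketch in Python of what "changing the measured pitch to a standard" could look like in its simplest form. It is not Auto-Tune's actual algorithm, which the patent language quoted above does not spell out. It assumes that the "standard pitches" are the notes of twelve-tone equal temperament tuned to A4 = 440 Hz, and the function names are hypothetical, chosen only for illustration.

```python
import math

A4_HZ = 440.0  # assumed reference tuning; other references are common


def nearest_standard_pitch_hz(measured_hz, reference_hz=A4_HZ):
    """Snap a measured frequency to the closest equal-tempered note.

    Hypothetical helper: "standard" here means twelve-tone equal
    temperament, which is an assumption of this sketch.
    """
    if measured_hz <= 0:
        raise ValueError("frequency must be positive")
    # Distance from the reference note, measured in semitones.
    semitones = 12 * math.log2(measured_hz / reference_hz)
    # Round to the nearest whole semitone and convert back to Hz.
    return reference_hz * 2 ** (round(semitones) / 12)


def cents_off(measured_hz, reference_hz=A4_HZ):
    """How far the measured pitch sits from its nearest standard note.

    Positive values are sharp, negative values are flat
    (100 cents = 1 semitone).
    """
    target_hz = nearest_standard_pitch_hz(measured_hz, reference_hz)
    return 1200 * math.log2(measured_hz / target_hz)


if __name__ == "__main__":
    sung_hz = 450.0  # a slightly sharp A4
    print(nearest_standard_pitch_hz(sung_hz))
    print(round(cents_off(sung_hz), 1))
```

Even in this toy form, the design choice is visible: every deviation from the grid is treated as an error to be measured and removed, which is exactly the assumption the engineer in the opening story had to work around when tuning "artistically."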

In the world according to Auto-Tune, the engineer is in the business of getting emotional signals from place to place. Emotion is the message, and pitch is the medium. Incorrect (i.e. unexpected) pitch therefore causes the emotion to be “lost.” While this formulation may strike some people as strange (for example, does it mean that we are unable to register the emotional qualities of a performance from singers who can’t hit notes reliably? Is there no emotionally expressive role for pitched performances that defy their genre’s expectations?), it makes perfect sense within the current division of labor and affective economy of the recording studio. It’s a framing that makes it possible, intelligible, and at least somewhat compulsory to have singers “express emotion” as a quality distinct from the notes they hit and have vocal producers fix up the actual pitches after the fact. Both this emotional model of the voice and the model of the psychoacoustic subject are useful frameworks for the particular purposes they serve. The trick is to pay attention to the ways we might find ourselves bending to fit them.

—

Owen Marshall is a PhD candidate in Science and Technology Studies at Cornell University. His dissertation research focuses on the articulation of embodied perceptual skills, technological systems, and economies of affect in the recording studio. He is particularly interested in the history and politics of pitch-time correction, cybernetics, and ideas and practices about sensory-technological attunement in general.

—

Featured image: “Epic iPhone Auto-Tune App” by Flickr user Photo Giddy, CC BY-NC 2.0

—

REWIND!…If you liked this post, you may also dig:

“From the Archive #1: It is art?”-Jennifer Stoever

“Garageland! Authenticity and Musical Taste”-Aaron Trammell

“Evoking the Object: Physicality in the Digital Age of Music”-Primus Luta
