Re-orienting Sound Studies’ Aural Fixation: Christine Sun Kim’s “Subjective Loudness”

Editors’ note: As an interdisciplinary field, sound studies is unique in its scope: under its purview we find the science of acoustics, cultural representation through the auditory, and, to perhaps mis-paraphrase Donna Haraway, emergent ontologies. Not only are we able to see how sound impacts the physical world, but also how that impact plays out in bodies and cultural tropes. Most importantly, we are able to imagine new ways of describing, adapting, and revising the aural into aspirant, liberatory ontologies. The essays in this series all aim to push what we know a bit, to question our own knowledges and see where we might be headed. In this series, co-edited by Airek Beauchamp and Jennifer Stoever, you will find new takes on sound and embodiment, cultural expression, and what it means to hear. –AB

—

A stage full of opera performers stands, silent, looking eager and exhilarated, matching their expressions to the word that appears on the iPad in front of them. As the word “excited” dissolves from the iPad screen, the next emotion, “sad,” appears and the performers’ expressions shift from enthusiastic to solemn and downcast to visually represent the word on the screen. The “singers” are performing in Christine Sun Kim’s conceptual sound art performance entitled Face Opera.

The singers do not use audible voices for their dramatic interpretation, as they would in a conventional opera, but rather use their faces to convey meaning and emotion keyed to the text that appears on the iPad in front of them. Challenging the traditional notions of dramatic interpretation, as well as the concepts of who is considered a singer and what it means to sing, this art performance is just one way Kim calls into question the nature of sound and our relationship to it.

Audible sound is, of course, essential to sound studies, though sound itself is not audist, as it can be experienced in a multitude of ways. The contemporary multi-modal turn in sound studies enables ways to theorize how more bodies can experience sound, including audible sound, motion, vibration, and visuals. All humans are somewhere on a spectrum between enabled and disabled and between hearing and deaf. As they grow older, most people move farther toward the disabled and deaf ends of the spectrum. In order to experience sound for a lifetime, it is imperative to explore multi-modal ways of experiencing sound. For instance, the Deaf community rejects the term disabled, yet realizes it is actually normative constructs of hearing, sound, and music that disable Deaf people. But, as Kim demonstrates, Deaf people engage with sound all of the time. In this case, Deaf individuals are not disabled but rather what I identify as difabled (differently-abled) in their relationship with sound. While this term is not yet used in disability scholarship, it is not completely unique, as there is a Difabled Twitter page dedicated to “Ameliorating inclusion in technology, business and society.” Rejection of the word disabled inspires me to adopt difabled to challenge the cultural binary of ability and embrace a more multi-modal approach.

Kim’s art explores sound in a variety of modalities to decenter hearing as the only, or even primary, way to experience sound. A conceptual sound artist who was born profoundly deaf, Kim describes her move into the sound artistic landscape: “In the back of my mind, I’ve always felt that sound was your thing, a hearing person’s thing. And sound is so powerful that it could either disempower me and my artwork or it could empower me. I chose to be empowered.”

For sound to empower, however, cultural perception has to move beyond the ear – a move that sound studies is uniquely poised to enable. Using Kim’s art as a guide, I investigate potential places for Deaf within sound studies. I ask if there are alternative ways to listen in a field devoted to sound. Bridging sound studies and Deaf studies, it is possible to see that sound is not ableist and audist, but that sound studies traditionally has suffered from an aural fixation, a fetishization of hearing as the best or only way to experience sound.

Pushing beyond the understanding of hearing as the primary (or only) sound precept, some scholars have begun to recognize the centrality of the body’s senses in sound experience. For instance, in his research on reggae, Julian Henriques coined the term sonic dominance to refer to sound that is not just heard but that “pervades, or even invades the body” (9). This experience renders the sound experience as tactile, felt within the body. Anne Cranny-Francis, who writes on multi-modal literacies, describes the intimate relationship between hearing and sound, believing that “sound literally touches us.” This process of listening is described as an embodied experience that is “intimate” and “visceral.” Steph Ceraso calls this multi-modal listening. By opening up the body to listen in multi-modal ways, full-bodied, multi-sense experiences of sound are possible. Anthropologist Roshanak Kheshti believes that the differentiation of our senses created a division of labor for our senses – a colonizing process that maximizes the use-value and profit of each individual sense. She reminds her audience that “sound is experienced (felt) by the whole body intertwining what is heard by the ears with what is felt on the flesh, tasted on the tongue, and imagined in the psyche” (714), a process she calls touch listening.

Other scholars continue to advocate for a place for the body in sound studies. For instance, according to Nina Sun Eidsheim, in Sensing Sound, sound allows us to posit questions about objectivity and reality (1), as posed in the age-old question, “If a tree falls in the forest and no one is there to hear it, does it make a sound?” Eidsheim challenges the notion of a sound, particularly music, as fixed by exploring multiple ways sound may be sensed within the body. Airek Beauchamp, through his notion of sonic tremblings, detaches sound from the realm of the static by returning to the materiality of the body as a site of dynamic processes and experiences that “engages with the world via a series of shimmers and impulses.” Understanding the body as a place of engagement rather than censorship, Cara Lynne Cardinale calls for a critical practice of look-listening that reconceptualizes the modalities of the tongue and hands.

Vibrant Vibrations by Flickr User The Manic Macrographer (CC BY 2.0)

As these scholars have identified, privileging audible sound over other senses reinforces normative ideas of communication and presumes that individuals hear, speak, and experience sound in normative ways. These ableist and audist rhetorics are particularly harmful for individuals who are Deaf. Deaf community members actively resist these ableist and audist assumptions to show that sound is not just for hearing. Kim identifies as part of the Deaf community and uses her art to challenge the ableist and audist ideologies of the sound experience. Through exploring one of Christine Sun Kim’s performance pieces, Subjective Loudness, I argue that we can conceptualize sound studies in the absence of auditory sound through the two concepts Kim’s piece was named for, subjectivity and loudness.

In creating Subjective Loudness, Kim asked 200 Tokyo residents to help her create a musical score. Hearing participants were asked to use their bodies to replicate sounds of common 85 dB noises into microphones. The sounds Kim selected included: the swishing of a washing machine, the repetitive rotation of a printing press, the chaos of a loud urban street, and the harsh static of a food blender. After the list was complete, Kim had the sounds translated into a musical score, sung by four of Kim’s closest friends. The noises then become music, which Kim lowers below normal human hearing range for a vibratory experience accessible to hearing and non-hearing individuals alike. The result is music that is not heard but rather felt. As vibrations shake the walls, windows, and furniture, audience members feel the music.

Kim’s performance expands upon current understandings of the body in sound by incorporating multiple materialities of sound into one experience. Rather than simply looking at an existing sound in a new way, she develops and executes the sound experience for her participants. Kim types the names of common 85 dB sounds, what most hearing people may call “noise,” on an iPad – a visual representation of the sound.

By asking participants to use their bodies to replicate these sounds – to change words into noise – Kim moves visual representation into the audible domain. This phase is contingent on each participant’s subjective experience with the particular sound, yet it also relies on the materiality of the human body to be able to replicate complex sounds. The audible sounds were then returned to a visual state as they were translated into a musical score. In this phase, noise is silenced as it is placed as musical notes on a page. The score is then sung, audibly, once again shifting visual into audible. Noise becomes music.

Yet even in the absence of hearing the performers sing, observers can see and perhaps feel the performance. Similar to Kim’s Face Opera, this performance is not just for the ear. The music is then silenced by reducing its volume beyond that of normal hearing range. Vibrations surround the participants for a tactile experience of sound. But participants aren’t just feeling the vibrations; they are instruments of vibration as well, exerting energy back into the space that then alters the sound experience for other bodies. The materiality of the body allows for a subjective experience of sound that Kim could not manipulate as easily if she simply asked audience members to feel vibrations from a washing machine or printing press. But Kim doesn’t just tinker with the subjectivity of modality; she also plays with loudness.

Christine Sun Kim at Work, Image by Flickr User Joi Ito, (CC BY 2.0)

In this performance Kim creates a thick interweaving of modalities. Part of this interplay involves challenging our understanding of loudness. For instance, participants recreate loud noises, but then the loud noise is reduced to silence as it is translated into a musical score. The volume has been dialed down, as has the intensity, as the musical score isolates participants. The sound experience, as the score, is then sung, reconnecting the audience to a shared experience. Floating with the ebb and flow of the sound, participants are surrounded by sound, then removed from it, only to then be surrounded again. Finally, as the sound is reduced beyond hearing range, the vibrations are loud, not in volume but in intensity. The participants are enveloped in a sonorous envelope of sonic experience, one that is felt through and within the body. This performance combats a long-standing belief Kim had about her relationship with sound.

As a child, Kim was taught, “sound wasn’t a part of my life.” She recounted in a TED talk that her experience was like living in a foreign country, “blindly following its rules, behaviors, and norms.” But Kim recognized the similarities between sound and ASL. “In Deaf culture, movement is equivalent to sound,” Kim stated in the same talk. Equating music with ASL, Kim notes that neither a musical note nor an ASL sign represented on paper can fully capture what a musical note or sign is. Kim uses a piano metaphor to make her point better understood to a hearing audience. “English is a linear language, as if one key is being pressed at a time. However, ASL is more like a chord, all ten fingers need to come down simultaneously to express a clear concept in ASL.” If one key were to change, the entire meaning would change. Subjective Loudness attempts to demonstrate this, as Kim moves visual to sound and back again before moving sound to vibration. Each one, individually, cannot capture the fullness of the word or musical note. Taken as a performative whole, however, it becomes easier to conceptualize vibration and movement as sound.

Christine Sun Kim speaking ASL, Image by Flickr User Joi Ito, (CC BY 2.0)

In Subjective Loudness, Kim’s performance has sonic dominance in the absence of hearing. “Sonic dominance,” Henriques writes, “is stuff and guts…[I]t’s felt over the entire surface of the skin. The bass line beats on your chest, vibrating the flesh, playing on the bone, and resonating in the genitals” (58). As Kim’s audience placed hands on walls, reaching out to feel the music, it is possible to see that Kim’s performance allowed for full-bodied experiences of sound – a process of touch listening. And finally, incorporating Deaf and hearing individuals in her performance, Kim shows that all bodies can utilize multi-modal listening as a way to experience sound. Kim’s performances re-center alternative ways of listening. Sound can be felt through vibration. Sound can be seen in visual representations such as ASL or visual art.

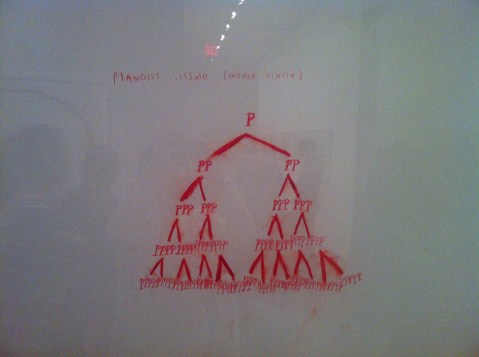

Image of Christine Sun Kim’s painting “Pianoiss….issmo” by Flickr User watashiwani (CC BY 2.0)

Through Subjective Loudness, it is possible to investigate subjectivity and loudness of sound experiences. Kim does not only explore sound represented in multi-modal ways, but weaves sound through the modalities, moving the audible to the visual to the tactile and often back again. This sound-play allows audiences to question current conceptions of sound, to explore sounds in multi-modalities, and to use our subjectivities in sharing our experiences of sound with others. Kim’s art performances are interactive by design because the materiality and subjectivity of bodies are what make her art so powerful and recognizable. Toying with loudness as intensity, Kim challenges her audience to feel intensity in the absence of volume and sparks the recognition that not all bodies experience sound in normative ways. Deaf bodies are vitally part of the soundscape, experiencing and producing sound. Kim’s work shows Deaf bodies as listening bodies, and amplifies the fact that Deaf bodies have something to say.

—

Featured image: Screen capture by Flickr User evan p. cordes, (CC BY 2.0)

—

Sarah Mayberry Scott is an Instructor of Communication Studies at Arkansas State University. Sarah is also a doctoral student in Communication and Rhetoric at the University of Memphis. Her current research focuses on disability and ableist rhetorics, specifically in d/Deafness. Her dissertation uses the work of Christine Sun Kim and other Deaf artists to explore the rhetoricity of d/Deaf sound performances and examine how those performances may continue to expand and diversify the sound studies and disability studies landscapes.

—

REWIND! . . .If you liked this post, you may also dig:

Introduction to Sound, Ability, and Emergence Forum –Airek Beauchamp

The Listening Body in Death— Denise Gill

Unlearning Black Sound in Black Artistry: Examining the Quiet in Solange’s A Seat At the Table — Kimberly Williams

Technological Interventions, or Between AUMI and Afrocuban Timba –Caleb Lázaro Moreno

“Sensing Voice”*–-Nina Sun Eidsheim

The Better to Hear You With, My Dear: Size and the Acoustic World

Today the SO! Thursday stream inaugurates a four-part series entitled Hearing the UnHeard, which promises to blow your mind by way of your ears. Our Guest Editor is Seth Horowitz, a neuroscientist at NeuroPop and author of The Universal Sense: How Hearing Shapes the Mind (Bloomsbury, 2012), whose insightful work brings us directly to the intersection of the sciences and the arts of sound.

That’s where he’ll be taking us in the coming weeks. Check out his general introduction just below, and his own contribution for the first piece in the series. — NV

—

Welcome to Hearing the UnHeard, a new series of articles on the world of sound beyond human hearing. We are embedded in a world of sound and vibration, but the limits of human hearing only let us hear a small piece of it. The quiet library screams with the ultrasonic pulsations of fluorescent lights and computer monitors. The soothing waves of a Hawaiian beach are drowned out by the thrumming infrasound of underground seismic activity near “dormant” volcanoes. Time, distance, and luck (and occasionally really good vibration isolation) separate us from explosive sounds of world-changing impacts between celestial bodies. And vast amounts of information, ranging from the songs of auroras to the sounds of dying neurons can be made accessible and understandable by translating them into human-perceivable sounds by data sonification.

Four articles will examine how this “unheard world” affects us. My first post below will explore how our environment and evolution have constrained what is audible, and what tools we use to bring the unheard into our perceptual realm. In a few weeks, sound artist China Blue will talk about her experiences recording the Vertical Gun, a NASA asteroid impact simulator which helps scientists understand the way in which big collisions have shaped our planet (and is very hard on audio gear). Next, Milton A. Garcés, founder and director of the Infrasound Laboratory of the University of Hawaii at Manoa, will talk about volcano infrasound, and how acoustic surveillance is used to warn about hazardous eruptions. And finally, Margaret A. Schedel, composer and Associate Professor of Music at Stony Brook University, will help readers explore the world of data sonification, letting us listen in and get greater intellectual and emotional understanding of the world of information by converting it to sound.

— Guest Editor Seth Horowitz

—

Although light moves much faster than sound, hearing is your fastest sense, operating about 20 times faster than vision. Studies have shown that we think at the same “frame rate” as we see, about 1-4 events per second. But the real world moves much faster than this, and doesn’t always place things important for survival conveniently in front of your field of view. Think about the last time you were driving when suddenly you heard the blast of a horn from the previously unseen truck in your blind spot.

Hearing also occurs prior to thinking, with the ear itself pre-processing sound. Your inner ear responds to changes in pressure that directly move tiny hair cells, organized by frequency, which then send signals about what frequency was detected (and at what amplitude) towards your brainstem, where things like location, amplitude, and even how important the sound may be to you are processed, long before the signals reach the cortex where you can think about them. And since hearing sets the tone for all later perceptions, our world is shaped by what we hear (Horowitz, 2012).

But we can’t hear everything. Rather, what we hear is constrained by our biology, our psychology and our position in space and time. Sound is really about how the interaction between energy and matter fills space with vibrations. This makes size (of the sender, the listener and the environment) one of the primary features that define your acoustic world.

You’ve heard about how much better your dog’s hearing is than yours. I’m sure you got a slight thrill when you thought you could actually hear the “ultrasonic” dog-training whistles that are supposed to be inaudible to humans (sorry, but every one I’ve tested puts out at least some energy in the upper range of human hearing, even if it does sound pretty thin). But it’s not that dogs hear better. Actually, dogs and humans show about the same sensitivity to sound in terms of sound pressure, with humans’ most sensitive region from 1-4 kHz and dogs’ from about 2-8 kHz. The difference is a question of range, and that is tied closely to size.

Most dogs, even big ones, are smaller than most humans and their auditory systems are scaled similarly. A big dog is about 100 pounds, much smaller than most adult humans. And since body parts tend to scale in a coordinated fashion, one of the first places to search for a link between size and frequency is the tympanum or ear drum, the earliest structure that responds to pressure information. An average dog’s eardrum is about 50 mm², whereas an average human’s is about 60 mm². In addition, while a human’s cochlea is a spiral of 2.5 turns that holds about 3500 inner hair cells, your dog’s has 3.25 turns and about the same number of hair cells. In short: dogs probably have better high frequency hearing because their eardrums are better tuned to shorter wavelength sounds and their sensory hair cells are spread out over a longer distance, giving them a wider range.

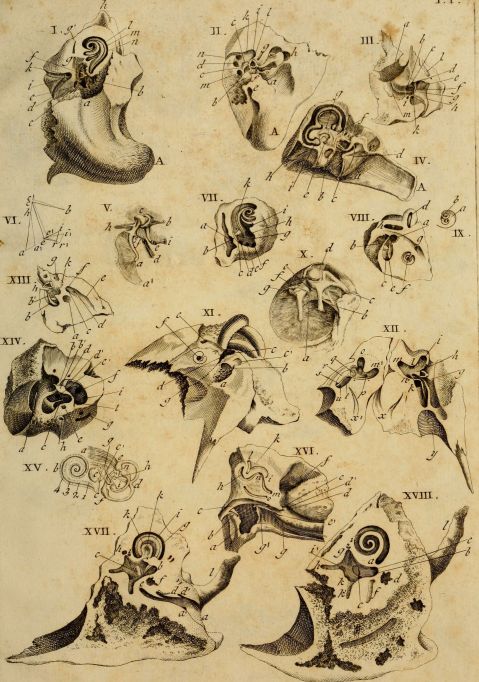

Interest in how hearing works in animals goes back centuries. Classical image of comparative ear anatomy from 1789 by Andreae Comparetti.

Then again, if hearing were just about the size of the ear components, then you’d expect that yappy 5 pound Chihuahua to hear much higher frequencies than the lumbering 100 pound St. Bernard. Yet hearing sensitivity at the two ends of the dog spectrum doesn’t vary by much. This is because there’s a big difference between what the ear can mechanically detect and what the animal actually hears. Chihuahuas and St. Bernards are both breeds derived from a common wolf-like ancestor that probably didn’t have as much variability as we’ve imposed on the domesticated dog, so their brains are still largely tuned to hear what a medium to large pseudo wolf-like animal should hear (Heffner, 1983).

But hearing is more than just detection of sound. It’s also important to figure out where the sound is coming from. A sound’s location is calculated in the superior olive – nuclei in the brainstem that compare the difference in time of arrival of low frequency sounds at your ears and, for higher frequency sounds, the difference in amplitude between your ears (because your head gets in the way, making a sound “shadow” on the side of your head furthest from the sound). This means that animals with very large heads, like elephants, will be able to figure out the location of longer wavelength (lower pitched) sounds, but probably will have problems localizing high pitched sounds because the shorter wavelengths will not even get to the other side of their heads at a useful level. On the other hand, smaller animals, which often have large external ears, are under greater selective pressure to localize higher pitched sounds, but have heads too small to pick up the very low infrasonic sounds that elephants use.
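The link between head size and localization cues can be made concrete with a little arithmetic. What follows is a minimal sketch, not a model taken from the studies cited here: it assumes a speed of sound in air of 343 m/s, treats the two ears as points separated by a straight-line distance, and uses purely illustrative head widths. It computes the largest possible difference in arrival time between the ears, and the frequency at which a sound’s wavelength equals the head width, a rough marker for where the head starts to cast a useful sound “shadow.”

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed: dry air at roughly 20 degrees C

# Illustrative ear-to-ear distances in metres (assumptions, not measured data).
HEAD_WIDTH_M = {"mouse": 0.01, "human": 0.18, "elephant": 1.0}


def max_time_difference_us(head_width_m: float) -> float:
    """Largest arrival-time difference between the ears, in microseconds.

    Simplification: a sound arriving from directly to one side travels
    one extra head width, in a straight line, to reach the far ear.
    """
    return head_width_m / SPEED_OF_SOUND_M_S * 1e6


def shadow_onset_hz(head_width_m: float) -> float:
    """Frequency whose wavelength equals the head width.

    Well below this frequency, waves bend around the head and cast little
    shadow; above it, the head increasingly blocks the sound.
    """
    return SPEED_OF_SOUND_M_S / head_width_m


for animal, width in HEAD_WIDTH_M.items():
    print(
        f"{animal:>8}: max time difference {max_time_difference_us(width):7.0f} us, "
        f"wavelength matches head width near {shadow_onset_hz(width):7.0f} Hz"
    )
```

Under these assumed widths, the mouse-sized head offers only a few tens of microseconds of timing difference to work with, while the elephant-sized head offers a few milliseconds, and the frequency at which the head begins to shadow sound drops as the head grows.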

Audiograms (auditory sensitivity in air measured in dB SPL) by frequency of animals of different sizes showing the shift of maximum sensitivity to lower frequencies with increased size. Data replotted based on audiogram data by Sivian and White (1933). “On minimum audible sound fields.” Journal of the Acoustical Society of America, 4: 288-321; ISO 1961; Heffner, H., & Masterton, B. (1980). “Hearing in glires: domestic rabbit, cotton rat, feral house mouse, and kangaroo rat.” Journal of the Acoustical Society of America, 68, 1584-1599.; Heffner, R. S., & Heffner, H. E. (1982). “Hearing in the elephant: Absolute sensitivity, frequency discrimination, and sound localization.” Journal of Comparative and Physiological Psychology, 96, 926-944.; Heffner H.E. (1983). “Hearing in large and small dogs: Absolute thresholds and size of the tympanic membrane.” Behav. Neurosci. 97: 310-318. ; Jackson, L.L., et al.(1999). “Free-field audiogram of the Japanese macaque (Macaca fuscata).” Journal of the Acoustical Society of America, 106: 3017-3023.

But you as a human are a fairly big mammal. If you look up “Body Size Species Richness Distribution” which shows the relative size of animals living in a given area, you’ll find that humans are among the largest animals in North America (Brown and Nicoletto, 1991). And your hearing abilities scale well with other terrestrial mammals, so you can stop feeling bad about your dog hearing “better.” But what if, by comic-book science or alternate evolution, you were much bigger or smaller? What would the world sound like? Imagine you were suddenly mouse-sized, scrambling along the floor of an office. While the usual chatter of humans would be almost completely inaudible, the world would be filled with a cacophony of ultrasonics. Fluorescent lights and computer monitors would scream in the 30-50 kHz range. Ultrasonic eddies would hiss loudly from air conditioning vents. Smartphones would not play music, but rather hum and squeal as their displays changed.

And if you were larger? For a human scaled up to elephantine dimensions, the sounds of the world would shift downward. While you could still hear (and possibly understand) human speech and music, the fine nuances from the upper frequency ranges would be lost, voices audible but mumbled and hard to localize. But you would gain the infrasonic world, the low rumbles of traffic noise and thrumming of heavy machinery taking on pitch, color and meaning. The seismic world of earthquakes and volcanoes would become part of your auditory tapestry. And you would hear greater distances as long wavelengths of low frequency sounds wrap around everything but the largest obstructions, letting you hear the foghorns miles distant as if they were bird calls nearby.

But these sounds are still in the realm of biological listeners, and the universe operates on scales far beyond that. The sounds from objects, large and small, have their own acoustic world, many beyond our ability to detect with the equipment evolution has provided. Weather phenomena, from gentle breezes to devastating tornadoes, blast throughout the infrasonic and ultrasonic ranges. Meteorites create infrasonic signatures through the upper atmosphere, trackable using a system devised to detect incoming ICBMs. Geophones, specialized low frequency microphones, pick up the sounds of extremely low frequency signals foretelling of volcanic eruptions and earthquakes. Beyond the earth, we translate electromagnetic frequencies into the audible range, letting us listen to the whistlers and hoppers that signal the flow of charged particles and lightning in the atmospheres of Earth and Jupiter, microwave signals of the remains of the Big Bang, and send listening devices on our spacecraft to let us hear the winds on Titan.

Here is a recording of whistlers recorded by the Van Allen Probes currently orbiting high in the upper atmosphere:

When the computer freezes or the phone battery dies, we complain about how much technology frustrates us and complicates our lives. But our audio technology is also the source of wonder, not only letting us talk to a friend around the world or listen to a podcast from astronauts orbiting the Earth, but letting us listen in on unheard worlds. Ultrasonic microphones let us listen in on bat echolocation and mouse songs; geophones let us wonder at elephants using infrasonic rumbles to communicate long distances and find water. And scientific translation tools let us shift the vibrations of the solar wind and aurora or even the patterns of pure math into human scaled songs of the greater universe. We are no longer constrained (or protected) by the ears that evolution has given us. Our auditory world has expanded into an acoustic ecology that contains the entire universe, and the implications of that remain wonderfully unclear.

__

Exhibit: Home Office

This is a recording made with standard stereo microphones of my home office. Aside from usual typing, mouse clicking and computer sounds, there are a couple of 3D printers running, some music playing, largely an environment you don’t pay much attention to while you’re working in it, yet acoustically very rich if you pay attention.

This sample was made by pitch shifting the frequencies of sonicoffice.wav down so that the ultrasonic moves into the normal human range and cuts off at about 1-2 kHz, as if you were hearing with mouse ears. Normally inaudible sounds, like the squeal of the computer monitor cycling on and the high pitched sound of the stepper motors from the 3D printer, suddenly become much louder, while the familiar sounds are mostly gone.
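The post does not say which tool produced this sample. One simple way to bring ultrasound into the audible range, sketched here as an assumption rather than as the method actually used, is to replay a recording at a lower sample rate: every frequency is divided by the same factor, and the recording lasts that many times longer. The slowdown factor, the recorder sample rate and the example frequencies below are all illustrative.

```python
def shifted_frequency_hz(original_hz: float, slowdown_factor: float) -> float:
    """Frequency heard when a recording is replayed slowdown_factor times slower."""
    if slowdown_factor <= 0:
        raise ValueError("slowdown_factor must be positive")
    return original_hz / slowdown_factor


def playback_rate_hz(recorded_rate_hz: int, slowdown_factor: float) -> int:
    """Sample rate to declare on playback to obtain the requested slowdown."""
    if slowdown_factor <= 0:
        raise ValueError("slowdown_factor must be positive")
    return round(recorded_rate_hz / slowdown_factor)


SLOWDOWN = 16            # assumed factor: four octaves down
RECORDED_RATE = 192_000  # assumed sample rate of an ultrasonic recorder, in Hz

# Illustrative source frequencies in Hz (assumed, not measured from the recording).
sources = {"monitor squeal": 40_000, "music treble": 8_000, "voice fundamental": 200}

print(f"replay at {playback_rate_hz(RECORDED_RATE, SLOWDOWN)} Hz")
for name, hz in sources.items():
    print(f"{name}: {hz} Hz -> {shifted_frequency_hz(hz, SLOWDOWN):.1f} Hz")
```

With these assumed numbers, a 40 kHz squeal lands at 2.5 kHz, comfortably inside human hearing, while a 200 Hz voice fundamental drops to 12.5 Hz, below the nominal 20 Hz lower limit, which fits the description of familiar sounds mostly disappearing.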

This recording of the office was made with a Clarke Geophone, a seismic microphone used by geologists to pick up underground vibration. Its primary sensitivity is around 80 Hz, although its range is from 0.1 Hz up to about 2 kHz. All you hear in this recording are very low frequency sounds and impacts (footsteps, keyboard strikes, vibration from printers, some fan vibration) that you usually ignore since your ears are not very well tuned to frequencies under 100 Hz.

Finally, this sample was made by pitch shifting the frequencies of infrasonicoffice.wav up as if you had grown to elephantine proportions. Footsteps and computer fan noises (usually almost undetectable at 60 Hz) become loud and tonal, and all the normal pitch of music and computer typing has disappeared aside from the bass. (WARNING: The fan noise is really annoying).

The point is: a space can sound radically different depending on the frequency ranges you hear. Different elements of the acoustic environment pop up depending on the type of recording instrument you use (ultrasonic microphone, regular microphones or geophones) or the size and sensitivity of your ears.

![Spectrograms (plots of acoustic energy [color] over time [horizontal axis] by frequency band [vertical axis]) from a 90 second recording in the author’s home office covering the auditory range from ultrasonic frequencies (>20 kHz top) to the sonic (20 Hz-20 kHz, middle) to the low frequency and infrasonic (<20 Hz).](https://soundstudiesblog.com/wp-content/uploads/2014/08/figure3officerange.jpg?w=479&h=637)

Spectrograms (plots of acoustic energy [color] over time [horizontal axis] by frequency band [vertical axis]) from a 90 second recording in the author’s home office covering the auditory range from ultrasonic frequencies (>20 kHz top) to the sonic (20 Hz-20 kHz, middle) to the low frequency and infrasonic (<20 Hz).

Featured image by Flickr User Jaime Wong.

—

Seth S. Horowitz, Ph.D. is a neuroscientist whose work in comparative and human hearing, balance and sleep research has been funded by the National Institutes of Health, National Science Foundation, and NASA. He has taught classes in animal behavior, neuroethology, brain development, the biology of hearing, and the musical mind. As chief neuroscientist at NeuroPop, Inc., he applies basic research to real world auditory applications and works extensively on educational outreach with The Engine Institute, a non-profit devoted to exploring the intersection between science and the arts. His book The Universal Sense: How Hearing Shapes the Mind was released by Bloomsbury in September 2012.

—

REWIND! If you liked this post, check out …

Reproducing Traces of War: Listening to Gas Shell Bombardment, 1918– Brian Hanrahan

Learning to Listen Beyond Our Ears– Owen Marshall

This is Your Body on the Velvet Underground– Jacob Smith
