Can’t Nobody Tell Me Nothin: Respectability and The Produced Voice in Lil Nas X’s “Old Town Road”

It’s been ten weeks now that we’ve all been kicking back in our Wranglers, allowing Lil Nas X’s infectious twang in “Old Town Road” to shower us in yeehaw goodness from its perch atop the Billboard Hot 100. Entrenched as it is on the pop chart, though, “Old Town Road”’s relationship to Billboard got off to a shaky start, first landing on the Hot Country Songs list only to be removed when the publication determined the hit “does not embrace enough elements of today’s country music to chart in its current version.” There’s a lot to unpack in a statement like that, and folks have been unpacking it quite consistently, especially in relation to notions of genre and race (in addition to Matthew Morrison’s recommended reads, I’d add Karl Hagstrom Miller’s Segregating Sound, which traces the roots of segregated music markets). Using the context of that ongoing discussion about genre and race, I’m listening here to a specific moment in “Old Town Road,” the line “can’t nobody tell me nothin,” and the way it changes from the original version to the Billy Ray Cyrus remix. Lil Nas X uses the sound of his voice in this moment to savvily leverage his collaboration with a country music icon and, by doing so, subtly draws out the respectability politics underlying Billboard’s racialized genre categorization of his song.

After each of Lil Nas X’s two verses in the original “Old Town Road,” we hear the refrain “can’t nobody tell me nothin.” The song’s texture is fairly sparse throughout, but the refrains feature some added elements. The 808-style kick drum and rattling hi-hats continue to dominate the soundscape, but they yield just enough room for the banjo sample to come through more clearly than in the verse, and it plucks out a double-time rhythm in the refrain. The vocals change, too, as Lil Nas X performs a call-and-response with himself. The call, “can’t nobody tell me nothin,” is center channel, just as his voice has been throughout the verse, but the response, “can’t tell me nothin,” moves into the left and right speakers, a chorus of Lil Nas X answering the call. Listen closely to these vocals, and you’ll also hear some pitch correction. Colloquially known as “autotune,” this is an effect purposely pushed to extreme limits to produce garbled or robotic vocals and is a technique most often associated with contemporary hip hop and R&B. Here, it’s applied to this melodic refrain, most noticeably on “nothin” in the call and “can’t” in the response.

After Billboard removed the song from the Hot Country chart in late March, country star Billy Ray Cyrus tweeted his support for “Old Town Road,” and by early April, Lil Nas X had pulled him onto the remix that would come to dominate the Hot 100. The Cyrus remix is straightforward: Cyrus takes the opening chorus, then Lil Nas X’s original version plays through from the first verse to the last chorus, at which point Cyrus tacks on one more verse and then sings the hook in tandem with Lil Nas X to close the song. Well, it’s straightforward except that, while Lil Nas X’s material sounds otherwise unaltered from the original version, the pitch correction is smoothed out so that the garble from the previous version is gone.

In order to figure out what happened to the pitch correction from the first to second “Old Town Road,” I’m bringing in a conceptual framework I’ve been tinkering with the last couple of years: the produced voice. Within this framework, all recorded voices are produced in two specific ways: 1) everyone performs their bodies in relation to gender, race, ability, sex, and class norms, and 2) everyone who sings on record has their voice altered or affected with various levels of technology. To think about a produced voice is to think about how voices are shaped by recording technologies and social technologies at the same time. Listening to the multiple versions of “Old Town Road” draws my attention specifically to the always collaborative nature of produced voices.

In performativity terms—and here Judith Butler’s idea in “Performative Acts and Gender Constitution: An Essay in Phenomenology and Feminist Theory” that “one is not simply a body, but, in some very key sense, one does one’s body” (521) is crucial—a collaboratively produced voice is a little nebulous, as it’s not always clear who I’m collaborating with to produce my voice. Sometimes I can (shamefully, I assure you) recognize myself changing the way my voice sounds to fit into some sort of, say, gendered norm that my surroundings expect. As a white man operating in a white supremacist, cisheteropatriarchal society, the deeper my voice sounds, the more authority adheres to me. (Well, only to a point, but that’s another essay). Whether I consciously or subconsciously make my voice deeper, I am definitely involved in a collaboration, as the frequency of my voice is initiated in my body but dictated outside my body. Who I’m collaborating with is harder to establish – maybe it’s the people in the room, or maybe my produced voice and your listening ears (read Jennifer Stoever’s The Sonic Color Line for more on the listening ear) are all working in collaboration with notions of white masculine authority that have long-since been baked into society by teams of chefs whose names we didn’t record.

In studio production terms, a voice’s collaborators are often hard to name, too, but for different reasons. For most major label releases, we could ask who applied the effects that shaped the solo artist’s voice, and while there’s a specific answer to that question, I’m willing to bet that very few people know for sure. Even where we can track down the engineers, producers, and mix and master artists who worked on any given song, the division of labor is such that probably multiple people (some who aren’t credited anywhere as having worked on the song) adjusted the settings of those vocal effects at some point in the process, masking the details of the collaboration. In the end, we attribute the voice to a singular recording artist because that’s the person who initiated the sound and because the voice circulates in an individualistic, capitalist economy that requires a focal point for our consumption. But my point here is that collaboratively produced voices are messy, with so many actors—social or technological—playing a role in the final outcome that we lose track of all the moving pieces.

Not everyone is comfortable with this mess. For instance, a few years ago long-time David Bowie producer Tony Visconti, while lamenting the role of technology in contemporary studio recordings, mentioned Adele as a singer whose voice may not be as great as it is made to sound on record. Adele responded by requesting that Visconti suck her dick. And though the two seemed at odds with each other, they were being equally disingenuous: Visconti knows that every voice he’s produced has been manipulated in some way, and Adele, too, knows that her voice is run through a variety of effects and algorithms that make her sound as epically Adele as possible. Visconti and Adele align in their desire to sidestep the fundamental collaboration at play in recorded voices, keeping invisible the social and political norms that act on the voice, keeping inaudible the many technologies that shape the voice.

Propping up this Adele-Visconti exchange is a broader relationship between those who benefit from social gender/race scripts and those who benefit from masking technological collaboration. That is, Adele and Visconti both benefit, to varying degrees, from their white femininity and white masculinity, respectively; they fit the molds of race and gender respectability. Similarly, they both benefit from discourses surrounding respectable music and voice performance; they are imbued with singular talent by those discourses. And on the flipside of that relationship, where we find artists who have cultivated a failure to comport with the standards of a respectable singing voice, we’ll also find artists whose bodies don’t benefit from social gender/race scripts: especially Black and Brown artists—non-binary, women, and men. Here I’m using “failure” in the same sense Jack Halberstam does in The Queer Art of Failure, where failing is purposeful, subversive. To fail queerly isn’t to fall short of a standard you’re trying to meet; it’s to fall short of a standard you think is bullshit to begin with. This kind of failure would be a performance of non-conformity that draws attention to the ways that systemic flaws – whether in social codes or technological music collaborations – privilege ways of being and sounding that conform with white feminine and white masculine aesthetic standards. To fail to meet those standards is to call the standards into question.

So, because respectably collaborating a voice into existence involves masking the collaboration, failing to collaborate a voice into existence would involve exposing the process. This would open up the opportunity for us to hear a singer like Ma$e, who always sings and never sings well, as highlighting a part of the collaborative vocal process (namely pitch correction, either through training or processing the voice) by leaving it out. To listen to Ma$e in terms of failed collaboration is to notice which collaborators didn’t do their work. In Princess Nokia’s doubled and tripled and quadrupled voice, spread carefully across the stereo field, we hear a fully exposed collaboration that fails to even attempt to meet any standards of respectable singing voices. In the case of the countless trap artists whose voices come out garbled through the purposeful misapplication of pitch correction algorithms, we can hear the failure of collaboration in the clumsy or over-eager use of the technology. This performed pitch correction failure is the sound I started with, Lil Nas X on the original lines “can’t nobody tell me nothin.” It’s one of the few times we can hear a trap aesthetic in “Old Town Road,” outside of its instrumental.

In each of these instances, the failure to collaborate results in the failure to achieve a respectably produced voice: a voice that can sing on pitch, a voice that can sing on pitch live, a voice that is trained, a voice that is controlled, a voice that requires no intervention to be perceived as “good” or “beautiful” or “capable.” And when respectable vocal collaboration further empowers white femininity or white masculinity, failure to collaborate right can mean failing in a system that was never going to let you pass in the first place. Or failing in a system that applies nebulous genre standards that happen to keep a song fronted by a Black artist off the country charts but allow a remix of the same song to place a white country artist on the hip hop charts.

The production shift on “can’t nobody tell me nothin” is subtle, but it brings the relationship between social race/gender scripts and technological musical collaboration into focus a bit. It isn’t hard to read “does not embrace enough elements of today’s country music” as “sounds too Black,” and enough people called bullshit on Billboard that the publication has had to explicitly deny that their decision had anything to do with race. Lil Nas X’s remix with Billy Ray Cyrus puts Billboard in a really tricky rhetorical position, though. Cyrus’s vocals—more pinched and nasally than Lil Nas X’s, with more vibrato on the hook (especially on “road” and “ride”), and framed without the hip hop-style drums for the first half of his verse—draw attention to the country elements already at play in the song and remove a good deal of doubt about whether “Old Town Road” broadly comports with the genre. But for Billboard to place the song back on the Country chart only after white Billy Ray Cyrus joined the show? Doing so would only intensify the belief that Billboard’s original decision was racially motivated. In order for Billboard to maintain its own colorblind respectability in this matter, in order to keep their name from being at the center of a controversy about race and genre, in order to avoid being the publication believed to still be divvying up genres primarily based on race in 2019, Billboard’s best move is to not move. Even when everyone else in the world knows “Old Town Road” is, among other things, a country song, Billboard’s country charts will chug along as if in a parallel universe where the song never existed.

As Lil Nas X shifted Billboard into a rhetorical checkmate with the release of the Billy Ray Cyrus remix, he also shifted his voice into a more respectable rendition of “can’t nobody tell me nothin,” removing the extreme application of pitch correction effects. This seems the opposite of what we might expect. The Billy Ray Cyrus remix is defiant, thumbing its nose at Billboard for not recognizing the countryness of the tune to begin with. Why, in a defiant moment, would Lil Nas X become more respectable in his vocal production? I hear the smoothed-out remix vocals as a palimpsest, a writing-over that, in the traces of its editing, points to the fact that something has been changed, therefore never fully erasing the original’s over-affected refrain. These more respectable vocals seem to comport with Billboard’s expectations for what a country song should be, showing up in more acceptable garb to request admittance to the country chart, even as the new vocals smuggle in the memory of the original’s more roboticized lines.

While the original vocals failed to achieve respectability by exposing the recording technologies of collaboration, the remix vocals fail to achieve respectability by exposing the social technologies of collaboration, feigning compliance and daring its arbiter to fail it all the same. The change in “Old Town Road”’s vocals from original to remix, then, stacks collaborative exposures on top of one another as Lil Nas X reminds the industry gatekeepers that can’t nobody tell him nothin, indeed.

_

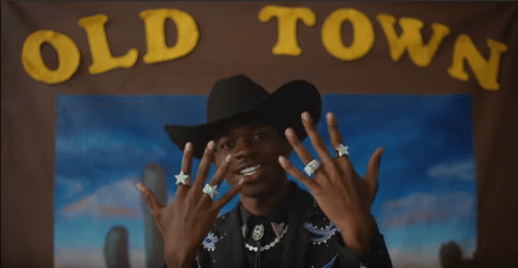

Featured image, and all images in this post: screenshots from “Lil Nas X – Old Town Road (Official Movie) ft. Billy Ray Cyrus” posted by YouTube user Lil Nas X

_

Justin aDams Burton is Assistant Professor of Music at Rider University. His research revolves around critical race and gender theory in hip hop and pop, and his book, Posthuman Rap, is available now. He is also co-editing the forthcoming (2018) Oxford Handbook of Hip Hop Music Studies. You can catch him at justindburton.com and on Twitter @j_adams_burton. His favorite rapper is one or two of the Fat Boys.

_

REWIND! . . .If you liked this post, you may also dig:

Vocal Anguish, Disinformation, and the Politics of Eurovision 2016-Maria Sonevytsky

Cardi B: Bringing the Cold and Sexy to Hip Hop-Ashley Luthers

“To Unprotect and Subserve”: King Britt Samples the Sonic Archive of Police Violence-Alex Werth

Echo and the Chorus of Female Machines

Editor’s Note: February may be over, but our forum is still on! Today I bring you installment #5 of Sounding Out!‘s blog forum on gender and voice. Last week Art Blake talked about how his experience shifting his voice from feminine to masculine as a transgender man intersects with his work on John Cage. Before that, Regina Bradley put the soundtrack of Scandal in conversation with race and gender. The week before I talked about what it meant to have people call me, a woman of color, “loud.” That post was preceded by Christine Ehrick‘s selections from her forthcoming book, on the gendered soundscape. We have one more left! Robin James will round out our forum with an analysis of how ideas of what women should sound like have roots in Greek philosophy.

This week Canadian artist and writer AO Roberts takes us into the arena of speech synthesis and makes us wonder about what it means that the voices are so often female. So, lean in, close your eyes, and don’t be afraid of the robots’ voices. –Liana M. Silva, Managing Editor

—

I used Apple’s SIRI for the first time on an iPhone 4S. After hundreds of miles in a van full of people on a cross-country tour, all of the music had been played and the comedy mp3s entirely depleted. So, like so many first time SIRI users, we killed time by asking questions that went from the obscure to the absurd. Passive, awaiting command, prone to glitches: there was something both comedic and insidious about SIRI as female-gendered program, something that seemed to bind up the technology with stereotypical ideas of femininity.

Speech synthesis is the artificial simulation of the human voice through hardware or software, and SIRI is but one incarnation of the historical chorus of machines speaking what we code to be female. Starting from the early 20th century Voder, to the Cold-War era Silvia and Audrey, up to Amazon’s newly released Echo, researchers have by and large developed these applications as female personae. Each program articulates an individual timbre and character, whether soothing, soft-spoken, or matter-of-fact; this is your mother, sister, or lover, here to affirm your interests while reminding you about that missed birthday. She is easy to call up in memory, tones rounded at the edges, like Scarlett Johansson’s smoky conviviality as Samantha in Spike Jonze’s Her, a bodiless purr. Simulated speech articulates a series of assumptions about what neutral articulation is, what a female voice is, and whose voice technology can ventriloquize.

The ways computers hear and speak the human voice are as complex as they are rapidly expanding. But in robotics gender is charted down to actual wavelength, actively policed around 100-150 Hz (male) and 200-250 Hz (female). Now prevalent in entertainment, navigation, law enforcement, surveillance, security, and communications, speech synthesis and recognition hold up an acoustic mirror to the dominant cultures from which they materialize. While they might provide useful tools for everything from time management to self-improvement, they also reinforce cisheteronormative definitions of personhood. Like the binary code that now gives it form, the development of speech recognition separated the entire spectrum of vocal expression into rigid biologically based categories. Ideas of a real voice vs. fake voice, in all their resonances with passing or failing one’s gender performance, have through this process been designed into the technology itself.
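To make the rigidity of that charting concrete, here is a minimal sketch in Python that sorts a voice's fundamental frequency into the two bands cited above. The function name `code_gender_by_pitch` and the `PITCH_BANDS_HZ` table are hypothetical illustrations, not code from any actual speech system; only the Hz ranges come from the figures quoted in this post.

```python
from typing import Optional

# Hypothetical illustration only: these bands are the figures quoted above,
# not thresholds taken from any real speech recognition system.
PITCH_BANDS_HZ = {
    "male": (100.0, 150.0),
    "female": (200.0, 250.0),
}


def code_gender_by_pitch(fundamental_hz: float) -> Optional[str]:
    """Return the band label a pitch falls into, or None if it fits neither."""
    for label, (low, high) in PITCH_BANDS_HZ.items():
        if low <= fundamental_hz <= high:
            return label
    # Voices between or beyond the two bands have no category at all.
    return None


if __name__ == "__main__":
    for hz in (120.0, 175.0, 220.0, 300.0):
        print(hz, code_gender_by_pitch(hz))
```

Under this scheme a voice at 175 Hz or 300 Hz returns `None`: the binary leaves no place for it, which is the designed exclusion at issue here.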

A SERIES OF MISERABLE GRUNTS

“Kempelen Speakingmachine” by Fabian Brackhane (Quintatoen), Saarbrücken – Own work. Licensed under Public Domain via Wikimedia Commons

The first synthesized voice came from a reed and bellows box invented by Wolfgang Von Kempelen in 1791 and shown off in the courts of the Hapsburg Empire. Von Kempelen had gained renown for his chess-playing Turk, a racist cartoon of an automaton that made waves amongst the nobles until it was revealed that underneath the tabletop was a small man secretly moving the chess player’s limbs. Von Kempelen’s second work, the speaking machine, wowed its audiences thoroughly. The player wheedled and squeezed the contraption, pushing air through its reed larynx to replicate simple words like mama and papa.

Synthesizing the voice has always required some level of making strange, of phonemic abstraction. Bell Laboratories originally developed The Voder, the earliest incarnation of the vocoder, as a cryptographic device for WWII military communications. The machine split the human voice into a spectral representation, fragmenting the source into a number of different frequencies that were then recombined into synthetic speech. Noise and unintelligibility shielded the Allies’ phone calls from Nazi interception. The Vocoder’s developer, Ralph Miller, bemoaned the atrocities the machine performed on language, reducing it to a “series of miserable grunts.”

From website Binary Heap

In his history of the Vocoder, How to Wreck a Nice Beach, Dave Tompkins tells how the apparatus originally took up an entire wall and was played solely by female phone operators, but the pitch of the female voice was said to be too high to be heard by the nascent technology. When it debuted at the 1939 World’s Fair, only men were chosen to experience the roboticization of their voice. The Voder was, in fact, originally created to only hear pitches in the range of 100-150 Hz, a designed exclusion from the start. So when the Signal Corps of the Army convinced then-General Eisenhower to call his wife via Voder from North Africa, Miller and the developers panicked for fear she wouldn’t be heard. Entering the Pentagon late at night, Mamie Eisenhower spoke into the telephone and a fragmented version of her words travelled across the Atlantic. Resurfacing in angular vocoded form, her voice urged her husband to come home, and he had no problem hearing her. Instead of giving the developers pause to question their own definitions of gender, this interaction is told as a derisive footnote in the history of sound and technology: the punchline being that the future first lady’s voice was heard because it was as low as a man’s.

WAKE WORDS

In fall 2014 Amazon launched Echo, their new personal assistant device. Echo is a 12-inch long plain black cone that stands upright on a tabletop, similar in appearance to a telephoto camera lens. Equipped with far field mics, Echo has a female voice, connected to the cloud and always on standby. Users engage Echo with their own chosen ‘wake’ word. The linguistic similarity to a BDSM safe word could have been lost on developers. Although here inverted, the word is used to engage rather than halt action, awakening an instrument that lies dormant awaiting command.

Amazon’s much-parodied promotional video for Echo is narrated by the innocent voice of the youngest daughter in a happy, straight, white, middle-class family. While the son pitches Oedipal jabs at the father for his dubious role as patriarchal translator of technology, each member of the family soon discovers the ways Echo is useful to them. They name it Alexa and move from questions like: “Alexa how many teaspoons in a tablespoon” and “How tall is Mt. Everest?” to commands for dance mixes and cute jokes. Echo enacts a hybrid role as mother, surrogate companion, and nanny of sorts not through any real aspects of labor but through the intangible contribution of information. As a female-voiced oracle in the early pantheon of the Internet of Things, Echo’s use value is squarely placed in the realm of cisheteronormative domestic knowledge production. Gone are the tongue-in-cheek existential questions proffered to SIRI upon its release. The future with Echo is clean, wholesome, and absolutely SFW. But what does it mean for Echo to be accepted into the home, as a female gendered speaking subject?

Concerns over privacy and surveillance quickly followed Echo’s release, alarms mostly sounding over its “always on” function. Amazon banks on the safety and intimacy we culturally associate with the female voice to ease the transition of robots and AI into the home. If the promotional video painted an accurate picture of Echo’s usage, it would appear that Amazon had successfully launched Echo as a bodiless voice over the uncanny valley, the chasm below littered with broken phalanxes of female machines. Masahiro Mori coined the now familiar term uncanny valley in 1970 to describe the dip in empathic response to humanoid robots as they approach realism.

If we listen to the litany of reactions to robot voices through the filters of gender and sexuality it reveals the stark inclines of what we might think of as a queer uncanny valley. Paulina Palmer wrote in The Queer Uncanny about reoccurring tropes in queer film and literature, expanding upon what Freud saw as a prototypical aspect of the uncanny: the doubling and interchanging of the self. In the queer uncanny we see another kind of rift: that between signifier and signified embodied by trans people, the tearing apart of gender from its biological basis. The non-linear algebra of difference posed by queer and trans bodies is akin to the blurring of divisions between human and machine represented by the cyborg. This is the coupling of transphobic and automatonophobic anxieties, defined always in relation to the responses and preoccupations of a white, able bodied, cisgendered male norm. This is the queer uncanny valley. For the synthesized voice to function here, it must ease the chasm, like Echo: sutured by a voice coded as neutral, but premised upon the imagined body of a white, heterosexual, educated middle class woman.

22% Female

My own voice spans a range that would have dismayed someone like Ralph Miller. I sang tenor in Junior High choir until I was found out for straying, and then warned to stay properly in the realms of alto, but preferably soprano range. Around the same time I saw a late night feature of Audrey Hepburn in My Fair Lady, struggling to lose her crass proletariat inflection. So I, a working class gender ambivalent kid, walked around with books on my head muttering The Rain In Spain Falls Mainly on the Plain for weeks after. I’m generally loud, opinionated and people remember me for my laugh. I have sung in doom metal and grindcore punk bands, using both screeching highs and the growling “cookie monster” vocal technique mostly employed by cismales.

Given my own history of toying with and estrangement from what my voice is supposed to sound like, I was interested to try out a new app on the market, the Exceptional Voice App (EVA), touted as “The World’s First and Only Transgender Voice Training App.” Functioning as a speech recognition program, EVA analyzes the pitch, respiration, and character of your voice with the stated goal of providing training to sound more like one’s authentic self. Behind EVA is Kathe Perez, a speech pathologist and businesswoman, the developer and provider of code to the circuit. And behind the code is the promise of giving proper form to rough sounds, pitch-perfect prosody, safety, acceptance, and wholeness. Informational and training videos are integrated with tonal mimicry for phrases like hee, haa, and ooh. User progress is rated and logged with options to share goals reached on Twitter and Facebook. Customers can buy EVA for Gals or EVA for Guys. I purchased the app online for my iPhone for $5.97.

My initial EVA training scores informed me I was 22% female, a recurring number I receive in interfaces with identity recognition software. Facial recognition programs consistently rate my face at 22% female. If I smile I tend to get a higher female response than with my neutral face, which is coded and read as male. Technology is caught up in these translations of gender: we socialize women to smile more than men, then write code for machines to recognize a woman in a face that smiles.

As for EVA’s usage, it seems to be a helpful pedagogical tool with more people sharing their positive results and reviews on trans forums every day. With violence against trans people persisting—even increasing—at alarming rates, experienced worst by trans women of color, the way one’s voice is heard and perceived is a real issue of safety. Programs like EVA can be employed to increase ease of mobility throughout the world. However, EVA is also out of reach to many, a classed capitalist venture that tautologically defines and creates users with supply. The context for EVA is the systems of legal, medical, and scientific categories inherited from Foucault’s era of discipline; the predetermined hallucination of normal sexuality, the invention of biological criteria to define the sexes and the pathologization of those outside each box, controlled by systems of biopower.

Despite all these tools we’ll never really know how we sound. It is true that the resonant chamber of our own skull provides us with a different acoustic image of our own voice. We hate to hear our voice recorded because suddenly we catch a sonic glimpse of what other people hear: sharper, more angular tones, higher pitch, less warmth. Speech recognition and synthesis work upon the same logic, the shifting away from interiority; a just off the mark approximation. So the question remains: what would a gender variant voice synthesis and recognition sound like? How much is reliant upon the technology and how much depends upon individual listeners, their culture, and what they project upon the voice? As markets grow, so too have more internationally accented English dialects been added to computer programs with voice synthesis. Thai, Indian, Arabic and Eastern European English were added to Mac OSX Lion in 2011. Can we hope to soon offer our voices to the industry not as a set of data to be mined into caricatures, but as a way to assist in the opening up of gender definitions? We would be better served to resist the urge to chime in and listen to the field in the same way we suddenly hear our recorded voice played back, with a focus on the sour notes of cold translation.

—

Featured image: “Golden People love Gold Jewelry Robots” by Flickr user epSos.de, CC BY 2.0

—

AO Roberts is a Canadian intermedia artist and writer based in Oakland whose work explores gender, technology and embodiment through sound, installation and print. A founding member of Winnipeg’s NGTVSPC feminist artist collective, they have shown their work at galleries and festivals internationally. They have also destroyed their vocal cords, played bass and made terrible sounds in a long line of noise projects and grindcore bands, including VOR, Hoover Death, Kursk and Wolbachia. They hold a BFA from the University of Manitoba and an MFA in Sculpture from California College of the Arts.

—

REWIND!…If you liked this post, you may also dig:

Hearing Queerly: NBC’s “The Voice”—Karen Tongson

On Sound and Pleasure: Meditations on the Human Voice—Yvon Bonefant

I Been On: BaddieBey and Beyoncé’s Sonic Masculinity—Regina Bradley
