Me & My Rhythm Box

I’m fortunate to have quite a few friends with eclectic musical tastes, who continually expose me to some of the best, albeit often obscure, sources for inspiration. They arrive as random selections sent with a simple “you’d appreciate this” note attached. Good friends that they are, they rarely miss the mark. Most intriguing is when a cluster of things from different people carries a similar theme, converging on a need on my part for some sort of musical action.

The Inspiration

A few years back I received a huge dump of gigabytes of audio and video. Within it were some concert footage and performances this friend and I had been discussing; I consumed those quickly in an effort to keep that conversation going. Tucked amidst that dump, however, was a copy of the movie Liquid Sky. I asked the friend about it because the description of the plot–“heroin-pushing aliens invade 80’s New York”–led me to believe it wasn’t really my thing (not a big fan of needles). Although my friend insisted I’d enjoy it, it took me several months, if not a whole year, before I finally pressed play.

Even though Liquid Sky was not my favorite movie by any measure, it was immediately apparent to my ears why my friend insisted I check it out. The film’s score was performed completely on a Fairlight CMI, capturing the synthesized undercurrent of the early 80’s New York music scene, more popularly seen in the cult classic Downtown 81, starring Jean-Michel Basquiat. While the performances in that movie are perhaps closer to my tastes, none of them compare to one scene from Liquid Sky that I fell in love with, instantly:

The song grabbed me so much, I quickly churned out a cover version.

Primus Luta “Me & My Rhythm Box (V1)”

While it felt good to make, there remained something less than satisfying about it. The cover had captured my sound, but at a moment of transition. More specifically, the means by which I was trying to achieve my sound at the time had shifted from a DAW-in-the-box aesthetic to a live performance feel, one that I had already begun writing about here on Sounding Out! in 2013. Interestingly, the inspiration to cover the song pushed me back to my in-the-box comfort zone.

It was good, but I knew I could do more.

As I said, these inspirations tend to group around a theme. Prior to receiving the Liquid Sky dump, I had received an email out of the blue from Hank Shocklee, producer and member of the Bomb Squad. I’ve been a longtime fan, and we had the opportunity to meet a few years prior. Since then he’s played a bit of a mentoring role for me. In the email he asked if I wanted to join, as the drummer, an experimental electronic jazz project he was pulling together.

I was taken aback. Hank Shocklee asking me to be his drummer. Honestly, I was shook.

Not that I didn’t know why he might think to ask me, but immediately I started to question whether I was good enough. Rather than dwell on those feelings, though, I started stepping up my game. While the project itself never came to fruition, Shocklee’s email led me to build my drmcrshr set of digital instruments.

A year or so later, I ran into Shocklee again when he was in Philadelphia for King Britt’s Afrofuturism event with mutual friend artist HPrizm. By this time I had already recorded the “Me and My Rhythm Box” cover. Serendipitously, HPrizm ended up dropping a sample from it in the midst of his set that night. A month or so later, HPrizm and I met up in the studio with longtime collaborator Takuma Kanaiwa to record a live set on which I played my drmcrshr instruments.

Not too long after, I received an email from NYC-based electronic musician Elucid, saying he was digging for samples on this awesome soundtrack. . .Liquid Sky.

The final convergence point had been hanging over my head for a while. Having finished the first part of my “Toward a Practical Language on Live Performance” series, I knew I wanted the next part to focus on electronic instruments, but wasn’t yet sure how to approach it. I had an inkling about a practicum on the actual design and development of an electronic instrument, but I didn’t yet have a project in mind.

As all of these things, people, and sounds came together–Liquid Sky, Shocklee, HPrizm, Elucid–it became clear that I needed to build a rhythm box.

The History

What stands out in Paula Sheppard’s performance from Liquid Sky is the visual itself. She stands in the warehouse performance space surrounded by 80’s scenesters, posing with one hand in the air and the mic in the other, while strapped to her side is her rhythm box, the Roland CR-78, wires dangling from it to connect to the venue’s sound system. She hits play to start the beat, launching into the ode for the rhythm machine.

Contextually, it’s far more performance art than music performance. There isn’t much evidence from the clip that the CR-78 is any more than a prop, as the synthesizer lines indicate the use of a backing track. The commentary in the lyrics, however, homes in on an intent to present the rhythm box as the perfect musical companion, reminiscent of comments Raymond Scott often made about his desire to make a machine to replace musicians.

My rhythm box is sweet

Never forgets a beat

It does its rule

Do you want to know why?

It is pre-programmed

Rhythm machines such as the CR-78 were originally designed as accompaniment machines, specifically for organ players. They came pre-programmed with a number of traditional rhythm patterns–the standards being rock, swing, waltz and samba–though the CR-78 had many more variations. Such machines were not designed to be instruments themselves; rather, musicians would play other instruments along to them.

In 1978 when the CR-78 was introduced, rhythm machines were becoming quite sophisticated. The CR-78 included automatic fills that could be set to play at set intervals, providing natural breaks for songs. As with a few other machines, selecting multiple rhythms could combine patterns into new rhythms. The CR-78 also had mute buttons and a small mixer, which allowed slight customization of patterns, but what truly set the CR-78 apart was the fact that users could program their own patterns and even save them.
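The pattern combining and automatic fills described above can be sketched in a few lines of Python. This is only an illustration, not a model of the CR-78’s circuitry: the 16-step grids, the pattern contents, and the names `combine` and `with_fill` are all invented for the example, and treating a combination as a step-by-step logical OR is an assumption.

```python
# Hypothetical 16-step patterns (1 = hit, 0 = rest). These are invented for
# illustration and are NOT transcribed from the CR-78's preset rhythms.
ROCK = [1, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, 0]
SAMBA = [1, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0]
FILL = [1, 0, 1, 0, 1, 0, 1, 0, 1, 1, 1, 1, 1, 1, 1, 1]


def combine(a, b):
    """Merge two patterns: a step sounds if either pattern has a hit there.

    Assumption: selecting two rhythms at once behaves like a logical OR.
    """
    return [x | y for x, y in zip(a, b)]


def with_fill(pattern, fill, bars, every):
    """Repeat `pattern` for `bars` bars, swapping in `fill` on every `every`-th bar."""
    song = []
    for bar in range(1, bars + 1):
        song.append(fill if bar % every == 0 else pattern)
    return song


combined = combine(ROCK, SAMBA)
song = with_fill(combined, FILL, bars=8, every=4)
```

Here `song` is a list of eight bars in which bars four and eight are the fill, which is roughly what setting a fill “at set intervals” amounts to.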

TR-808 (top) and TR-909

By the time it appeared in Liquid Sky, the CR-78 had already been succeeded by other CR lines culminating in the CR-8000. Roland also had the TR series, including the TR-808 and the TR-909; the latter was released in 1983, the year after Liquid Sky premiered.

In 1980, however, Roger Linn’s LM-1 premiered. What distinguished the LM-1 from other drum machines was that it used drum samples–rather than analog sounds–giving it more “real” sounding drum rhythms (for the time). The LM-1 and its successor, the LinnDrum, both had individual drum triggers for their sounds that could be programmed into user sequences or played live. These features in particular marked the shift from rhythm machines to drum machines.

In the post-MIDI decades since, we’ve come to think less and less about rhythm machines. With the rise of in-the-box virtual instruments, the idea of drum programming limitations (such as those found on most rhythm machines) seems absurd or arcane to modern tastes. People love the sounds of these older machines, as evidenced by the tons of analog drum samples and virtual and hardware clones/remakes on the market, but they want the level of control modern technologies have accustomed them to.

Controlling the Roland CR-5000 from an Akai MPC-1000 using a custom built converter

The general assumption is that rhythm machines aren’t traditionally playable and, considering how outdated their rhythms tend to seem, that they lack a modern sensibility. My challenge thus became clearer: I set out to build a rhythm machine that would challenge this notion while retaining the spirit of the traditional rhythm box.

Challenges and Limitations

At the outset, I wanted to base my rhythm machine on analog circuitry. I had previously built a number of digital drum machines–both sample and synthesis-based–for my Heads collection. Working in the analog arena allowed me to approach the design of my instrument in a way that respected the limitations my rhythm machine predecessors worked with and around.

By this time I had spent a couple of years mentoring with Jeff Blenkinsopp at The Analog Lab in New York, a place devoted to helping people from all over the world gain “further understanding the inner workings of their musical equipment.” I had already designed a rather complex analog signal processor, so I felt comfortable in the format. However, I hadn’t truly honed my skills around instrument design. In many ways, I wanted this project to be the testing ground for my own ability to create instruments, but prior experience taught me that going into such a complex project without the proper skills would be self-defeating. Even more, my true goal was centered more on functionality than on details like circuit board designs for individual sounds.

To avoid those rabbit holes–at least temporarily, I’ve since gone full circuit design on my analog sound projects–I chose to use DIY designs from the modular synth community as the basis for my rhythm box. That said, I limited myself to designs that featured analog sound sources, and only allowed myself to use designs that were available as PCB only. I would source all my own parts, solder all of my boards and configure them into the rhythm machine of my dreams.

Features

The wonderful thing about the modular synth community is that there is a lot of stuff out there. The difficult thing about the modular synth community is that there’s a lot of stuff out there. If you’ve got enough rack space, you can pretty much put together a modular that will perform whatever functionality you want. How modules patch together fundamentally defines your instrument, making module selection the most essential process. I was aiming to build a more semi-modular configuration, forgoing the patch cables, but that didn’t make my selection any easier. I wanted to have three sound sources (nominally: kick, snare and hi-hat), a sequencer and some sort of filter, which would all flow into a simple monophonic mixer design of my own.

For the sounds I chose a simple kick module from Barton, and the Jupiter Storm unit from Hex Inverter. The sound of the kick module was rooted enough in the classic analog sound while offering enough modulation points to make it mutable. The triple square wave design of the Jupiter Storm really excited me, as it had the range to pull off hi-hat and snare sounds in addition to other percussive and drone sounds. Plus, it featured two outputs, giving me all three of my voices in two PCB sets.

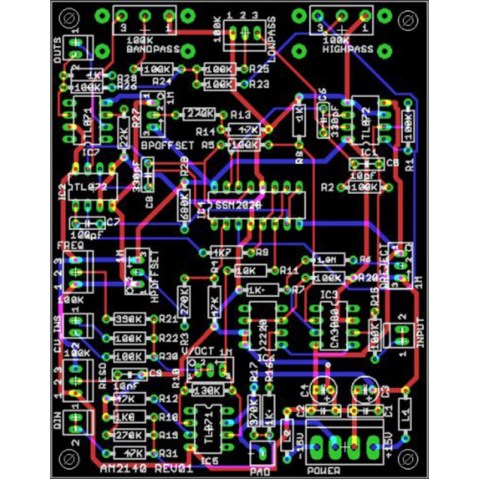

Filters are often considered the heart of a modular setup, as the way they shape the sound tends to define its character. In choosing one for my rhythm machine, the main thing I wanted was control over multiple frequency bands. Because there would be three different sound sources, I needed to be able to tailor the filter for a wide spectrum of sounds. As such, I chose the AM2140 Resonant Filter.

I had no plans to include triggers for the sounds on my rhythm machine, so the sequencer was going to be the heart of the performance, as it would be responsible for any and all triggering of sounds. Needing to control three sounds simultaneously without any stored memory was quite a tall order, but fortunately I found the perfect solution in the amazing Turing Machine modules. With its expansion board, the Turing Machine can put out four different patterns based on its main pattern creator, which can create fully random patterns or patterns that mutate as they progress.
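The idea of a pattern that can be fully random or can slowly mutate is often implemented as a looping shift register, and a rough software sketch may help make it concrete. The Python below is an illustration under stated assumptions, not a description of the Turing Machine’s actual circuit: the class name `LoopingShiftRegister`, the `mutation` parameter, and the tap positions used to derive four outputs are all hypothetical.

```python
import random


class LoopingShiftRegister:
    """Sketch of a looping shift-register sequencer with a mutation control.

    Assumption: mutation=0.0 locks the loop, mutation=1.0 is fully random.
    The real module's control may be laid out differently.
    """

    def __init__(self, length=16, mutation=0.0, seed=None):
        self.rng = random.Random(seed)
        self.mutation = mutation
        self.bits = [self.rng.randint(0, 1) for _ in range(length)]

    def step(self):
        """Advance one clock tick and return a copy of the register."""
        recycled = self.bits.pop()  # bit falling off the end of the register
        if self.rng.random() < self.mutation:
            recycled = self.rng.randint(0, 1)  # replace it with a coin flip
        self.bits.insert(0, recycled)  # feed it back in at the front
        return list(self.bits)


def triggers(bits, taps=(0, 3, 5, 7)):
    """Read four gate outputs from four (hypothetical) tap positions."""
    return tuple(bits[t] for t in taps)


# A slowly mutating 8-step loop driving four trigger outputs.
seq = LoopingShiftRegister(length=8, mutation=0.1, seed=42)
for _ in range(16):
    kick, snare, hat, extra = triggers(seq.step())
```

With `mutation` at zero the register simply recirculates, so the same pattern repeats every `length` steps; raising it lets the loop drift, which is one way to get evolving rhythms without any stored memory.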

The Results

I spent a couple of weeks, after getting all the PCBs, parts, and hardware together, wiring and rewiring connections until I got comfortable with how all of these parts were interacting with each other. I was fortunate to happen upon a vintage White Instruments box, which formerly housed an attenuation meter, that was perfect for my machine. After testing with cardboard, I laid out my own faceplates and put everything in the box. As soon as I plugged it in and started playing, I knew I had succeeded.

Early test of RIDM before it went in the Box

I call it the RIDM Box (Rhythmically Intelligent Drum Machine Box). I’ve been playing it now for over two years, to the point where today I would say it is my primary instrument. Almost immediately afterward I built a companion piece called the Snare Bender, which works both as a standalone and as a controller for the RIDM Box. That one I did from scratch, hand-wired with no layouts.

While this is by no means a standard approach to modern electronic instrument design (if a standard approach even exists), what I learned through the process is really the value of looking back. With so much of modern technology being future forward in its approach, the assumption is that we’re at better starting positions for innovation than our predecessors. While we have so many more resources at our disposal, I think the limitations of the past were often more conducive to truly innovative approaches. By exploring those limitations with modern eyes, a doorway opened up for me, the result of which is an instrument like no other, past or present.

I will probably continue playing the two of these instruments together for a while, but ultimately I’m leaning toward a new original design which takes the learnings from these projects and fully fleshes out the performing instrument aspect of analog design. In the meantime, my process would not be complete if I did not return to the original inspiration. So I’ll leave you with the RIDM Box version of “Me & My Rhythm Box,” available on my library sessions release for the instrument.

—

Primus Luta is a husband and father of three. He is a writer and an artist exploring the intersection of technology and art, and their philosophical implications.

—

REWIND!…If you liked this post, you may also dig:

Heads: Reblurring The Lines–Primus Luta

Into the Woods: A Brief History of Wood Paneling on Synthesizers*–Tara Rodgers

Afrofuturism, Public Enemy, and Fear of a Black Planet at 25—andré carrington

Trap Irony: Where Aesthetics Become Politics

This beat ‘bout to get murdered

Thought this was Future when I heard it

Desiigner sounds kinda like Future. Probably you’ve noticed? Everyone else has. While some reactions are a register of genuine surprise that “Panda” isn’t a Future song (cf Uncle Murda epigraph), many are a combination of reflexive skepticism about Desiigner’s authenticity (He’s never even been to Atlanta!!)–or even the authenticity of New York as a hip hop city–alongside a sort of schadenfreude over his ability to notch a higher-charting song than Future has ever managed (“Panda” hit #1 for two weeks in May 2016). This latter observation is certainly true: Southern trap god Future has cracked the Billboard Hot 100 top 10 just once, as a featured artist on Lil Wayne’s “Love Me,” and his other appearances in the top 30 are similarly collaborations. (My discussion of trap focuses here on the hip hop wing of trap. The related but not identical EDM genre also called “trap” lies outside the scope of this particular analysis.) But pointing to the chart “failure” of Future’s singles is also entirely disingenuous, as all four of his official album releases have landed in the Billboard 200 top 10, including a #1 for 2015’s DS2 and 2016’s EVOL. In other words, Future isn’t exactly struggling to be relevant, which is why the nearly reflexive journalistic pairing of “Desiigner sounds like Future” and “Desiigner’s song is more successful than any Future song” gets my critical side-eye popping. The reception of Desiigner as a fake-but-more-successful Future strikes me as a dig at trap music as an easily replicable and therefore unserious genre. Here, I’m listening closely to the ways Desiigner’s vocals sound like Future as an entry point to trap’s political work: a sonic aesthetics of dis-organized polity, of sonic blackness in a post-racial society that I call trap irony.

Sounds Like Future

Though I’ve found several instances of writers comparing Desiigner to Future, that comparison usually includes little detailed support about the Future-istic elements of Desiigner’s sound. There are a number of sonic cues in “Panda” that could lead listeners to mistake the singer for Future, but I’m going to focus on the most obvious similarity: Desiigner’s recorded vocals share timbral and affective similarities to some of Future’s recorded vocals. When critics say Desiigner sounds like Future, the vocals are likely their main point of reference, so I’ve identified five points of sonic similarity between Desiigner and Future.

- Desiigner’s voice on “Panda” is detuned, resonating slightly off pitch with the instrumental, a technique so common in Future songs that I could link to any number of examples. Here are four, all released in the last two years, as a representative sample: “Stick Talk,” “Where Ya At (feat. Drake),” “March Madness,” and “Codeine Crazy.”

- Second, Desiigner delivers his vocals with a flat affect, conveying little emotion through inflection. Listen to the sections in the video above where he repeats the word “panda” [0:33-39, 1:38-46, 2:44-52, 3:51-58]. These repetitions precede each verse and then punctuate the end of the song. Rhythmically they signal what should be a turn-up— a run of at least a measure’s worth of eighth notes just before the full beat drops. But Desiigner’s recitation is emotionless, each instance of the word sounding just like the last. Throughout the rest of the song, if a listener didn’t understand the words, it would be hard to guess what Desiigner is rapping about based on any emotive signals. Love? Aggression? Loss? The vocal performance is reportorial, dispassionate. Future adopts a similar technique in up-tempo songs. His repetition of the words “jumpman” (1:08-10) and “noble” (1:28-30) in “Jumpman” and the word “wicked” (0:13-24) in “Wicked” provide parallels to Desiigner’s recitation of “panda.” And in “Ain’t No Time,” Future delivers lines about his clothes and money as casually as he predicts his enemies ending up outlined in chalk (0:13-26); just as in “Panda,” a listener who didn’t catch the lyrics to “Ain’t No Time” wouldn’t be able to attach any particular emotional content to the song.

- Speaking of not catching lyrics, Desiigner and Future are both notorious mushmouths: enunciation is optional. A number of online videos and fluff posts revolve around the fact that it’s hard to make out what Desiigner or Future is saying.

- Both Desiigner’s and Future’s performed voices seem to sit low in their registers, produced by opening the backs of their throats and elongating their vocal cords. For context, both artists seem to speak in the same register their recorded vocals fall in, and each is also likely to perform their vocals a little higher in a live setting.

- The bulk of “Panda”’s verses are in “Migos flow.” Named for the ATL trap trio who popularized it in their song, “Versace,” Migos flow is a triplet figure that rises from low to high, 3-1-2 (where 1 is the downbeat). The first twenty seconds of the “Versace” link above is a constant string of Migos flow. It’s pervasive throughout “Panda,” but 0:49-52 stacks two Migos flow lines back-to-back. Future’s verse on Drake’s “Digital Dash” (0:18-2:00) is a good example of an extended Migos flow.

In other words, Desiigner does sound like Future in some significant ways. But that’s not all he sounds like. Detuned vocals aren’t just a Future thing. Adam Krims theorizes this as part of the “hip hop sublime,” and it’s especially common among Southern rappers (for example, Young Jeezy sounded like Future before Future even did) (73-74). Many trap artists rap in a way that confounds efforts to understand what they’re saying; Young Thug, for instance, employs a vocal style distinct from Future and Desiigner but is equally difficult to understand. And the Migos flow, as partially demonstrated in this video, is not Future’s (or Migos’s) proprietary style. It’s been adopted by several (especially Southern) rappers, most recently in conjunction with trap. The elements I describe in the list above point to some specific ways Desiigner sounds like Future, which in turn points to ways that Desiigner sounds, more broadly, like trap.

The “Panda” beat, which comes from UK producer Menace, bears this out. Southern trap, as can be heard by surveying the songs linked above, features instrumentals with deep, tuned kick drums, usually dry 808 snares, high and bright synth lines, and punctuation from low brass and strings (0:40-1:33 in “Panda,” for the latter). This low/high frequency spread, with the mid-range mostly open, characterizes a good deal of trap music; the freed mid-range leaves more room for the bass to be amplified to soul-rattling levels without crowding out the rest of the instrumental. Also, one of the most iconic sonic elements of trap is the rattling hihat, cruising through subdivisions of the beat at inhuman rates (for instance, Metro Boomin’s hats at 0:16 in the aforementioned “Digital Dash” rattle but good when the full beat drops). Here’s the thing about “Panda,” though: those hats don’t rattle. Instead, they enter oh-so-quietly at 1:06 and bang out a steady eighth note pattern punctuated with a crash cymbal on every fourth beat until the end of the verse.

Sounds Like Trap

The missing hihats are an important piece of “Panda”’s sonic puzzle, and point to some broader observations about trap aesthetics as politics, what I’m calling trap irony. Trap music moves through society in ways it shouldn’t. The image of the trap is a house with only one way in and out, yet trap aesthetics produce a music that seems to constantly find a secret exit, a path not offered, a way around established norms. Materially, the bulk of trap music circulates through and out of Atlanta on mixtapes, beyond the purview of major record labels and, in part because it isn’t controlled by labels, at an astonishing rate—for instance, from January 2015-February 2016, Future released four mixtapes and two official albums. Moreover, trap reverberates as sonic blackness in a society whose mainstream has been explicitly peddling a post-racial ideology for nearly a decade. Trap aesthetics become trap politics.

Sonic blackness, as Nina Sun Eidsheim defines it and as Regina Bradley has expanded it, is the interplay of vocal timbre and current norms about what constitutes blackness; it’s a moving target that nonetheless shapes and is shaped by a society’s notions of race and racialization (Eidsheim, 663-64). In the case of trap, I argue that its sonic blackness is apparent in the context of post-racial ideology. Post-race politics depends on the notion that racism has ended and that race doesn’t matter anymore. In this framework, as Jared Sexton argues in Amalgamation Schemes, multiracialism, the blending of many races together until distinct racial backgrounds are purportedly indecipherable, becomes the ideal. The problem Sexton finds with multiracialism as a discourse is that it doesn’t account for the historical racial hierarchies that institutionalize whiteness as ideal; rather, multiracialism “is a tendency to neutralize the political antagonism set loose by the critical affirmation of blackness” (65).

Trap irony describes the way trap picks up recognizable markers of hip hop blackness (urban spaces, violence, drugs, sexual voracity, conspicuous consumption) so that its existence becomes an affirmation of blackness in a post-racial milieu. In fact, ironies abound in trap. Kemi Adeyemi has written about the use of lean, the codeine-based concoction of choice for many Dirty Southern rappers, as “generat[ing] productively intoxicated states that counter the violent realities of a particularly black everyday life” (first emphasis mine). LH Stallings has argued for the hip hop strip club — trap’s home away from home — to be understood as an always already queer space despite its surface heteronormativity. I’ve elsewhere used Stallings’s “black ratchet imagination” to think about party politics in the south, the way a group like Rae Sremmurd use party music as a refusal to produce and re-produce for the benefit of whiteness. The flat affect of rappers like Desiigner and Future is a similar shirking of emotional labor; where an artist like Kendrick Lamar brings fire and brimstone, Future shows up with dispassionate Autotune warble. Intoxicated but productive, heteronormative but queer, partying but political, affected but flat: in each case, we can hear trap irony navigating the complex assemblages of blackness in a purportedly post-racial society.

The last piece of the “Panda” puzzle is another trap irony, the sonification of a dis-organized polity, a bloc that doesn’t voice its interests as one. Listening to “Panda,” it’s hard to notice that the rattling hihat, integral to so much ATL trap, is missing. That’s because Desiigner vocalizes it himself. Throughout the track, he adds a handful of background vocals that trigger at seemingly random points. Unlike the flat affect of his flow, Desiigner’s vocal ad-libs are full of energy, as if he’s egging himself on. One of these vocals is “brrrrrrrrrrrrrrrah,” a tongue roll of varying lengths that replaces the missing hihat rattle. Listen back to the other trap songs I’ve linked in this essay, or check out nearly any track from trap artists like Young Thug, Rae Sremmurd, or Kevin Gates, and you’ll hear the pervasiveness of the hyped trap background vocals.

Trap background vocals, like the aesthetics, politics, and economy of trap itself, are a messy business. Desiigner’s background vocals on “Panda” move in meter and sometimes lock into a sequence, but he triggers enough different ones at unexpected moments that a listener can’t know exactly what sound to expect next nor when it will occur. Desiigner sounds like Future, which is to say he sounds like trap, which is to say he sounds like blackness, and his background vocals, which he turns up loud, are emblematic of the aesthetics and politics of trap. Trap irony means that a genre that renders blackness audible in 2016 does so not through a multiracial neutralization of the critical affirmation of blackness, but by setting loose a disparate set of recognizably black voices sounding from all directions, rattling across the soundscape, routing themselves through any path that doesn’t lead to the designated entry/exit point of the trap.

—

Justin D Burton is Assistant Professor of Music at Rider University, and a regular writer at Sounding Out!. His research revolves around critical race and gender theory in hip hop and pop, and his current book project is called Posthuman Pop. He is co-editor with Ali Colleen Neff of the Journal of Popular Music Studies 27:4, “Sounding Global Southernness,” and with Jason Lee Oakes of the Oxford Handbook of Hip Hop Music Studies (2017). You can catch him at justindburton.com and on Twitter @justindburton. His favorite rapper is Right Said Fred.

—

REWIND!…If you liked this post, you may also dig:

Slow, Loud, and Bangin’: Paul Wall talks “slab god” sonics–Doug Doneson

“The (Magic) Upper Room: Sonic Pleasure Politics in Southern Hip Hop“–Regina Bradley

“Tomahawk Chopped and Screwed: The Indeterminacy of Listening“–Justin Burton
