“People’s lives are at stake”: A conversation about Law, Listening, and Sound between James Parker and Lawrence English

Lawrence English is a composer, media artist and curator based in Australia. Working across an eclectic array of aesthetic investigations, English makes work that prompts questions of field, perception and memory. He investigates the politics of perception through live performance and installation, creating works that ponder subtle transformations of space and ask audiences to become aware of that which exists at the edge of perception.

James Parker is a senior lecturer at Melbourne Law School, where he is also Director of the research program ‘Law, Sound and the International’ at the Institute for International Law and the Humanities. James’ research addresses the many relations between law, sound and listening, with a particular focus at the moment on sound’s weaponisation. His monograph Acoustic Jurisprudence: Listening to the Trial of Simon Bikindi (OUP 2015) explores the trial of Simon Bikindi, who was accused by the International Criminal Tribunal for Rwanda of inciting genocide with his songs (30% discount available with the code ALAUTHC4). James is also a music critic and radio broadcaster. He will be co-curating an exhibition and parallel public program on Eavesdropping at the Ian Potter Museum of Art in Melbourne between July and October 2018.

Lawrence English: James, thanks for taking the time to correspond with me. I was interested in having this conversation with you as we’re both interested in sound, but perhaps approaching its potential applications and implications in somewhat different ways. And yet we have a good deal of potential crossover in our sonic interests too, particularly in the way that meaning is sought and extracted from our engagements with sound: how that meaning is constructed, and what is extracted and amplified from those possible, meaningful readings of sound in time and place. I read with great interest your work on acoustic jurisprudence, specifically how you almost build a case for an ontological position that’s relational between sound and the law. I wondered if you could perhaps start with a summary of this framework you’re pushing towards? I am interested to know how you have approached this potentiality in the meaning of sound, and the challenges that lie in working around an area that is still so diffuse, at least in a legal setting.

James Parker: Let me begin by saying a sincere thank you for the invitation. As a long-time fan of your work, it’s a pleasure. It’s also symptomatic in a way, because – so far at least – the art world has been much more interested in my research than the legal academy. When I’m in a law faculty and I say that my work is about law’s relationship(s) with sound, people are mostly surprised, sometimes they’re interested, but they rarely care very much. I don’t mean this as a slight. It’s just that their first instinct is always that I’m doing something esoteric: that my work doesn’t really ‘apply’ to them as someone interested in refugee law, contract, torts, evidence, genocide, or whatever the case may be. As you point out though, that’s not the way I see it at all. One of the things I’ve tried to show in my work is just how deeply law, sound and listening are bound up with each other. This is true in all sorts of different ways, whether or not the relationship is properly ‘ontological’.

Image by Flickr User Frank Hebbert, (CC BY-NC-ND 2.0)

At the most obvious level, the soundscape (both our sonic environment and how we relate to it) is always also a lawscape. Our smartphones, loudspeakers, radios and headsets are all proprietary, as is the music we listen to on them and the audio-formats on which that music is encoded. Law regulates and fails to regulate the volume and acoustic character of our streets, skies, workplaces, bedrooms and battlefields. Courts and legislatures claim to govern the kinds of vocalizations we make – what we can say or sing, where and when – and who gets to listen. As yet another music venue, airport, housing development or logging venture receives approval, new sounds enter the world, others leave it and things are subtly reconstituted as a result.

What’s striking when you look at the legal scholarship, however, is that how sound is conceived for such purposes gets very little attention. There are exceptions: in the fields of copyright and anti-noise regulation particularly. But for the most part, legal thought and practice is content to work with ‘common sense’ assumptions which would be immediately discredited by anyone who spends their time thinking hard about what sound is and does. So as legal academics, legislators, judges, and so on, we need to be much better at attending to law’s ‘sonic imagination’. When an asylum seeker is denied entry to the UK because of the way he pronounces the Arabic word for ‘tomato’ (which actually happened…the artist Lawrence Abu Hamdan has done some fantastic work on this), what set of relations between voice, accent and citizenship is at stake? When a person is accused of inciting genocide with their songs (in this instance a Rwandan musician called Simon Bikindi), what theory or theories of music manifest themselves in the decision-making body’s discourse and in the application of its doctrine? These are really important questions, it seems to me. To put it bluntly: people’s lives are at stake.

Microphone where attorneys present arguments at the Iowa Court of Appeals, Image by Flickr User Phil Roeder, (CC BY-NC-ND 2.0)

Another way of thinking about the law-sound relation would be to think about the role played by sound in legal practice: in courtrooms, legislatures etc. For a singer to be tried for genocide, for instance, his songs must be heard. Audio and audio-video recordings must be entered as evidence and played aloud to the court; a witness or two may sing. How? When? Why? The judicial soundscape is surprisingly diverse, it turns out. Gavels knock (at least in some jurisdictions), oaths are sworn, judgment is pronounced; and all of this increasingly into microphones, through headsets, and transmitted via audio-video link to prisons and elsewhere. This stuff matters. It warrants thinking about.

Outside the courtroom, sound is often the medium of law’s articulation: what materialises it, gives it reality, shape, force and effect. Think of the police car’s siren, for instance, or a device like the LRAD, which I know you’re also interested in. Or in non-secular jurisdictions, we could think equally of the church bells in Christianity, the call to prayer in Islam or the songlines of Aboriginal Australia. The idea that law today is an overwhelmingly textual and visual enterprise is pretty commonplace. But it’s an overstatement. Sound remains a key feature of law’s conduct, transmission and embodiment.

“Area Man Cheers for LRAD Arrival,” Pittsburgh G-20 summit protests, 2009, Image by Flickr User Jeeves, (CC BY-NC-ND 2.0)

And to bring me back to where I started, I feel like artists and musicians are generally better tuned in to this than us lawyers.

English: Given that the voice, and I suppose I mean both literally and metaphorically, reigns so heavily in the development and execution of the law, it’s surprising that the discourses around sound aren’t a little more engaged. That being said, it’s not that surprising really, as I’d argue that until recently the broader conversations around sound and listening have been rather sparse. It’s only really in the past three decades that we have started to see a swell of critical writings around these topics. The past decade particularly has produced a wealth of thought that addresses sound.

Early Dictaphone, Museum of Communication, Image by Flickr User Andy Dean, (CC BY-NC-ND 2.0)

I suppose, though, that this situation you describe in the law is tied back into the questions that surround the recognition of sound and the complexities of audition more generally. I can’t help but feel that sound has suffered historically from a lack of theoretical investigation. Partly this is due to the late development of tools that provided the opportunity for sound to linger beyond its moment of utterance. That recognition of the subjectivity of audition, revealed in those first recordings of the phonograph, must have been a powerful moment. In that second, suddenly, it was apparent that how we listen, and what it is we extract from a moment-to-moment encounter with sound, is entirely rooted in our agency and intent as a listener. The phonograph’s capturing of audio, by contrast, is without this socio-cultural agency. It’s a receptacle that’s technologically bound in the absolute.

I wonder if part of the anxiety, if that’s the word that could be used, around the way that sound is framed in a legal sense is down to its impermanence: that until quite recently we had to accept the experience of sound as entirely tethered to that momentary encounter. I sense that the law is slow to adapt to new forms and structures. Where do you perceive the emergence of sound as a concern for law? At what point did the law start to listen?

Parker: Wow, there’s so much in this question. In relation to your point about voice, of course lawyers do ‘get it’ on some level. If you speak to a practitioner, they’re sure to have an anecdote or two about the sonorous courtroom and the (dark) arts of legal eloquence. You may even get an academic to recognise that a theory of voice is somehow implicit in contemporary languages of democracy, citizenship and participatory politics: this familiar idea that (each of us a little sovereign) together we manifest the collective ‘voice’ of the people. But you’ll be hard pushed to find anyone in the legal academy actually studying any of this (outside the legal academy, I can thoroughly recommend Mladen Dolar’s incredible A Voice and Nothing More, which is excellent – if brief – on the voice’s legal and political dimensions). One explanation, as you say, might be that it’s only relatively recently that a discourse has begun to emerge around sound across the academy: in which case law’s deafness would be symptomatic of a more general inattention to sound and listening. That’s part of the story, I think. But it’s also true that the contemporary legal academy has developed such an obsession with doctrine and the promise of law reform that really any inquiry into law’s material or metaphysical aspects is considered out of the ordinary. In this sense, voice is just one area of neglect amongst many.

As for your points about audio-recording, it’s certainly true that access to recordings makes research on sound easier in some respects. There’s no way I could have written my book on the trial of Simon Bikindi, for instance, without access to the audio-archive of all the hearings. Having said that, I’m pretty suspicious of this idea that the phonograph, or for that matter any recording/reproductive technology could be ‘without agency’ as you put it. It strikes me that the agency of the machine/medium is precisely one of the things we should be attending to.

English: I may have been a little flippant. I agree there’s nothing pure about any technology and we should be suspicious of any claims towards that. It seems daily we’re reminded that our technologies and their relationships with each other pose a certain threat, whether that be privacy through covert recording or potential profiling as suggested by the development of behavioral recognition software with CCTV cameras.

Parker: Not just that. CCTV cameras are being kitted out with listening devices now too. There was a minor controversy about their legality and politics earlier this year in Brisbane in fact.

“Then They Put That Up There”–Shotspotter surveillance mic on top of the Dolores Mission, Image by Flickr User Ariel Dovas, (CC BY-NC-ND 2.0)

English: Thinking about sound technologies, at the most basic level, the pattern of the microphone, cardioid, omni and the like, determines a kind of possibility for the articulation of voice and its surrounds. I think the microphone conveys a very strong political position in that its design lends itself so strongly to the power of the singular voice. That has manifested itself in everything from media conferences and our political institutions through to the inane power plays of ‘lead singers’ in 1980s hard rock. The microphone encourages, both in its physical and acoustic design, a certain singular focus. It’s this singularity that some artists, say those working with field recording, are working against. This has been the case in some of the field recordings I have undertaken over the years. It has been a struggle to address my audition and contrast it with that of the microphone. How might these two rather distinct fields of audition be brought into relief? I imagine these implications extend into the courtroom.

Parker: Absolutely. Microphones have been installed in courtrooms for quite a while now, though not necessarily (or at least not exclusively) for the purposes of amplification. Most courts are relatively small, so when mics do appear it’s typically for archival purposes, and especially to assist in the production of trial transcripts. This job used to be done by stenographers, of course, but increasingly it’s automated.

Stenographer, Image by Flickr User Mike Gifford, (CC BY-NC-ND 2.0)

So no, in court, microphones don’t tend to be so solipsistic. In fact, in some instances they can help facilitate really interesting collective speaking and listening practices. At institutions like the International Criminal Tribunal for Rwanda, or for the Former Yugoslavia, for instance, trials are conducted in multiple languages at once, thanks to what’s called ‘simultaneous interpretation’. Perhaps you’re familiar with how this works from the occasional snippets you see of big multi-national conferences on the news, but the technique was first developed at the Nuremberg Trials at the end of WWII.

What happens is that when someone speaks into a microphone – whether it’s a witness, a lawyer or a judge – what they’re saying gets relayed to an interpreter watching and listening on from a soundproof booth. After a second or two’s delay, the interpreter starts translating what they’re saying into the target language. And then everyone else in the courtroom just chooses what language they want to listen to on the receiver connected to their headset. Nuremberg operated in four languages, the ICTR in three. And of course, this system massively affects the nature of courtroom eloquence. Because of the lag between the original and interpreted speech, proceedings move painfully slowly.

The United Nations Human Rights Council investigates possible violations committed during the Israeli offensive in the Gaza Strip, 27 December 2008 to 18 January 2009. Image courtesy of the United Nations Geneva via Flickr (CC BY-NC-ND 2.0)

Courtroom speech develops this odd rhythm whereby everyone is constantly pausing mid-sentence and waiting for the interpretation to come through. And the intonation of interpreted speech is obviously totally different from the original too. Not only is a certain amount of expression or emotion necessarily lost along the way, the interpreter will have an accent, they’ll have to interpret speech from both genders, and then – because the interpreter is performing their translation on the fly (this is extraordinarily difficult to do by the way… it takes years of training) – inevitably they end up placing emphasis on odd words, which can make what they say really difficult to follow. As a listener, you have to concentrate extremely hard: learn to listen past the pauses, force yourself to make sense of the stumbling cadence, strange emphasis and lack of emotion.

On one level, this is a shame of course: there’s clearly a ‘loss’ here compared with a trial operating in a single language. But if it weren’t for simultaneous interpretation, these Tribunals couldn’t function at all. You could say the same about the UN as a whole actually.

English: I agree. Though for what it’s worth it does seem as though we’re on a pathway to taking the political and legal dimensions of sound more seriously. Your research is early proof of that, as are cases such as Karen Piper’s suit against the city of Pittsburgh in relation to police use of an LRAD. As far as the LRAD is concerned, along with other emerging technologies like the Hypershield and the Mosquito, it’s as though sound’s capacity for physical violence, and the way this is being harnessed by police and military around the world somehow brings these questions more readily into focus.

Parker: I think that’s right. There’s definitely been a surge of interest in sound’s ‘weaponisation’ recently. In terms of lawsuits, in addition to the case brought by Karen Piper against the City of Pittsburgh, LRAD use has been litigated in both New York and Toronto, and there was a successful action a couple of years back in relation to a Mosquito installed in a mall in Brisbane.

On the more scholarly end of things, Steve Goodman’s work on ‘sonic warfare’ has quickly become canonical, of course. But I’d also really recommend J. Martin Daughtry’s new book on the role of sound and listening in the most recent Iraq war. Whereas Goodman focuses on the more physiological end of things – sound’s capacity both to cause physical harm (deafness, hearing loss, miscarriages etc) and to produce more subtle autonomic or affective responses (fear, desire and so on) – Daughtry is also concerned with questions of psychology and the ways in which our experience even of weaponised sound is necessarily mediated by our histories as listeners. For Daughtry, the problem of acoustic violence always entails a spectrum between listening and raw exposure.

These scholarly interventions are really important, I think (even though neither Goodman nor Daughtry are interested in drawing out the legal dimensions of their work). Because although it’s true that sound’s capacity to wound provides a certain urgency to the debate around the political and legal dimensions of the contemporary soundscape, it’s important not to allow this to become the only framework for the discussion. And that certainly seems to have happened with the LRAD.

Of course, the LRAD’s capacity to do irreparable physiological harm matters. Karen Piper now has permanent hearing loss, and I’m sure she’s not the only one. But that shouldn’t be where the conversation around this and other similar devices begins and ends. The police and the military have always been able to hurt people. It’s the LRAD’s capacity to coerce and manage the location and movements of bodies by means of sheer acoustic force – and specifically, by exploiting the peculiar sensitivity of the human ear to mid-to-high pitch frequencies at loud volumes – that’s new. To me, the LRAD is at its most politically troubling precisely to the extent that it falls just short of causing injury. Whether or not lasting injury results, those in its way will have been subjected to the ‘sonic dominance’ of the state.

So we should be extremely wary of the discourse of ‘non-lethality’ that is being mobilised to justify these kinds of technologies, to convince us that they are somehow more humane than the alternatives: the lesser of two evils, more palatable than bullets and batons. The LRAD renders everyone before it mute biology. It erases subjectivity to work directly on the vulnerable ear. And that strikes me as something worthy of our political and legal attention.

English: I couldn’t agree more. These conversations need to push further outward into the blurry unknown edges if we’re to realise any significant development in how the nature of sound is theorised and analysed moving forward into the 21st century. Recently I have been researching the shifting role of the siren, from civil defence to civil assault. I’ve been documenting the civil defence sirens in Los Angeles county and using them as a starting point from which to trace this shift towards a weaponisation of sound. The jolt out of the cold war into the more spectre-like conflicts of this century has been like a rupture and the siren is one of a number of sonic devices that I feel speak to this redirection of how sound’s potential is considered and applied in the everyday.

—

Featured Image: San Francisco 9th Circuit Court of Appeals Jury Box, Image by Flickr User Thomas Hawk, (CC BY-NC-ND 2.0)

—

REWIND! . . . If you liked this post, you may also dig:

Sounding Out! Podcast #60: Standing Rock, Protest, Sound and Power (Part 1)–Marcella Ernest

The Noises of Finance–N. Adriana Knouf

Learning to Listen Beyond Our Ears: Reflecting Upon World Listening Day–Owen Marshall

Beyond the Grandiose and the Seductive: Marie Thompson on Noise

Catastrophic Listening

Welcome back to Hearing the UnHeard, Sounding Out!’s series on how the unheard world affects us, which started out with my post on the hearing ranges of animals, and now continues with this exciting piece by China Blue.

From recording the top of the Eiffel Tower to the depths of the rising waters around Venice, from building fields of robotic crickets in Tokyo to lofting 3D-printed ears with binaural mics in a weather balloon, China Blue is as much an acoustic explorer as a sound artist. While her works are publicly accessible, shown in museums and galleries around the world, she searches for inspiration in acoustically inaccessible sources, sometimes turning sensory possibilities on their head by sonifying the visual or reformatting sounds to make the inaudible audible.

In this installment of Hearing the UnHeard, China Blue talks about cataclysmic sounds we might not survive hearing and her experiences recording simulated asteroid strikes at NASA’s Ames Vertical Gun Range.

— Guest Editor Seth Horowitz

—

Fundamentally speaking, sound is the result of something banging into something else. And since everything in the universe, from the slow recombination of chemicals to the hypervelocity impacts of asteroids smashing into planet surfaces, is ultimately the result of things banging into things, the entire universe has a sonic signature. But because of the huge difference in scale of these collisions, some things remain unheard without very specialized equipment. And others, you hope you never hear.

Unheard sounds can be hidden subtly beneath your feet, like the microsounds of ants walking, or they can be unexpectedly harmonic, like the seismic vibrations of a huge structure such as the Eiffel Tower. These are sounds that we can explore safely, using audio editing tools to integrate them into new musical or artistic pieces.

Luckily, our experience with truly primal sounds, such as the explosive shock waves of the asteroid impacts that shaped most of our solar system (including the Earth), is rarer. Those who have been near a small example of such an event, such as the residents of Chelyabinsk, Russia, in 2013, were probably less interested in the sonic event and more interested in surviving the experience.

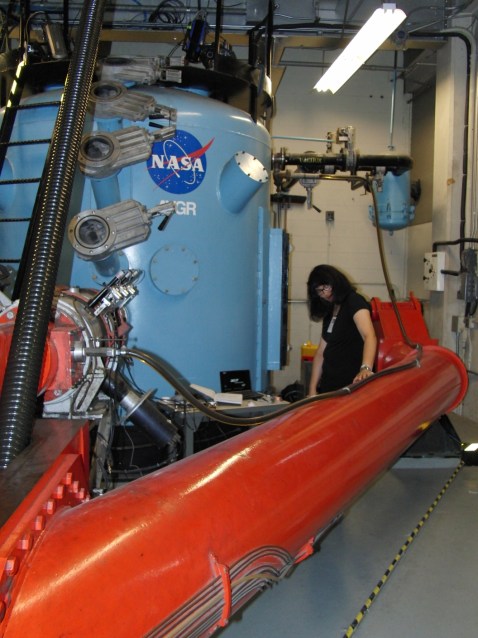

But there remains something seductive about being able to hear sounds such as the cosmic rain of fire and ice that shaped our planet billions of years ago. A few years ago, when I became fascinated with sounds “bigger” than humans normally hear, I was able to record simulations of these impacts in one of the few places on Earth where you can: the NASA Ames Vertical Gun Range.

The Vertical Gun at Ames Research Center (AVGR) was designed to conduct scientific studies of lunar impacts. It consists of a 25-foot-long gun barrel with a powder chamber at one end and, at the other, a target chamber about 8 feet in diameter and height, painted bright blue, that looks like the nose of an upended submarine. The chamber’s steel walls are thick enough to let its interior be pumped down to vacuum levels close to that of outer space, or back-filled with various gases to simulate different planetary atmospheres. Using hydrogen and/or up to half a pound of gunpowder, the AVGR can launch projectiles at astonishing speeds of 500 to 7,000 m/s (1,100 to 16,000 mph). By varying the gun’s angle of elevation, projectiles can be shot into the target to simulate impacts from overhead or at skimming angles.

In other words, it’s a safe way to create cataclysmic impacts, and then analyze them using million-frame-per-second video cameras, without leaving the security of Earth.

My husband, Dr. Seth Horowitz, who is an auditory neuroscientist and another devotee of sound, is close friends with one of the principal investigators of the Ames Vertical Gun, Professor Peter Schultz. Schultz is well known for his 2005 project to blow a hole in the comet Tempel 1 to analyze its composition, and for his involvement in the LCROSS mission that smashed into the south pole of the moon to look for evidence of water. During one conversation discussing the various analytical techniques they use to understand impacts, I asked, “I wonder what it sounds like.” As sound is the propagation of energy by matter banging into other matter, this seemed like the ultimate opportunity to record a “Big Bang” that wouldn’t actually get you killed by flying meteorite shards. Thankfully, my husband and I were invited to come to Ames to find out.

I had a feeling that the AVGR would produce fascinating new sounds that might provide us with different insights into impacts than the more common visual techniques. Because this was completely new research, we used a number of different microphones, sensitive to different ranges and types of sound and vibration, to provide us with a selection of recording results. As an artist, I found the research to be the dominant part of the work, because the processes of capturing and analyzing the sounds were a feat unto themselves. As we prepared for the experiment, I thought about what I could do with these sounds. When I eventually create a work out of them, I anticipate using them in an installation that would trigger impact sounds when people enter the room, but I have not yet mounted this work, since I suspect most exhibition spaces would find it too frightening to host.

Part of my love (and frustration) for sound work is figuring out how best to capture that fleeting moment in which the sound is just right, when the sound evokes a complex response from its listeners without even having to be explained. The sound of Mach 10 impacts and their effects on the environment had such possibilities. In pursuit of the “just right,” we wired up the gun and chamber with multiple calibrated acoustic and seismic microphones, fed them into a single high-speed multichannel recorder, pressed “record” and made for the “safe” room while the Big Red Button was pressed, launching the first impactor. We recorded throughout the day, changing the chamber’s conditions from vacuum to atmosphere.

When we finally got to listen that afternoon, we heard things we never imagined. Initial shots in vacuum were surprisingly dull. The seismic microphones picked up the “thump” of the projectile hitting the sand target and a few pattering sounds as secondary particles struck the surfaces. There were, of course, no sounds from the boundary or ultrasonic mics, since there was no air to propagate sound waves. While these shots were scientifically useful (they demonstrated that we could identify specific impact events launched from the target), they weren’t very acoustically dramatic.

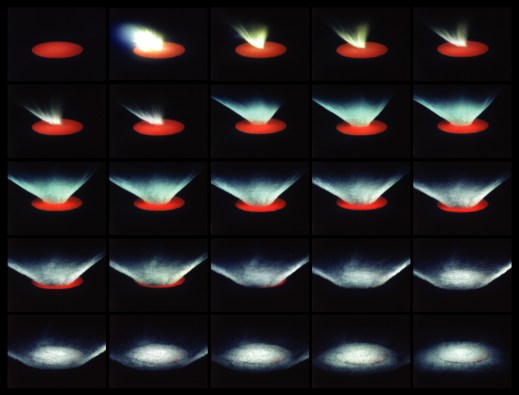

When a little atmosphere was added, however, we began picking up subtle sounds, such as the impact and early spray of particles on the boundary mic and, on the pitch-shifted ultrasonic mic, evidence of an air leak. But when the chamber was filled with an earthlike atmosphere and the target dish filled with tiny toothpicks to simulate trees, building the scenario for a tiny Tunguska event (the 1908 airburst of an asteroid or comet fragment over Siberia, the largest impact event in recorded history), the sound was stunning:


After the initial explosion, there was a sandstorm as the particles of sand from the target flew about at Mach 5 (destroying one of the microphones in the process), giving us a simulation of a major asteroid explosion.

Some 66 million years ago, in a swampy area by the Yucatán Peninsula, something like this probably occurred, when a six-mile-wide rock burned through the atmosphere to strike the water, ending the 135-million-year reign of the dinosaurs. Perhaps it sounded a little like this simulation:


Any living thing that heard this (dinosaurs, birds, frogs, insects) is long gone. By thinking about the event through new sounds, however, we can not only create new ways to analyze natural phenomena, but also extend the boundaries of our ability to listen across time and space and imagine what the sound of that impact might have been like, from an infrasonic rumble to a killing concussion.

It would probably terrify any listener to walk into an art exhibition space filled with nothing but the sounds of simulated hypervelocity impacts, replete with loud, low-frequency sounds and infrasonic vibrations. But there is something to that terror. Such sounds trigger ancient evolutionary pathways that are still with us because they were so good at helping us survive similar events, making us run and put as much distance as possible between ourselves and the cataclysmic source. That response lingers even in safe reproductions, resynthesized from controlled, captured sources.

__

China Blue is a two-time NASA/RI Space Grant recipient and an internationally exhibiting artist who was the first person to record the Eiffel Tower in Paris, France, and NASA’s Vertical Gun. Her acoustic work led to her selection as the US representative at OPEN XI in Venice, Italy, and at the Tokyo Experimental Art Festival in Tokyo, Japan, and she was the featured artist for the 2006 annual meeting of the Acoustical Society of America. Reviews of her work have been published in the Wall Street Journal, New York Times, Art in America, Artforum, artCritical and NY Arts, to name a few. She has been an invited speaker at Harvard, Yale, MIT, Berklee College of Music, Reed College and Brown University. She is the Founder and Executive Director of The Engine Institute www.theengineinstitute.org.

__

Featured Image of a high-speed impact recorded by AVGR. Image by P. H. Schultz. Via Wikimedia Commons.

__

REWIND! If you liked this post, check out …

Cauldrons of Noise: Stadium Cheers and Boos at the 2012 London Olympics–David Hendy

Learning to Listen Beyond Our Ears–Owen Marshall

Living with Noise–Osvaldo Oyola
