Sounding Out! Unplugged: “Power in Listening” (August 2026)

Hello listeners + readers!

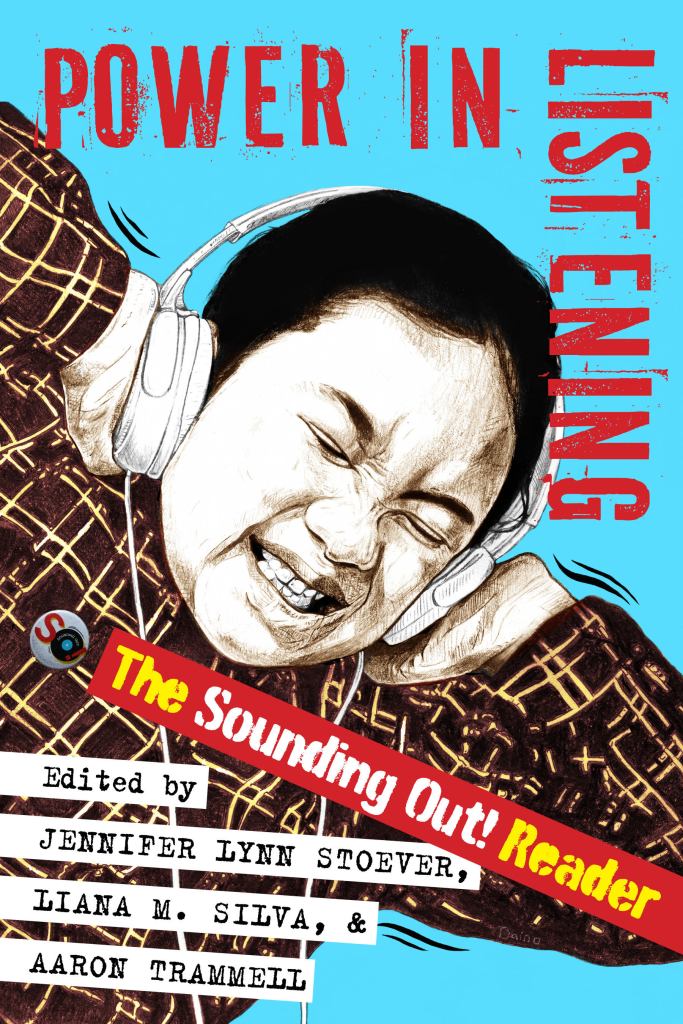

We usually take July as a BYE month to celebrate our yearly blog-o-versary, but this year, we are going bigger! Team SO! is pausing for the full summer–June, July, AND August–to catch our breath in advance of the publication of the official Sounding Out! print anthology, Power in Listening (New York University Press) on August 25, 2026 (although you can pre-order now, if you’d like at Indiepubs, direct from NYU, and other book outlets). This book is a long time coming and we are really proud of what we have put together. It’s a fresh mix of brand new essays with fan-favorites that have been revised, expanded and fully updated to the present, with an introduction by the editorial collective and a forward by SO!‘s very own Neil Verma.

Power in Listening is a love letter to everyone who has participated in the ongoing collective project of the blog over our first 15 years and a fantastic way to kick off the future together. Like the blog, it’s sharp, accessible, gorgeously written, diverse, and ready for the classroom, the library, the beach, public transit, the coffee shop, the couch on a rainy day, the club . . .wherever you love to read, but now you can be unplugged too, which we all need more than ever. Scroll down for more details about the book, including a full author list!

Enjoy the coming months–the book will be out there catching eyes in August and the blog will grab your ears once again this September! Please help us spread the word about the book– we’d love it if you’d tell two friends, so that they two friends, and so on, and so on, and so on. . .or you can share social media, whatever works for you!

Thank you and SO! looking forward–see you in September!

JLS, SO! Ed-in-Chief

P.S. Details to come on release parties, conference events, speaking engagements, podcasts, broadcasts, and all that good stuff! If you’d like us to come out your way to talk about the book, the blog, and all things sound, we have a Google form for that! Contact NYU Press at this link if you are interested in reviewing the book on your publication: https://nyupress.org/resourcesold/for-media/

—

How listening shapes power

Power in Listening explores how listening shapes—and is shaped by—power. From the politics of “sad girl” Spotify playlists to the sonic architectures of surveillance and the gendered voices of Siri and Alexa, this collection investigates how sound and listening inform identity, embodiment, and social life. How does Beyoncé’s remix of her “elevator incident” expose the surveillance of Black bodies? How do deaf listeners use multiple senses to navigate sound? How are Latina voices racialized through ideas of volume and tone?

Building from the groundbreaking Sounding Out! blog, Power in Listening curates 36 new, revised, and expanded essays from scholars, artists, DJs, and activists across more than twenty disciplines. Together, they trace how auditory culture intersects with race, gender, sexuality, technology, and media—from radio and tape to streaming and AI.

Accessible yet rigorous, this reader reveals sound studies in motion: a field that listens as a form of inquiry, protest, and care. Each essay connects theory and everyday experience, offering tools to hear the world—and each other—more critically. Power in Listening invites readers to experience listening as a social practice, a political act, and a method of understanding one’s place within a resonant and contested public sphere.

—

Authors

Neil Verma, Nichole Prucha, Rami Stucky, Max Abner, Ola Mohammed, Christie Zwahlen, Art Blake, Liana Silva, Maria Chaves Daza, Tara Betts, Marlén Ríos, Kimberly Williams, Samantha Ege, Aaron Trammell, Christina Giacona, Andrew Salvati, Kemi Adeyemi, Enongo Lumumba-Kasongo, Andreas Pape, AO Roberts, Milena Droumeva, Steph Ceraso, Linda O’Keeffe, Michael Levine, Amanda Gutierrez, Asa Mendelsohn, Rebecca Lentjes, Priscilla Peña Ovalle, Justin Burton, Gustavus Stadler, Dolores Inés Casillas, Jennifer Lynn Stoever, Chris Chien, Benjamin Tausig, Hubert Gendron-Blais, Maile Costa Colbert, and Dustin Tahmahkera

Section Titles and Topics

- Sonic Presents

- Putting The “I” in Listening: Memoir as Method

- The Sound You Make Is Not Your Own: Our Social Voices

- “Hop With It, Rock With It”: Listening to Popular Culture

- Bits and Screeches: Technology and Sound

- Hitting the Streets: Space, Place, and Sound

- Panaudicism: Sound and Surveillance

- Listening While White: Sound and Racial Privilege

- “Can You Hear Me Now?”: Sound, Agency, and Activism

—

What folks are saying. . .

Spotlighting the work of emerging scholars under innovative rubrics like space, gender, time, race, and power, Power in Listening curates an impressive array of authors and disciplinary approaches of the highest caliber. This is a welcome, fresh take on the field of sound studies. ~Roshanak Kheshti, author of Modernity’s Ear: Listening to Race and Gender in World Music

From voice and memoir to technology, space, race, surveillance, and activism, Power in Listening centers captivating soundworkers. and shows how listening can unsettle hierarchies and make new worlds audible. This sharply curated collection brings together newly revised classics from the blog as well as bold new essays that treat listening not as neutral perception, but as a site of power, struggle, pleasure, and possibility. Smart, generous, and unapologetically loud, this book doesn’t just reflect a field. It changes how you hear it. ~Karen Tongson, author of Norm Porn: Queer Viewers and the TV That Soothes Us

Not only chronicles the dynamism of the field of sound studies, but also beckons readers to find the listening experience to be an unmistakably political social practice. Power in Listening is an exceptional achievement, uniting scholars and artists across countless disciplines to foster conversations and new scholarship for years to come. ~Iván Ramos, author of Unbelonging: Inauthentic Sounds in Mexican and Latinx Aesthetics

—

Jennifer Lynn Stoever is Associate Professor of English at Binghamton University, founding Editor-in-Chief of Sounding Out!, and author of The Sonic Color Line: Race and the Cultural Politics of Listening.

Liana Silva is Managing Editor of Sounding Out! She is a teacher, writer, reader, and editor living in Houston, TX. She graduated from Binghamton University’s Department of English in 2012. In the past she was Editor-in-Chief of the professional publication Women in Higher Education.

Aaron Trammell is Assistant Professor of Informatics and Core Faculty in Visual Studies at UC Irvine and author of Repairing Play: A Black Phenomenology and The Privilege of Play. He is Editor-in-Chief of the journal Analog Games Studies and was an honoree of the hobby game industry’s prestigious Diana Jones Award.

You Got Me Feelin’ Emotions: Singing Like Mariah

Mariah Carey’s New Year’s Eve 2016 didn’t go so well. The pop diva graced a stage in the middle of Times Square as the clock ticked down to 2017 on Dick Clark’s Rockin New Year’s Eve, hosted by Ryan Seacrest. After Carey’s melismatic rendition of “Auld Lang Syne,” the instrumental for “Emotions” kicked in and Carey, instead of singing, informed viewers that she couldn’t hear anything. What followed was five minutes of heartburn. Carey strutted across the stage, hitting all her marks along with her dancers but barely singing. She took a stab at a phrase here and there, mostly on pitch, unable to be sure. And she narrated the whole thing, clearly perturbed to be hung out to dry on such a cold night with millions watching. I imagine if we asked Carey about her producer after the show, we’d get a “I don’t know her.”

These things happen. Ashlee Simpson’s singing career, such as it was, screeched to a halt in 2004 on the stage of Saturday Night Live when the wrong backing track cued. Even Queen Bey herself had to deal with lip syncing outrage after using a backing track at former President Barack Obama’s second inauguration. So the reaction to Carey, replete with schadenfreude and metaphorical pearl-clutching, was unsurprising, if also entirely inane. (The New York Times suggested that Carey forgot the lyrics to “Emotions,” an occurrence that would be slightly more outlandish than if she forgot how to breathe, considering it’s one of her most popular tracks). But yeah, this happens: singers—especially singers in the cold—use backing tracks. I’m not filming a “leave Mariah alone!!” video, but there’s really nothing salacious in this performance. The reason I’m circling around Mariah Carey’s frosty New Year’s Eve performance is because it highlights an idea I’m thinking about—what I’m calling the “produced voice” —as well as some of the details that are a subset of that idea; namely, all voices are produced.

I mean “produced” in a couple of ways. One is the Judith Butler way: voices, like gender (and, importantly, in tandem with gender), are performed and constructed. What does my natural voice sound like? I dunno. AO Roberts underlines this in a 2015 Sounding Out! post: “we’ll never really know how we sound,” but we’ll know that social constructions of gender helped shape that sound. Race, too. And class. Cultural norms make physical impacts on us, perhaps in the particular curve of our spines as we learn to show raced or gendered deference or dominance, perhaps in the texture of our hands as we perform classed labor, or perhaps in the stress we apply to our vocal cords as we learn to sound in appropriately gendered frequency ranges or at appropriately raced volumes. That cultural norms literally shape our bodies is an important assumption that informs my approach to the “produced voice.” In this sense, the passive construction of my statement “all voices are produced” matters; we may play an active role in vibrating our vocal cords, but there are social and cultural forces that we don’t control acting on the sounds from those vocal cords at the same moment.

Another way I mean that all voices are produced is that all recorded singing voices are shaped by studio production. This can take a few different forms, ranging from obvious to subtle. In the Migos song “T-Shirt,” Quavo’s voice is run through pitch-correction software so that the last word of each line of his verse (ie, the rhyming words: “five,” “five,” “eyes,” “alive”) takes on an obvious robotic quality colloquially known as the AutoTune effect. Quavo (and T-Pain and Kanye and Future and all the other rappers and crooners who have employed this effect over the years) isn’t trying to hide the production of his voice; it’s a behind-the-glass technique, but that glass is transparent. Less obvious is the way a voice like Adele’s is processed. Because Adele’s entire persona is built around the natural power of her voice, any studio production applied to it—like, say, the cavernous reverb and delay on “Hello” —must land in a sweet spot that enhances the perceived naturalness of her voice.

Vocal production can also hinge on how other instruments in a mix are processed. Take Remy Ma’s recent diss of Nicki Minaj, “ShETHER.” “ShETHER”’s instrumental, which is a re-performance of Nas’s “Ether,” draws attention to the lower end of Remy’s voice. “Ether” and “ShETHER” are pitched in identical keys and Nas’s vocals fall in the same range as Remy’s. But the synth that bangs out the looping chord progression in “ShETHER” is slightly brighter than the one on “Ether,” with a metallic, digital high end the original lacks. At the same time, the bass that marks the downbeat of each measure is quieter in “ShETHER” than it is in “Ether.” The overall effect, with less instrumental occupying “ShETHER”’s low frequency range and more digital overtones hanging in the high frequency range, causes Remy Ma’s voice to seem lower, manlier, than Nas’s voice because of the space cleared for her vocals in the mix. The perceived depth of Remy’s produced voice toys with the hypermasculine nature of hip hop beefs, and queers perhaps the most famous diss track in the genre. While engineers apply production effects directly to the vocal tracks of Quavo and Adele to make them sound like a robot or a power diva, the Remy Ma example demonstrates how gender play can be produced through a voice by processing what happens around the vocals.

Let’s return to Times Square last New Year’s Eve to consider the produced voice in a hybrid live/recorded setting. Carey’s first and third songs “Auld Lang Syne” and “We Belong Together”) were entirely back-tracked—meaning the audience could hear a recorded Mariah Carey even if the Mariah Carey moving around on our screen wasn’t producing any (sung) vocals. The second, “Emotions,” had only some background vocals and the ridiculously high notes that young Mariah Carey was known for. So, had the show gone to plan, the audience would’ve heard on-stage Mariah Carey singing along with pre-recorded studio Mariah Carey on the first and third songs, while on-stage Mariah Carey would’ve sung the second song entirely, only passing the mic to a much younger studio version of herself when she needed to hit some notes that her body can’t always, well, produce anymore. And had the show gone to plan, most members of the audience wouldn’t have known the difference between on-stage and pre-recorded Mariah Carey. It would’ve been a seamless production. Since nothing really went to plan (unless, you know, you’re into some level of conspiracy theory that involves self-sabotage for the purpose of trending on Twitter for a while), we were all privy to a component of vocal production—the backing track that aids a live singer—that is often meant to go undetected.

The produced-ness of Mariah Carey’s voice is compelling precisely because of her tremendous singing talent, and this is where we circle back around to Butler. If I were to start in a different place–if I were, in fact, to write something like, “Y’all, you’ll never believe this, but Britney Spears’s singing voice is the result of a good deal of studio intervention”–well, we wouldn’t be dealing with many blown minds from that one, would we? Spears’s career isn’t built around vocal prowess, and she often explores robotic effects that, as with Quavo and other rappers, make the technological intervention on her voice easy to hear. But Mariah Carey belongs to a class of singers—along with Adele, Christina Aguilera, Beyoncé, Ariana Grande—who are perceived to have naturally impressive voices, voices that aren’t produced so much as just sung. The Butler comparison would be to a person who seems to fit quite naturally into a gender category, the constructed nature of that gender performance passing nearly undetected. By focusing on Mariah Carey, I want to highlight that even the most impressive sung voices are produced, and that means that we can not only ask questions about the social and cultural impact of gender, race, class, ability, sexuality, and other norms may have on those voices, but also how any sung voice (from Mariah Carey’s to Quavo’s) is collaboratively produced—by singer, technician, producer, listener—in relation to those same norms.

Being able to ask those questions can get us to some pretty intriguing details. At the end of the third song, “We Belong Together,” she commented “It just don’t get any better” before abandoning the giant white feathers that were framing her onstage. After an awkward pause (during which I imagine Chris Tucker’s “Don’t cut to me!” face), the unflappable Ryan Seacrest noted, “No matter what Mariah does, the crowd absolutely loves it. You can’t go wrong with Ms. Carey, and those hits, those songs, everybody knows.” Everybody knows. We didn’t need to hear Mariah Carey sing “Emotions” that night because we could fill it all in–everybody knows that song. Wayne Marshall has written about listeners’ ability to fill in the low frequencies of songs even when we’re listening on lousy systems—like earbuds or cell phone speakers—that can’t really carry it to our ears. In the moment of technological failure, whether because a listener’s speakers are terrible or a performer’s monitors are, listeners become performers. We heard what was supposed to be there, and we supplied the missing content.

Sound is intimate, a meeting of bodies vibrating in time with one another. Yvon Bonenfant, citing Stephen Connor’s idea of the “vocalic body,” notes this physicality of sound as a “vibratory field” that leaves a vocalizer and “voyages through space. Other people hear it. Other people feel it.” But in the case of “Emotions” on New Year’s Eve, I heard a voice that wasn’t there. It was Mariah Carey’s, her vocalic body sympathetically vibrated into being. The question that catches me here is this: what happens in these moments when a listener takes over as performer? In my case, I played the role of Mariah Carey for a moment. I was on my couch, surrounded by my family, but I felt a little colder, like I was maybe wearing a swimsuit in the middle of Times Square in December, and my heart rate ticked up a bit, like maybe I was kinda panicked about something going wrong, and I heard Mariah Carey’s voice—not, crucially, my voice singing Mariah Carey’s lyrics—singing in my head. I could feel my vocal cords compressing and stretching along with Carey’s voice in my head, as if her voice were coming from my body. Which, in fact it was—just not my throat—as this was a collaborative and intimate production, my body saying, “Hey, Mariah, I got this,” and performing “Emotions” when her body wasn’t.

By stressing the collaborative nature of the produced voice, I don’t intend to arrive at some “I am Mariah” moment that I could poignantly underline by changing my profile picture on Facebook. Rather, I’m thinking of ways someone else’s voice is could lodge itself in other bodies, turning listeners into collaborators too. The produced voice, ultimately, is a way to theorize unlikely combinations of voices and bodies.

—

Featured image: By all-systems-go at Flickr, CC BY-SA 2.0, via Wikimedia Commons

—

Justin Adams Burton is Assistant Professor of Music at Rider University, and a regular writer at Sounding Out! You can catch him at justindburton.com.

—

REWIND! . . .If you liked this post, you may also dig:

Gendered Sonic Violence, from the Waiting Room to the Locker Room-Rebecca Lentjes

I Can’t Hear You Now, I’m Too Busy Listening: Social Conventions and Isolated Listening–Osvaldo Oyola

One Nation Under a Groove?: Music, Sonic Borders, and the Politics of Vibration-Marcus Boon

Recent Comments