The Absurdity and Authoritarianism of Now: My Chemical Romance’s The Black Parade Resonates Queerly, Anew

My Chemical Romance fans are speculating about new music after the band wiped their X account clean and wrapped up the Latin America leg of their tour this month. Whether another album is on the way or not, MCR’s magnum opus – The Black Parade – continues to endure two decades later. The sound now resonates through MCR’s commentary on fascism, critiquing the nation while holding the crowd accountable for our participation or passivity.

As the band tours The Black Parade for its 20th anniversary, the members have rejected nostalgia in favor of a new storyline, playing alter-ego characters who have been conditioned to perform for a dictator. The plot, slightly shifting night to night, encourages fans to follow along.

In the first run of the tour, each concert paused midway for an “election.” Four hooded figures walked out to the center stage. Nearby, an army official watched. Singer Gerard Way asked the audience to vote: Support these candidates or reject them? Each person in the crowd had a sign. One side: YEA. The other side: NAY.

“Now we need to hear you,” Way says. The audience roars, jeering and cheering.

No matter the spectators’ will, the result is the same: execution.

The warped election – and the sound of The Black Parade – reflects the absurdism of authoritarianism. Here is a democracy that ends in death, regardless of how you vote. Viewers log into livestreams to watch the fanfare repeat.

MCR’s commentary echoes the mounting authoritarianism and upheaval in the United States:

- The Supreme Court cleared the way for immigration agents to use race as a reason to stop and check someone’s citizenship documents. Daily ICE arrests reached a decade high last year. 2025 also marked the deadliest year for people in ICE custody in two decades.

- Protests have swept the nation, with outrage further increasing after federal agents killed two Minneapolis demonstrators this January.

- Cuts and changes to Medicaid and SNAP are on their way. The longest government shutdown also ended without extending subsidies for health care.

- The Epstein files were released, showing ties between powerful leaders and sex offender Jeffrey Epstein.

- The Department of Government Efficiency, named after an outdated Internet meme (DOGE), has resulted in the elimination of at least 290,000 jobs.

- The U.S. withdrew from the Paris Agreement and the World Health Organization. The country also pulled out of 66 international organizations.

- Trump continues to enact steep tariffs after a Supreme Court ruling against his policy.

- Layoffs soared past 1.1 million in 2025, the highest total since 2020’s COVID-19 shutdown.

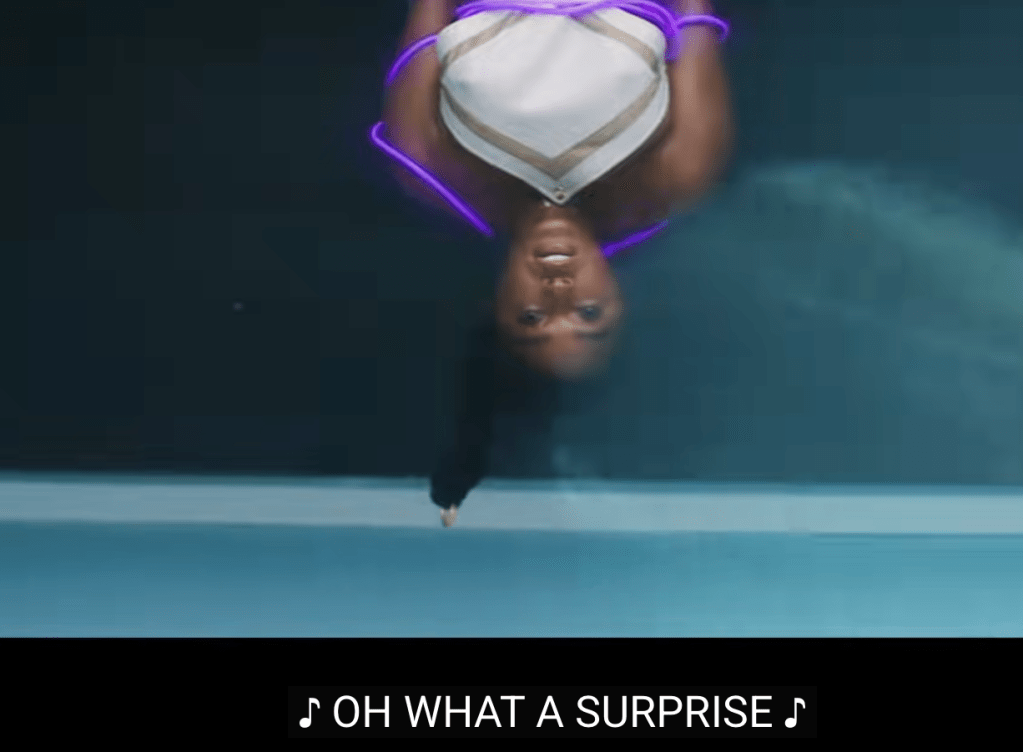

This list represents a fraction of stories and executive orders in 2025; a “flood the zone” strategy overwhelmed the news cycle. Despite censorship efforts – such as the federal defunding of NPR and PBS – much of this information is available to us at the click of a button. State-sanctioned propaganda even encourages the spectacle. Just take a look at footage of ICE raids on the White House’s TikTok account, set to songs and images of pop stars.

Yet fatigue and fearmongering keep many complacent; looming economic crises also draw our attention and time. I do not mean to diminish people’s efforts of resistance. But I am interested in the way technology has expanded our potential to witness violence, without ever requiring us to act – as well as the power of entertainment to distract.

So I turn back to MCR’s spectacle, which twists and mirrors this descent into fascism. Up against the tour’s faux-executions, the boos and the cheers of the crowd collapse into a cacophony. I locate the sound of our times within these screams, where dissent seems to go unheard and melds into the chorus of support.

The Black Parade is full of these bombastic wails, but the most intense of them are in “Mama,” a song that balloons into an extended eight-minute performance in the Long Live the Black Parade Tour. Within “Mama,” I try to make some sense of these screams, finding queer resonance with the present moment.

With my analysis, I ask: How might we hold fascist figures to account without squirming out of our own role in the daily exchange of authoritarianism? I argue My Chemical Romance’s 2025-2026 performance of “Mama” highlights structures of violence but also criticizes our participation within them.

In “Mama,” the indulgence of sin is contagious. It’s loud. It’s repeated: “Mama, we all go to hell.” This refrain, set to a polka beat, creates an abject intimacy around our shared fate. Queer listeners have interpreted this song as an angry anthem against the rejection we face from our families and dogmatic religion. For those of us who have heard, “You’re going to hell,” the response, “We all go to hell,” serves as a relief. Verses like “You should’ve raised a baby girl, I should’ve been a better son” have been reclaimed by transgender fans.

Other fans have taken issue with these interpretations, arguing queer attachments distract from the “true” meaning of the song. Indeed, “Mama” tells the story of a soldier writing from the trenches of war, while his mother judges him from afar. But I argue that by placing a queer reading in tandem with the canonical narrative, generative meanings emerge. Queer theory provides tools to understand the role of the mother here. Mama is the title of the song. Mama is pervasive. Mama is mean. Mama is on his mind. But she is also almost entirely absent, avoiding accountability for her son’s violence and instead blaming him: “She said, ‘You ain’t no son of mine.’”

The soldier finds that the trenches are not just his domain, but also his mama’s: “Mama, we’re all full of lies…And right now, they’re building a coffin your size.” His plea to his mother helps us wonder: What about the culture that birthed this violence? In this metaphor, critiquing the mother extends to critiquing the nation.

At the climax of the song, these sentiments come through screams: 30 seconds of an extended “AHHHHHH” and a cry of “Mama, Mama, Mama.” In these wails, I find a soldier begging to share responsibility with his motherland, even as he contends with his own role in the violence. The cries puncture the polka, demonstrating that “Mama” is not a celebration, after all.

Mama sings only one line back, through the voice of theater icon Liza Minnelli: “And if you would call me a sweetheart/I’d maybe then sing you a song.” Minnelli’s voice connects the song’s sound to her role in Cabaret, a musical that mixes queer aesthetics and indulgent decadence with a foreboding rise of fascism. This allusion to Cabaret, like the tour performance, serves as a reminder: pleasure and entertainment do not always equal resistance.

In the extended version of “Mama” on tour, these lines are sung by an opera singer, whose voice, too, serves the dictator. Preceding her performance, the song briefly opens up a space for resistance. A new section of the song, debuted on the tour, includes the lyrics:

A dagger, a dagger

Please fetch me a dagger

A tool for our treasonous needs…

With tears in our eyes we collapse on the crosses

And said death be the son of us all

This war is the son of us all.

Even as Gerard Way’s character plots treason against the dictator, these new lyrics make clear: this “death” and “war” is “the son of us all.” Though we might want to pin fascism on one figure, many Americans have participated in the creation of this “death/war.” There are fatal consequences for our collective actions – or our passivity.

Ultimately, the dramatics of this song, delivered like a sinister weapon, critique violence, while ironically trapping us in its pleasures. If prayer is crying out to our father who art in heaven, “Mama” is yelling back at our mother to bring her to where she belongs: the hell in which we both exist. Given the stakes of our political moment, I argue we need to scream louder, with purpose and strategy, against our motherland.

—

Featured Image: “My Chemical Romance Live at T-Mobile Park 6/11/25,” Image by Flickr User Laura Smith CC BY-ND 4.0

—

Max Lubbers (he/she) is a first-year PhD student in the American Studies and Ethnicity department at the University of Southern California. His research centers on transgender affects and sounds. Max earned a bachelor’s degree in Journalism and Gender and Sexuality Studies from Northwestern University, with a senior thesis on butch affect. She previously worked as a journalist, with pieces in Chicago’s NPR station, Colorado Public Radio, and Windy City Times.

—

REWIND!…If you liked this post, check out:

The Sounds of Equality: Reciting Resilience, Singing Revolutions–Mukesh Kulriya

Vocal Anguish, Disinformation, and the Politics of Eurovision 2016–Maria Sonevytsky

“To Unprotect and Subserve:” King Britt Samples the Sonic Archive of Police Violence–Alex Werth
