Resounding Silence and Soundless Surveillance, From TMZ Elevator to Beyoncé and Back Again

This September, Sounding Out! challenged a #flawless group of scholars and critics to give Beyoncé Knowles-Carter a close listen, re-examining the complex relationship between her audio and visuals and amplifying what goes unheard, even as her every move–whether on MTV or in that damn elevator–faces intense scrutiny. Last Monday, you heard from Kevin Allred (Women and Gender Studies, Rutgers), who read Beyoncé’s track “No Angel” against the New York Times’ reference to Michael Brown as #noangel. You will also hear from Liana Silva (Editor, Women in Higher Education, Managing Editor, Sounding Out!), Regina Bradley (writer, scholar, and freelance researcher of African American Life and Culture), and Madison Moore (Research Associate in the Department of English at King’s College, University of London and author of How to Be Beyoncé). Today, Priscilla Peña Ovalle (English, University of Oregon) gives us full Beyoncé realness, from TMZ Elevator to Beyoncé and Back Again. –Editor-in-Chief Jennifer Stoever

—

Less than six months after Beyoncé released Beyoncé, she was momentarily silenced on the small screen when the gossip site TMZ released silent elevator security footage of a fight between her famous husband and sister. Doubly framed by the black and white of a surveillance video screen surreptitiously captured on a security guard’s camera-phone, the video’s silence left plenty of room for speculation. But the footage also revealed a woman conscious that her life is on record: Beyoncé’s body seemed to elude the camera’s full view and she emerged from the elevator with a camera-ready smile.

Like Kevin Allred in his powerful reading of “No Angel,” I could not help but rethink Beyoncé in the wake of Michael Brown’s murder. I already read Beyoncé as a sophisticated response to the visual and aural policing of black female bodies, but the closed-circuit images of Beyoncé on TMZ (and in Beyoncé) made me reconsider silence as a damning convention of video surveillance; like Aaron Trammell in “Video Gaming and the Sonic Feedback of Surveillance,” I questioned (the lack of) sound as a technique of control. When the camera-phone recording of Kajieme Powell’s murder, photographed and narrated by a community member in real-time, was released with silent surveillance footage of the alleged theft, my appreciation of Beyoncé—as a response to those silent damnations—took a new turn.

“Resounding Silence and Surveillance” argues that Beyoncé returns the media’s visual-aural gaze. Because of its pop package, the album’s artistic composition and socio-cultural merit are often underestimated. Like the silence of surveillance footage, omitting any one sensory element from Beyoncé distorts the holistic meaning. To untangle this critically complex interplay of audio and video, I analyze the visualized song “Haunted” and briefly address the single “***Flawless” to show how the artist’s triple consciousness anchors Beyoncé. She is on to us: Beyoncé is the culmination of an artist who has spent her career watching us watch her. Temporarily silenced by footage that she could not control, Beyoncé resounds that “elevator incident”—and our sonic/optic perceptions of her feminism—with a flawless remix.

“I see music. It’s more than just what I hear,” declares Beyoncé. Her voiceover runs over the black screen that opens the promotional video “Self-Titled.” Released the same day Beyoncé premiered on iTunes, “Self-Titled” directs audiences to “see the whole vision of the album.” By design, Beyoncé is an immersive experience—like watching Michael Jackson’s “Thriller” as a television event on MTV.

Because Beyoncé was born the same year the cable music channel MTV premiered, she has never known a world without the ability to “see music.” In many ways, her visual album reinvigorates the early spirit of MTV: after Beyoncé, we will “never look at music the same way again.” Though music videos exacerbate the pop single obsession that Beyoncé explicitly resists with Beyoncé, they also produce a unique kinetic connection with the listener-viewer, whose experience of sound is visually registered by the body as it processes shots and edits. This is especially true when strong imagery, rhythmic editing, and dance movements are expertly employed, as in Beyoncé.

Beyoncé deftly critiques the beauty and music/media industries that have been central to her pop success. If taken piecemeal, these critiques can be easily dismissed: the sustained gloss of her image works all too well. There is much to say on a video-by-video basis, but I focus here on the specific aural elements of “Haunted” that articulate Beyoncé’s refusal of the music industry’s status quo. This visualized rejection reveals the layers of racism and sexism that nonwhite female artists (even Beyoncé, even today) must negotiate.

Because of my personal and professional interest in music videos, I consumed Beyoncé as she intended: a sequence of MPEG-4 videos rather than AAC audio files. But it was not until I solely listened to the album that I could discern Beyoncé’s maturation as a black female multimedia pop/culture artist. One refrain from “Haunted” was especially effective:

I know if I’m onto you, I’m onto you/ Onto you, you must be on to me

The song’s ethereal quality is amplified by Boots (Jordy Asher), one of Beyoncé’s (then-unknown) collaborators with whom she shares “Haunted”’s writing and producing credit. The track builds slowly, supporting Beyoncé’s “stream of consciousness” delivery with layers of reverberation and waves of synth sounds like “Soundtrack” or the Roland TR-808 kick drum. Punches of bass accelerate the beat until Beyoncé riffs her explicit desire to create something more than a product:

The music winds to a halt, but the song is not over. Breathy, reverberating vocals transition the track and a piano is delicately introduced:

It’s what you do, it’s what you see

I know if I’m haunting you, you must be haunting me

It’s where we go, it’s where we’ll be

I know if I’m onto you, I’m onto you

Onto you, you must be on to me

At this point, the song “Haunted” is split into two videos: “Ghost” (directed by Pierre Debusschere) and “Haunted” (directed by Jonas Åkerlund). The videos’ visual differences exemplify the various points of view—from active subject to object of desire and back again—employed across Beyoncé. “Ghost”’s hypnotic visuals underscore the song’s sentiments: close-ups of Beyoncé’s immaculately lit visage soberly mouthing lyrics are intercut with medium shots of her still body swathed in floating fabric and wide shots of her athletic movements against sparse backgrounds. The ar/rhythmic cuts of “Ghost” enunciate an artistic dissatisfaction with the industry: visuals build against/with the synthetic beat, mixing Beyoncé’s kinetically intense movements with her deadpan delivery.

The fiery agency of “Ghost” sets up the chill of “Haunted,” a voyeuristic tour in which Beyoncé watches and is watched. The “knowing-ness” of her breathy refrain (“I know if I’m haunting you”) is heightened when the tempo accelerates in the song’s second half. There is much to say about “Haunted”—from the interracial family of atomic bomb mannequins to Beyoncé’s writhing boudoir choreography. Most significantly, she is the video’s voyeur and object of surveillance: her face appears on multiple television screens and her voyeur-character is regularly captured on closed-circuit footage. The “Haunted” video soundtrack features the foley and stinger sounds of a horror film, but these surveillance shots feature the low whirr of a film projector rather than silence. The silence of a moving image is so jarring that it compels us to watch differently, so much so that “silent” film scenes utilize a recorded sound of “nothing” (“room tone”) to focus the audience.

When Beyoncé finally resounded the silence of the “elevator incident,” she chose to do it through “***Flawless,” her explicit response to anti-feminist accusations. While the multifaceted anthem gained attention because of Chimamanda Ngozi Adichie’s audio, the song is uniquely infused with a kind of docu-visuality thanks to Ed McMahon’s well-known voice and the Star Search jingle. These bookends cite a young Beyoncé losing to an all-male rock band, the kind heavily programmed during MTV’s early days. The clips reinforce the album’s critique of racial and gender hierarchies while questioning the double-edged “work ethic” required to surpass them. Of course, Beyoncé pre-emptively frames this discussion for us in “Self-Titled,” a necessary step that helps audiences appreciate the many moving parts of her tour de force, including her creative business mind. So when Beyoncé swapped the audio of Adichie and McMahon for Nicki Minaj, it was no less of a feminist move. Instead, Beyoncé silences TMZ gawkers:

Of course sometimes shit go down/

When it’s a billion dollars on an elevator

She then offers herself as a medium of empowerment. Beyoncé may be part of a billion-dollar empire, but she willingly shares that pleasure with us:

I wake up looking this good

And I wouldn’t change it if I could

(If I could, if I, if I, could)

And you can say what you want, I’m the shit

(What you want I’m the shit, I’m the shit)

(I’m the shit, I’m the shit, I’m the shit)

I want everyone to feel like this tonight

God damn, God damn, God damn!

Beyoncé’s last word is an image. She and her creative team remixed the visuals of the “elevator incident”: the remix single website features black and white photos of Beyoncé and Minaj, simultaneously evoking surveillance footage and the photo booth images of a girls’ night out. Beyoncé is the work of an artist who has spent her career watching us watch her: this minor moment exemplifies Beyoncé’s multimedia resonance as an artist whose power is visible and audible across iTunes and TMZ screens alike.

Beyoncé and Nicki Minaj mugging for the camera

Thanks to Elizabeth Peterson, Charise Cheney, Loren Kajikawa, André Sirois and Jennifer Stoever for providing research and intellectual support for this essay

Priscilla Peña Ovalle is the Associate Director of the Cinema Studies Program at the University of Oregon. After studying film and interactive media production at Emerson College, she received her PhD from the University of Southern California School of Cinema-Television while collaborating with the Labyrinth Project at the Annenberg Center for Communication. She has written on MTV, Jennifer Lopez, and Beyoncé. Her book, Dance and the Hollywood Latina: Race, Sex, and Stardom (Rutgers University Press, 2011), addresses the symbolic connection between dance and the racialized sexuality of Latinas in popular culture. Her next research project explores the historical, industrial, and cultural function of hair in mainstream film and television. You can find her work in American Quarterly, Theatre Journal, and Women & Performance.

—

REWIND!…If you liked this post, check out:

Aurally Other: Rita Moreno and the Articulation of “Latina-ness”-Priscilla Peña Ovalle

Music Meant to Make You Move: Considering the Aural Kinesthetic–Imani Kai Johnson

Karaoke and Ventriloquism: Echoes and Divergences–Sarah Kessler and Karen Tongson

The Better to Hear You With, My Dear: Size and the Acoustic World

Today the SO! Thursday stream inaugurates a four-part series entitled Hearing the UnHeard, which promises to blow your mind by way of your ears. Our Guest Editor is Seth Horowitz, a neuroscientist at NeuroPop and author of The Universal Sense: How Hearing Shapes the Mind (Bloomsbury, 2012), whose insightful work brings us directly to the intersection of the sciences and the arts of sound.

That’s where he’ll be taking us in the coming weeks. Check out his general introduction just below, and his own contribution for the first piece in the series. — NV

—

Welcome to Hearing the UnHeard, a new series of articles on the world of sound beyond human hearing. We are embedded in a world of sound and vibration, but the limits of human hearing only let us hear a small piece of it. The quiet library screams with the ultrasonic pulsations of fluorescent lights and computer monitors. The soothing waves of a Hawaiian beach are drowned out by the thrumming infrasound of underground seismic activity near “dormant” volcanoes. Time, distance, and luck (and occasionally really good vibration isolation) separate us from explosive sounds of world-changing impacts between celestial bodies. And vast amounts of information, ranging from the songs of auroras to the sounds of dying neurons, can be made accessible and understandable by translating them into human-perceivable sounds through data sonification.

Four articles will examine how this “unheard world” affects us. My first post below will explore how our environment and evolution have constrained what is audible, and what tools we use to bring the unheard into our perceptual realm. In a few weeks, sound artist China Blue will talk about her experiences recording the Vertical Gun, a NASA asteroid impact simulator which helps scientists understand the way in which big collisions have shaped our planet (and is very hard on audio gear). Next, Milton A. Garcés, founder and director of the Infrasound Laboratory of the University of Hawaii at Manoa, will talk about volcano infrasound, and how acoustic surveillance is used to warn about hazardous eruptions. And finally, Margaret A. Schedel, composer and Associate Professor of Music at Stony Brook University, will help readers explore the world of data sonification, letting us listen in and get greater intellectual and emotional understanding of the world of information by converting it to sound.

— Guest Editor Seth Horowitz

—

Although light moves much faster than sound, hearing is your fastest sense, operating about 20 times faster than vision. Studies have shown that we think at the same “frame rate” as we see, about 1-4 events per second. But the real world moves much faster than this, and doesn’t always place things important for survival conveniently in front of your field of view. Think about the last time you were driving when suddenly you heard the blast of a horn from the previously unseen truck in your blind spot.

Hearing also occurs prior to thinking, with the ear itself pre-processing sound. Your inner ear responds to changes in pressure that directly move tiny hair cells organized by frequency. These cells send signals about what frequency was detected (and at what amplitude) toward your brainstem, where things like location, amplitude, and even how important the sound may be to you are processed, long before the signals reach the cortex, where you can think about them. And since hearing sets the tone for all later perceptions, our world is shaped by what we hear (Horowitz, 2012).

But we can’t hear everything. Rather, what we hear is constrained by our biology, our psychology and our position in space and time. Sound is really about how the interaction between energy and matter fills space with vibrations. This makes size (of the sender, the listener and the environment) one of the primary features that define your acoustic world.

You’ve heard about how much better your dog’s hearing is than yours. I’m sure you got a slight thrill when you thought you could actually hear the “ultrasonic” dog-training whistles that are supposed to be inaudible to humans (sorry, but every one I’ve tested puts out at least some energy in the upper range of human hearing, even if it does sound pretty thin). But it’s not that dogs hear better. Actually, dogs and humans show about the same sensitivity to sound in terms of sound pressure, with humans’ most sensitive region from 1-4 kHz and dogs’ from about 2-8 kHz. The difference is a question of range, and that is tied closely to size.

Most dogs, even big ones, are smaller than most humans and their auditory systems are scaled similarly. A big dog is about 100 pounds, much smaller than most adult humans. And since body parts tend to scale in a coordinated fashion, one of the first places to search for a link between size and frequency is the tympanum or ear drum, the earliest structure that responds to pressure information. An average dog’s eardrum is about 50 mm², whereas an average human’s is about 60 mm². In addition, while a human’s cochlea is a spiral of 2.5 turns that holds about 3500 inner hair cells, your dog’s has 3.25 turns and about the same number of hair cells. In short: dogs probably have better high frequency hearing because their eardrums are better tuned to shorter wavelength sounds and their sensory hair cells are spread out over a longer distance, giving them a wider range.

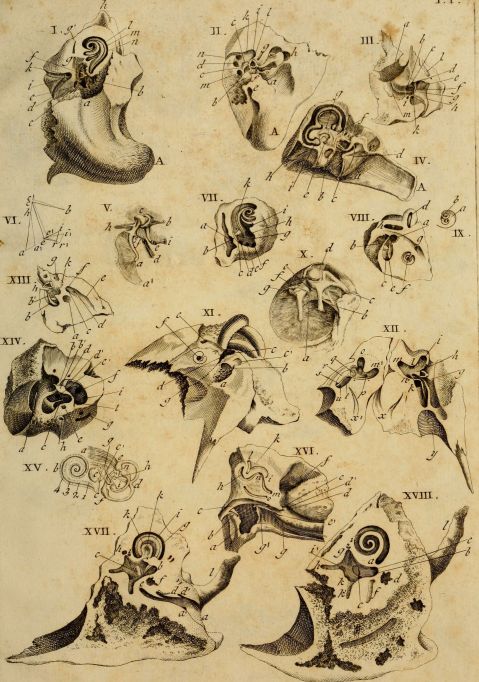

Interest in how hearing works in animals goes back centuries. Classical image of comparative ear anatomy from 1789 by Andreae Comparetti.

Then again, if hearing were just about the size of the ear components, then you’d expect that yappy 5 pound Chihuahua to hear much higher frequencies than the lumbering 100 pound St. Bernard. Yet hearing sensitivity at the two ends of the dog spectrum doesn’t vary by much. This is because there’s a big difference between what the ear can mechanically detect and what the animal actually hears. Chihuahuas and St. Bernards are both breeds derived from a common wolf-like ancestor that probably didn’t have as much variability as we’ve imposed on the domesticated dog, so their brains are still largely tuned to hear what a medium to large pseudo wolf-like animal should hear (Heffner, 1983).

But hearing is more than just detection of sound. It’s also important to figure out where the sound is coming from. A sound’s location is calculated in the superior olive – nuclei in the brainstem that compare two cues. For low frequency sounds, they compare the difference in time of arrival at your two ears. For higher frequency sounds, they compare the difference in amplitude between your ears (because your head gets in the way, making a sound “shadow” on the side of your head furthest from the sound). This means that animals with very large heads, like elephants, will be able to figure out the location of longer wavelength (lower pitched) sounds, but probably will have problems localizing high pitched sounds because the shorter wavelengths will not even get to the other side of their heads at a useful level. On the other hand, smaller animals, which often have large external ears, are under greater selective pressure to localize higher pitched sounds, but have heads too small to pick up the very low infrasonic sounds that elephants use.
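To put rough numbers on the head-size argument, the sketch below computes a sound's wavelength in air and the largest possible arrival-time difference between two ears. The speed of sound (343 m/s) is the usual figure for air at about 20 °C; the ear-to-ear distances and the function names are illustrative assumptions, not measurements from the studies cited in this post.

```python
SPEED_OF_SOUND_M_S = 343.0  # air at roughly 20 degrees C


def wavelength_m(frequency_hz):
    """Wavelength in metres of a tone in air: speed divided by frequency."""
    return SPEED_OF_SOUND_M_S / frequency_hz


def max_arrival_delay_us(ear_distance_m):
    """Largest possible time-of-arrival difference between ears, in microseconds.

    Simplification: the path between the ears is treated as a straight line
    (sound arriving from directly to one side), ignoring the curve of the head.
    """
    return ear_distance_m / SPEED_OF_SOUND_M_S * 1e6


# Hypothetical ear-to-ear distances in metres, for illustration only.
HEADS_M = {"mouse": 0.01, "human": 0.18, "elephant": 1.0}

for name, width in HEADS_M.items():
    delay = max_arrival_delay_us(width)
    print(f"{name}: largest arrival delay is about {delay:.0f} microseconds")

for freq in (20, 1000, 20000):
    print(f"{freq} Hz: wavelength is about {wavelength_m(freq):.3f} m")
```

Under these assumptions a 20 Hz tone is roughly 17 m long, far larger than any head, while a 20 kHz tone is under 2 cm, small enough for even a modest head to cast a shadow.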

Audiograms (auditory sensitivity in air measured in dB SPL) by frequency of animals of different sizes showing the shift of maximum sensitivity to lower frequencies with increased size. Data replotted based on audiogram data by Sivian and White (1933). “On minimum audible sound fields.” Journal of the Acoustical Society of America, 4: 288-321; ISO 1961; Heffner, H., & Masterton, B. (1980). “Hearing in glires: domestic rabbit, cotton rat, feral house mouse, and kangaroo rat.” Journal of the Acoustical Society of America, 68, 1584-1599.; Heffner, R. S., & Heffner, H. E. (1982). “Hearing in the elephant: Absolute sensitivity, frequency discrimination, and sound localization.” Journal of Comparative and Physiological Psychology, 96, 926-944.; Heffner H.E. (1983). “Hearing in large and small dogs: Absolute thresholds and size of the tympanic membrane.” Behav. Neurosci. 97: 310-318. ; Jackson, L.L., et al.(1999). “Free-field audiogram of the Japanese macaque (Macaca fuscata).” Journal of the Acoustical Society of America, 106: 3017-3023.

But you as a human are a fairly big mammal. If you look up “Body Size Species Richness Distribution” which shows the relative size of animals living in a given area, you’ll find that humans are among the largest animals in North America (Brown and Nicoletto, 1991). And your hearing abilities scale well with other terrestrial mammals, so you can stop feeling bad about your dog hearing “better.” But what if, by comic-book science or alternate evolution, you were much bigger or smaller? What would the world sound like? Imagine you were suddenly mouse-sized, scrambling along the floor of an office. While the usual chatter of humans would be almost completely inaudible, the world would be filled with a cacophony of ultrasonics. Fluorescent lights and computer monitors would scream in the 30-50 kHz range. Ultrasonic eddies would hiss loudly from air conditioning vents. Smartphones would not play music, but rather hum and squeal as their displays changed.

And if you were larger? For a human scaled up to elephantine dimensions, the sounds of the world would shift downward. While you could still hear (and possibly understand) human speech and music, the fine nuances from the upper frequency ranges would be lost, voices audible but mumbled and hard to localize. But you would gain the infrasonic world, the low rumbles of traffic noise and thrumming of heavy machinery taking on pitch, color and meaning. The seismic world of earthquakes and volcanoes would become part of your auditory tapestry. And you would hear greater distances as long wavelengths of low frequency sounds wrap around everything but the largest obstructions, letting you hear the foghorns miles distant as if they were bird calls nearby.

But these sounds are still in the realm of biological listeners, and the universe operates on scales far beyond that. The sounds from objects, large and small, have their own acoustic world, many beyond our ability to detect with the equipment evolution has provided. Weather phenomena, from gentle breezes to devastating tornadoes, blast throughout the infrasonic and ultrasonic ranges. Meteorites create infrasonic signatures through the upper atmosphere, trackable using a system devised to detect incoming ICBMs. Geophones, specialized low frequency microphones, pick up the extremely low frequency signals that foretell volcanic eruptions and earthquakes. Beyond the earth, we translate electromagnetic frequencies into the audible range, letting us listen to the whistlers and hoppers that signal the flow of charged particles and lightning in the atmospheres of Earth and Jupiter, and to the microwave signals left over from the Big Bang. We also send listening devices on our spacecraft to let us hear the winds on Titan.

Here is a recording of whistlers recorded by the Van Allen Probes currently orbiting high in the upper atmosphere:

When the computer freezes or the phone battery dies, we complain about how much technology frustrates us and complicates our lives. But our audio technology is also a source of wonder, not only letting us talk to a friend around the world or listen to a podcast from astronauts orbiting the Earth, but letting us listen in on unheard worlds. Ultrasonic microphones let us listen in on bat echolocation and mouse songs; geophones let us wonder at elephants using infrasonic rumbles to communicate over long distances and find water. And scientific translation tools let us shift the vibrations of the solar wind and aurora or even the patterns of pure math into human scaled songs of the greater universe. We are no longer constrained (or protected) by the ears that evolution has given us. Our auditory world has expanded into an acoustic ecology that contains the entire universe, and the implications of that remain wonderfully unclear.

__

Exhibit: Home Office

This is a recording of my home office made with standard stereo microphones. Aside from the usual typing, mouse clicking and computer sounds, there are a couple of 3D printers running and some music playing. It is largely an environment you don’t pay much attention to while you’re working in it, yet it is acoustically very rich if you listen closely.


This sample was made by pitch shifting the frequencies of sonicoffice.wav down so that the ultrasonic range moves into the normal human range and cuts off at about 1-2 kHz, as if you were hearing with mouse ears. Sounds that are normally inaudible, like the squeal of the computer monitor cycling on and the high pitched sound of the stepper motors from the 3D printer, suddenly become much louder, while the familiar sounds are mostly gone.
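One simple way to move ultrasound into the audible range is to slow playback, which divides every frequency by the same factor. The sketch below illustrates only that arithmetic. It is an assumption about one possible approach, not a description of the tool used for these samples; the shift factor of 16 and the 200 Hz speech component are hypothetical, while the 30-50 kHz monitor range comes from the text above.

```python
def shift_down(frequency_hz, factor):
    """Frequency heard after slowing playback by `factor`."""
    return frequency_hz / factor


def is_audible_to_human(frequency_hz, low_hz=20.0, high_hz=20000.0):
    """True if the frequency falls inside the nominal human hearing range."""
    return low_hz <= frequency_hz <= high_hz


FACTOR = 16  # hypothetical shift factor, chosen for illustration

SOURCES_HZ = {
    "monitor squeal (low end)": 30000.0,
    "monitor squeal (high end)": 50000.0,
    "speech component (hypothetical)": 200.0,
}

for name, original in SOURCES_HZ.items():
    shifted = shift_down(original, FACTOR)
    status = "audible" if is_audible_to_human(shifted) else "inaudible"
    print(f"{name}: {original:.0f} Hz -> {shifted:.1f} Hz ({status})")
```

With this factor the monitor squeal lands between roughly 1.9 and 3.1 kHz, while the speech component drops to 12.5 Hz, below the nominal human range, which is why the familiar sounds fade out of the shifted recording.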


This recording of the office was made with a Clarke Geophone, a seismic microphone used by geologists to pick up underground vibration. Its primary sensitivity is around 80 Hz, although its range is from 0.1 Hz up to about 2 kHz. All you hear in this recording are very low frequency sounds and impacts (footsteps, keyboard strikes, vibration from printers, some fan vibration) that you usually ignore since your ears are not very well tuned to frequencies under 100 Hz.


Finally, this sample was made by pitch shifting the frequencies of infrasonicoffice.wav up as if you had grown to elephantine proportions. Footsteps and computer fan noises (usually almost undetectable at 60 Hz) become loud and tonal, and all the normal pitch of music and computer typing has disappeared aside from the bass. (WARNING: The fan noise is really annoying.)


The point is: a space can sound radically different depending on the frequency ranges you hear. Different elements of the acoustic environment pop up depending on the type of recording instrument you use (ultrasonic microphone, regular microphones or geophones) or the size and sensitivity of your ears.

![Spectrograms (plots of acoustic energy [color] over time [horizontal axis] by frequency band [vertical axis]) from a 90 second recording in the author’s home office covering the auditory range from ultrasonic frequencies (>20 kHz top) to the sonic (20 Hz-20 kHz, middle) to the low frequency and infrasonic (<20 Hz).](https://soundstudiesblog.com/wp-content/uploads/2014/08/figure3officerange.jpg?w=479&h=637)

Featured image by Flickr User Jaime Wong.

—

Seth S. Horowitz, Ph.D. is a neuroscientist whose work in comparative and human hearing, balance and sleep research has been funded by the National Institutes of Health, National Science Foundation, and NASA. He has taught classes in animal behavior, neuroethology, brain development, the biology of hearing, and the musical mind. As chief neuroscientist at NeuroPop, Inc., he applies basic research to real world auditory applications and works extensively on educational outreach with The Engine Institute, a non-profit devoted to exploring the intersection between science and the arts. His book The Universal Sense: How Hearing Shapes the Mind was released by Bloomsbury in September 2012.

—

REWIND! If you liked this post, check out …

Reproducing Traces of War: Listening to Gas Shell Bombardment, 1918– Brian Hanrahan

Learning to Listen Beyond Our Ears– Owen Marshall

This is Your Body on the Velvet Underground– Jacob Smith
